BLEU, introduced in the paper “BLEU: a Method for Automatic Evaluation of Machine Translation”, was a major development in the field of machine translation. It aims to gauge the quality of machine translations by comparing them to human translations.

The BLEU method has become a widely accepted standard for evaluating machine translation systems, and it has been instrumental in advancing the field of natural language processing. Its significance lies in its ability to provide a quantitative measure of translation quality, which is essential for improving machine translation systems.

How BLEU Scores are Calculated

Understanding the mechanics behind BLEU scores is essential for evaluating the quality of machine translation. The BLEU score is a widely used proxy for the fluency and accuracy of machine-generated content, and its calculation involves several key components.

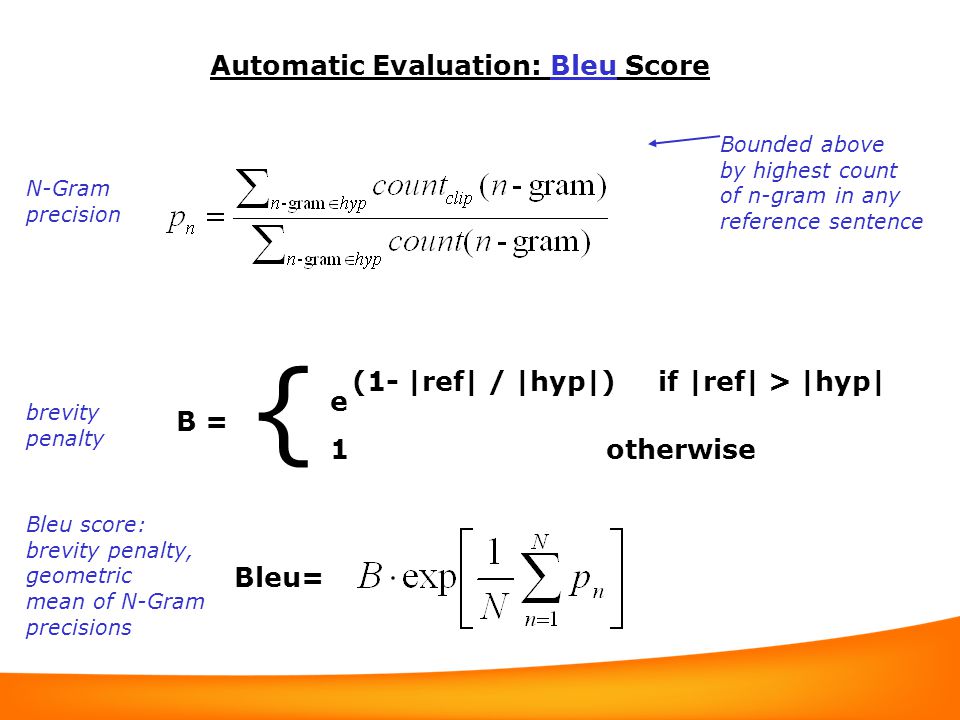

The BLEU score is calculated using the following formula:

$$\text{BLEU} = \text{BP} \cdot \left( \prod_{n=1}^{N} p(n) \right)^{1/N}$$

where BP is the brevity penalty, the second factor is the geometric mean of the n-gram precisions p(n) for n = 1 to N, and the n-gram precisions are calculated based on word n-grams.

Breaking Down the Calculation

To understand how BLEU scores are calculated, it helps to look at the individual components involved.

n-gram Precision

The n-gram precision is a measure of how closely the machine-generated content matches the reference translation. It is calculated by comparing the number of correct n-grams to the total number of n-grams in the machine-generated translation.

The precision for n-grams of length n is calculated using the following formula:

$$p(n) = \frac{\text{correct n-grams}}{\text{total n-grams in machine-generated translation}}$$

where correct n-grams is the number of n-gram occurrences in the machine-generated content that are also present in the reference translation, with each n-gram counted at most as many times as it occurs in the reference (clipping).
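The precision described above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation. It assumes a single reference, whitespace-tokenized input, and the standard clipped definition in which the denominator is the number of n-grams in the machine-generated output. The function names `ngrams` and `modified_precision` are chosen here for illustration.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return all contiguous n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def modified_precision(candidate, reference, n):
    """Clipped n-gram precision of a candidate against one reference."""
    cand_counts = Counter(ngrams(candidate, n))
    ref_counts = Counter(ngrams(reference, n))
    # Each candidate n-gram is credited at most as often as it occurs in the reference.
    matched = sum(min(count, ref_counts[gram]) for gram, count in cand_counts.items())
    total = sum(cand_counts.values())
    return matched / total if total else 0.0

candidate = "the the the the".split()
reference = "the cat is on the mat".split()
print(modified_precision(candidate, reference, 1))  # 0.5: only two "the" are credited
```

Clipping is what keeps a degenerate output that repeats one common word from reaching a perfect unigram precision.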

Brevity Penalty (BP)

The brevity penalty is a factor that is applied when the machine-generated content is shorter than the reference translation. This penalty ensures that short translations are not unfairly rewarded: because precision ignores what is missing, a very short output could otherwise score well by containing only a few safe n-grams.

The brevity penalty is calculated using the following formula:

$$\text{BP} = \begin{cases} 1 & \text{if } r / t < 1 \\ e^{\,1 - r/t} & \text{otherwise} \end{cases}$$

where r is the length of the reference translation, and t is the length of the machine-generated translation. For longer translations (t > r), the brevity penalty is 1, since there is no need to penalize longer translations.
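As a small sketch of the brevity penalty, assuming the standard exponential form exp(1 - r/t) for outputs that are no longer than the reference, with r and t as defined above. The function name and the handling of an empty output are choices made for this illustration.

```python
import math

def brevity_penalty(ref_len, cand_len):
    """Brevity penalty for reference length r and machine output length t."""
    if cand_len == 0:
        return 0.0  # assumption for this sketch: an empty output gets no credit
    if cand_len > ref_len:
        return 1.0  # longer outputs are not penalized here
    return math.exp(1 - ref_len / cand_len)

print(brevity_penalty(10, 12))           # 1.0
print(round(brevity_penalty(10, 5), 4))  # 0.3679, i.e. exp(-1)
```

An output half the length of the reference keeps only about 37% of its precision-based score under this formula.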

Geometric Mean

The geometric mean is a mathematical operation that calculates a multiplicative average of a set of numbers. In the context of BLEU scores, it is used to combine the individual n-gram precisions into a single score.

The geometric mean is calculated using the following formula:

$$\text{GM} = \left( p(1) \cdot p(2) \cdots p(N) \right)^{1/N}$$

where p(n) is the precision for n-grams of length n, and N is the largest n-gram length considered (commonly 4). Because the precisions are multiplied, a single precision of zero makes the whole score zero.
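To show how the pieces combine, here is a minimal sketch that takes already-computed precisions and a brevity penalty and returns a BLEU-style score. It assumes uniform weights over the n-gram orders and no smoothing. The precision values in the example are hypothetical numbers, not results from a real system.

```python
import math

def geometric_mean(precisions):
    """Geometric mean of n-gram precisions; zero if any precision is zero."""
    if not precisions or min(precisions) <= 0:
        return 0.0
    # Averaging the logs avoids multiplying many small numbers together.
    return math.exp(sum(math.log(p) for p in precisions) / len(precisions))

def bleu_from_parts(precisions, bp):
    """Combine a brevity penalty with the geometric mean of the precisions."""
    return bp * geometric_mean(precisions)

precisions = [0.8, 0.6, 0.4, 0.2]  # hypothetical p(1)..p(4)
print(bleu_from_parts(precisions, bp=1.0))
print(bleu_from_parts([0.8, 0.6, 0.4, 0.0], bp=1.0))  # 0.0: one zero precision zeroes the score
```

Practical implementations usually add smoothing so that a missing 4-gram match in a short sentence does not force the score to zero.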

Impact of Precision, Recall, and F-score on BLEU Scores

While the BLEU score is a widely used metric, it has some limitations. One of the key limitations is its reliance on n-gram precision, which can lead to biases in evaluation.

BLEU is built on precision; recall is accounted for only indirectly, through the brevity penalty. Without that penalty, a translation that is relatively short but made up mostly of correct n-grams could score higher than a longer translation that covers more of the reference but contains some incorrect n-grams.

The F-score, which is the harmonic mean of precision and recall, can be used to mitigate this bias. However, the F-score is not directly integrated into the BLEU score calculation.
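The difference between precision and recall can be made concrete with a small unigram example. This is a sketch under simple assumptions: one reference, whitespace tokens, and the balanced F1 form of the F-score. The helper name `unigram_prf` is introduced here for illustration.

```python
from collections import Counter

def unigram_prf(candidate, reference):
    """Return (precision, recall, F1) over unigrams with clipped counts."""
    overlap = sum((Counter(candidate) & Counter(reference)).values())
    precision = overlap / len(candidate) if candidate else 0.0
    recall = overlap / len(reference) if reference else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)

reference = "the cat is on the mat".split()
short = "the cat".split()
precision, recall, f1 = unigram_prf(short, reference)
print(precision, round(recall, 3), round(f1, 3))  # 1.0 0.333 0.5
```

The two-word output has perfect precision but covers only a third of the reference, which is the gap that recall, the F-score, or BLEU's brevity penalty is meant to expose.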

Real-World Applications of BLEU Scores

BLEU scores are widely used in machine translation research, but they have limitations in real-world applications.

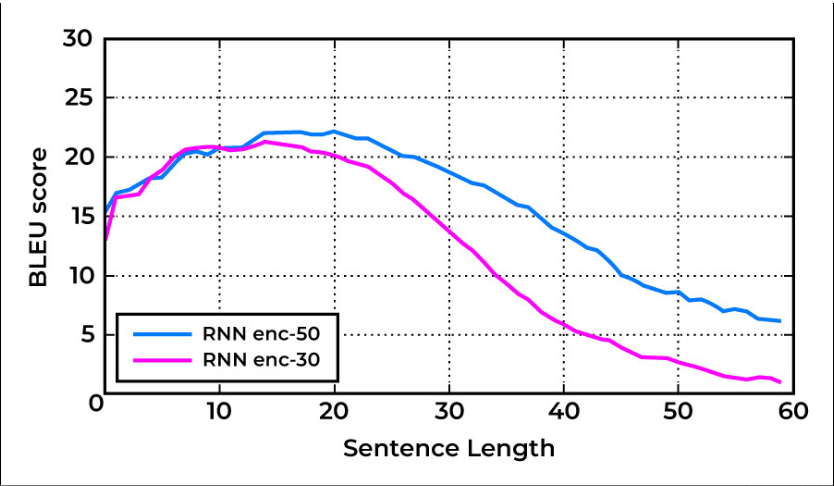

In practice, BLEU scores can be sensitive to the choice of n-gram size, and the optimal n-gram size may vary depending on the language pair and the specific translation task.

Furthermore, BLEU scores may not accurately capture the nuances of human translation, which can involve context-dependent and figurative language that is difficult to quantify.

Applications of BLEU in Machine Translation

In the world of machine translation, a multitude of evaluation metrics exists to assess the efficacy of translation systems. However, the BLEU score stands out as a widely accepted metric, employed in diverse applications to gauge the quality of translation outputs. From evaluating English translations to assessing machine translation for non-English languages like Chinese and Spanish, BLEU’s versatility has made it an indispensable tool for researchers and practitioners alike.

Language Support

BLEU’s utility is not limited to a specific language or language pair. It has been applied to various languages, including English, Chinese, and Spanish, to name a few. The metric’s flexibility comes from operating on token n-grams, so it can be applied to any language whose text can be tokenized, whatever its linguistic structures, idioms, and syntax.

In English, BLEU has been widely used to evaluate machine translation systems for a wide range of domains, including technical and literary texts. It has likewise been applied to non-English languages, with researchers adapting BLEU for Chinese and Spanish translation systems.

Tasks beyond Translation

While BLEU is primarily associated with machine translation evaluation, its applications extend beyond translation tasks. In text summarization, BLEU is used to assess machine-generated summaries against reference summaries, as a check that they reflect the essence of the original text.

Additionally, BLEU is used in question answering systems to evaluate the relevance and accuracy of generated answers against reference answers. In dialogue generation tasks, BLEU serves as a metric to assess the coherence and fluency of generated dialogue.

Research and Development

BLEU’s significance extends to the realm of machine translation research and development. In benchmarking new models, BLEU serves as a yardstick to measure their performance. By evaluating the strengths and weaknesses of a model using BLEU, researchers can make informed decisions about model improvements and optimization.

In addition, BLEU is employed in testing and evaluating the robustness of machine translation systems under various conditions, such as language variability and domain adaptation. By subjecting models to rigorous testing using BLEU, researchers can identify areas that require improvement and optimize the systems accordingly.

Limitations and Challenges of BLEU

The BLEU score, although a widely used and effective method for evaluating machine translation, is not without its limitations and challenges. As with any evaluation metric, there are certain shortcomings that must be considered when using BLEU to assess the accuracy of machine translation systems.

The primary criticism of BLEU is its reliance on n-gram precision, which focuses on measuring the overlap between the predicted translation and the reference translation. However, this approach neglects the syntax and semantics of the language, leading to a superficial assessment of the translation quality.

Reliance on N-gram Precision

BLEU’s reliance on n-gram precision has been a subject of criticism. While it is able to capture the co-occurrence of words and phrases, it fails to capture the deeper syntactic and semantic structures of language.

This is particularly problematic when dealing with non-trivial cases, where the translation may not simply be a matter of swapping out individual words, but rather re-arranging the entire sentence structure to convey the intended meaning.

Neglect of Syntax and Semantics

The BLEU score neglects the syntax and semantics of language, which can lead to a shallow assessment of translation quality. By focusing solely on n-gram precision, BLEU fails to capture the nuances of language, such as context, idioms, and figurative language.

For example, the sentences “The dog chased the cat” and “The cat was chased by the dog” convey the same meaning but share few n-grams beyond single words, so BLEU would score one poorly against the other. Conversely, “The cat chased the dog” reverses the meaning yet shares every word and most bigrams with “The dog chased the cat”. BLEU cannot capture either distinction.
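A short sketch makes the mismatch between n-gram overlap and meaning visible. It compares an active sentence used as the reference with a passive paraphrase and with a role-swapped sentence, using clipped unigram and bigram precision. The helper names are illustrative, and the sentences are lowercased and split on whitespace for simplicity.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return all contiguous n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def clipped_precision(candidate, reference, n):
    """Share of candidate n-grams that also occur in the reference (clipped)."""
    cand = Counter(ngrams(candidate, n))
    ref = Counter(ngrams(reference, n))
    matched = sum((cand & ref).values())
    total = sum(cand.values())
    return matched / total if total else 0.0

reference = "the dog chased the cat".split()
passive = "the cat was chased by the dog".split()  # same meaning, different wording
swapped = "the cat chased the dog".split()         # opposite meaning, same words

for name, cand in [("passive", passive), ("swapped", swapped)]:
    p1 = clipped_precision(cand, reference, 1)
    p2 = clipped_precision(cand, reference, 2)
    print(name, round(p1, 3), round(p2, 3))
# passive 0.714 0.333
# swapped 1.0 0.75
```

The sentence with the reversed meaning scores higher on both orders than the faithful paraphrase, which is the kind of behavior critics of n-gram metrics point to.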

Out-of-Vocabulary Words, Punctuation, and Special Characters

BLEU can also be misled by out-of-vocabulary words, which are words or phrases that do not appear in the training data. In such cases, the BLEU score may not be an accurate reflection of the translation quality, since the model may be forced to rely on context or paraphrasing to convey the intended meaning, and a paraphrase that does not match the reference wording earns no n-gram credit.

Moreover, out-of-vocabulary items can include punctuation and special characters, which are often used to disambiguate language and convey tone. How these are tokenized changes which n-grams match, so BLEU’s handling of them can lead to a misleading assessment of translation quality.
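The effect of punctuation handling can be illustrated with two tokenizers applied to the same pair of strings. This is a simplified sketch: the regular expression is one possible way to split punctuation from words, not the tokenizer of any particular BLEU tool, and `unigram_overlap` is a plain (unclipped) match count introduced for illustration.

```python
import re

def whitespace_tokens(text):
    """Split on whitespace only, so punctuation stays attached to words."""
    return text.split()

def punct_aware_tokens(text):
    """Split words and punctuation marks into separate tokens."""
    return re.findall(r"\w+|[^\w\s]", text)

def unigram_overlap(candidate_tokens, reference_tokens):
    """Count candidate tokens that appear anywhere in the reference."""
    ref = set(reference_tokens)
    return sum(1 for tok in candidate_tokens if tok in ref)

reference = "Hello, world!"
candidate = "Hello world"

print(unigram_overlap(whitespace_tokens(candidate), whitespace_tokens(reference)))    # 0
print(unigram_overlap(punct_aware_tokens(candidate), punct_aware_tokens(reference)))  # 2
```

With whitespace splitting, "Hello," and "Hello" are different tokens and nothing matches. Once punctuation is separated, both words match. This is why scores are only comparable when the same tokenization is used throughout.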

Strategies for Improving BLEU Scores

To improve BLEU scores, several strategies can be employed. One approach is to use word embeddings, which can capture the semantic relationships between words and improve the accuracy of translation.

Another approach is to use named entity recognition (NER), which can identify and disambiguate named entities in the translation, such as people, places, and organizations. This can improve the accuracy of translation and reduce the reliance on out-of-vocabulary words.

Finally, sentiment analysis can be used to capture the tone and sentiment of the original text and ensure that it is accurately conveyed in the translated text. This can help to improve the coherence and consistency of the translation.

Word Embeddings

Word embeddings are a technique used to capture the semantic relationships between words. By representing each word as a vector in a high-dimensional space, word embeddings can capture some of the nuances of language, including context, idioms, and figurative language.

Word embeddings can be used to improve BLEU scores by allowing the model to better capture the meaning of words and phrases, and to reduce the reliance on out-of-vocabulary words.
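The idea of words as vectors can be sketched with cosine similarity. The three-dimensional vectors below are hypothetical toy values chosen by hand for illustration. Real embeddings are learned from data and typically have hundreds of dimensions.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

# Hypothetical toy embeddings, not taken from any trained model.
embeddings = {
    "car": [0.9, 0.1, 0.0],
    "automobile": [0.8, 0.2, 0.0],
    "banana": [0.0, 0.1, 0.9],
}

print(cosine_similarity(embeddings["car"], embeddings["automobile"]))  # close to 1
print(cosine_similarity(embeddings["car"], embeddings["banana"]))      # close to 0
```

Exact n-gram matching treats "car" and "automobile" as unrelated tokens, whereas a vector representation places them close together.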

“The meaning of a word is not fixed, it is dynamic and evolves over time, and word embeddings are a powerful tool for capturing that dynamic relationship.”

Named Entity Recognition (NER)

Named entity recognition (NER) is a technique used to identify and disambiguate named entities in the translation. By identifying and labeling named entities, NER can improve the accuracy of translation and reduce the reliance on out-of-vocabulary words.

For example, in the sentence “John Smith is a software engineer at Google”, the NER model would identify “John Smith” as a person, “software engineer” as a profession (in label schemes that include one), and “Google” as an organization. This allows the model to accurately convey the meaning of the sentence and to reduce the reliance on out-of-vocabulary words.

Sentiment Analysis

Sentiment analysis is a technique used to capture the tone and sentiment of the original text and to ensure that it is accurately conveyed in the translated text. By analyzing the sentiment of the text, sentiment analysis can help to improve the coherence and consistency of the translation.

For example, in the sentence “I love my new car!”, the sentiment analysis model would identify the sentiment as positive, indicating a high level of enthusiasm and excitement. The model would then use this information to translate the sentence in a way that conveys the intended tone and sentiment.

Future Directions of BLEU Research

As we continue to advance in the field of machine translation, it is essential to explore the potential future directions of BLEU research. This will enable us to improve the accuracy and effectiveness of machine translation systems. BLEU has been a pioneering evaluation metric in the field, and its continued development is crucial for pushing the boundaries of machine translation.

Integration with Other Evaluation Metrics

BLEU has been primarily used as a standalone evaluation metric for machine translation. However, recent research has shown that combining BLEU with other evaluation metrics can provide a more comprehensive and accurate assessment of machine translation systems. For instance, combining BLEU with metrics such as ROUGE (Recall-Oriented Understudy for Gisting Evaluation) and METEOR (Metric for Evaluation of Translation with Explicit ORdering) can provide a more nuanced evaluation of machine translation systems.

- The integration of BLEU with other evaluation metrics can provide a more comprehensive and accurate assessment of machine translation systems.

- Combining BLEU with metrics such as ROUGE and METEOR can provide a more nuanced evaluation of machine translation systems.

- This approach can help identify the strengths and weaknesses of machine translation systems and provide a more accurate evaluation of their performance.

Domain Adaptation and Transfer Learning

Domain adaptation and transfer learning are emerging areas of research in machine translation that can significantly improve the BLEU scores that systems achieve. Domain adaptation involves adapting a machine translation system to a new domain or task, while transfer learning involves leveraging the knowledge gained from one task or domain to improve the performance on another task or domain. By leveraging domain adaptation and transfer learning, researchers can raise BLEU scores and enable machine translation systems to perform better on low-resource languages.

“Domain adaptation and transfer learning can significantly improve the performance of BLEU scores by enabling machine translation systems to adapt to new domains and leverage knowledge from other tasks or domains.”

Human-Computer Interaction and Human-Machine Translation

The increasing use of machine translation in human-computer interaction and human-machine translation presents new challenges and opportunities for BLEU research. Human-machine translation requires a more nuanced evaluation metric that can capture the complexities of human-computer interaction. By developing BLEU-based metrics that can capture the subtleties of human-computer interaction, researchers can improve the performance of machine translation systems and enhance the user experience.

- The increasing use of machine translation in human-computer interaction and human-machine translation presents new challenges and opportunities for BLEU research.

- A more nuanced evaluation metric is required to capture the complexities of human-computer interaction.

- Developing BLEU-based metrics that can capture the subtleties of human-computer interaction can improve the performance of machine translation systems and enhance the user experience.

Conclusion

BLEU has revolutionized the field of machine translation by providing a reliable and objective method for evaluating translation quality. However, it is not without its limitations, and researchers are continually working to improve and refine the method to better capture the nuances of human language.

FAQ Summary

What is the BLEU method?

The BLEU method is a standard evaluation metric for machine translation systems, which compares the output of a machine translation system to a human translation.

How is the BLEU score calculated?

The BLEU score is calculated by taking the geometric mean of the n-gram precisions of the machine translation output against the human translation and multiplying it by a brevity penalty based on the ratio of their lengths.

What are the limitations of the BLEU method?

The BLEU method has limitations, including its reliance on n-gram precision and its neglect of syntax and semantics. It can also be misled by out-of-vocabulary words, punctuation, and special characters.