Automatic differentiation in machine learning: a survey. Automatic differentiation (AD) is the mechanism behind gradient-based optimization in modern machine learning. With the ever-growing demand for AI solutions in robotics, computer vision, and game development, understanding AD has become a fundamental requirement for machine learning engineers. This survey covers the role of AD in gradient-based optimization, the main methods for computing gradients, and the software libraries that implement them.

Gradient-based optimization is the driving force behind most machine learning algorithms: it is how models learn from data and improve over time. Calculating gradients by hand or by naive numerical methods, however, is expensive and error-prone. Automatic differentiation addresses this by computing gradients mechanically from the program that defines the model, which makes it possible to optimize machine learning models efficiently and effectively.

Introduction to Automatic Differentiation in Machine Learning

Automatic differentiation (AD) has become an essential part of modern machine learning (ML) because it computes gradients efficiently. Machine learning models are trained with optimization algorithms that rely on the gradient of a loss function with respect to the model parameters. This computation is fundamental to many ML tasks, including supervised learning, where the goal is to minimize the loss between predicted and true outputs.

Machine learning tasks often involve complex models, and deriving their gradients by hand is time-consuming and error-prone. Methods for computing gradients fall into three broad groups: Symbolic Computation, Numerical Computation, and Automatic Differentiation.

Symbolic Computation

Symbolic computation methods derive derivatives by manipulating mathematical expressions algebraically. This can be done with computer algebra systems (CAS) such as Mathematica or SymPy, which take an expression and return an expression for its derivative. However, the derivative expression can grow much faster than the original (a problem known as expression swell), so the approach becomes inefficient for large models and is a poor fit for real-time applications.

- Advantages: symbolic computation provides exact derivatives in closed form

- Disadvantages: computationally expensive and inefficient for large models

Numerical Computation

Numerical computation methods approximate derivatives using finite differences. The approach perturbs an input by a small step and measures the resulting change in the output. The derivative is then approximated as the ratio of the output change to the input change.

Numerical differentiation is popular because it is easy to implement and treats the function as a black box. However, it has two main limitations. First, it becomes computationally expensive for large models, because it needs at least one extra function evaluation per input dimension. Second, it is sensitive to the choice of step size: a step that is too large gives truncation error, and a step that is too small gives round-off error.

- Advantages: simple to implement, and cheap when there are few inputs

- Disadvantages: expensive for many inputs, approximate, and sensitive to step size
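The finite-difference approach described above can be sketched in a few lines of plain Python. This is only an illustration: the function names (`forward_difference`, `central_difference`, `numerical_gradient`) and the step sizes are assumptions chosen for the example, not part of any library.

```python
import math

# Illustrative sketch; all names here are hypothetical helpers.

def forward_difference(f, x, h):
    """One-sided estimate (f(x + h) - f(x)) / h; truncation error is O(h)."""
    return (f(x + h) - f(x)) / h

def central_difference(f, x, h):
    """Two-sided estimate (f(x + h) - f(x - h)) / (2h); truncation error is O(h^2)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

def numerical_gradient(f, xs, h=1e-6):
    """Gradient of f(list) -> float, perturbing one coordinate at a time.

    Costs 2 * len(xs) evaluations of f, which is why the method scales
    poorly with the number of inputs.
    """
    grad = []
    for i in range(len(xs)):
        up = list(xs)
        up[i] += h
        down = list(xs)
        down[i] -= h
        grad.append((f(up) - f(down)) / (2.0 * h))
    return grad

# Step-size sensitivity: compare against the exact derivative cos(1).
exact = math.cos(1.0)
for h in (1e-1, 1e-5, 1e-13):
    err = abs(central_difference(math.sin, 1.0, h) - exact)
    print(f"h={h:g}  error={err:.3e}")
```

Running the loop shows the error first shrinking as the step gets smaller and then growing again once round-off dominates.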

Automatic Differentiation

Automatic differentiation (AD) computes the derivative of a function directly from the source code that evaluates it, by applying the chain rule to each elementary operation. It is more accurate than numerical differentiation, since it incurs no truncation error, and it handles complex models with ease.

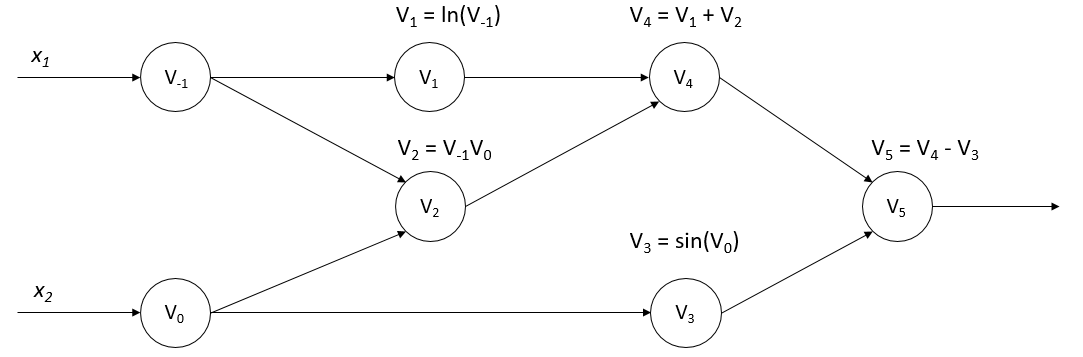

A model evaluation under AD has a forward pass and, in reverse mode, a backward pass. The forward pass feeds input values through the model to compute the output. The backward pass computes the derivatives of the output with respect to the inputs. AD algorithms work by traversing the computational graph of the model, identifying each operation, and applying its derivative rule on the fly.

Automatic differentiation has become a popular choice in machine learning because of its speed, accuracy, and ease of use. Many deep learning frameworks, including TensorFlow and PyTorch, provide built-in support for automatic differentiation.

- Advantages: fast, accurate, and easy to use

- Disadvantages: can be complex to implement for certain models

AD algorithms fall into two main categories: forward-mode AD and reverse-mode AD.

In forward-mode AD, the algorithm traverses the computational graph from input to output, computing the derivative of each operation along the way.

In reverse-mode AD, the algorithm traverses the computational graph from output to input, computing the derivative of each operation in reverse order.

Automatic differentiation has numerous applications in machine learning, including neural network training, reinforcement learning, and optimization. It has become an essential tool for modern machine learning and is widely used in domains such as image recognition, natural language processing, and speech recognition.

Types of Automatic Differentiation

Training and improving machine learning models depends on computing derivatives of functions. Automatic differentiation (AD) lets researchers and practitioners compute these derivatives efficiently, without tedious manual calculation or heavy reliance on symbolic differentiation. This section covers the two main types of AD: forward mode and reverse mode. Both compute derivatives of complex functions by applying the chain rule, a fundamental result in calculus.

Forward Mode Automatic Differentiation

Forward mode AD, also known as forward accumulation, computes the derivative of a function while traversing its computational graph in the same direction as the function evaluation. It evaluates the function and its derivatives simultaneously, starting from the leaf nodes (inputs) and moving towards the root node (output). Each forward pass yields derivatives with respect to one chosen input, which makes the technique attractive for gradient-based methods when the number of inputs is small.

Forward mode AD has several advantages:

- It is particularly useful when the derivative of the output with respect to a single input is required, as in scalar optimization problems.

- It can be implemented efficiently, with only a small amount of additional memory and computation per operation.

- It can handle a wide range of mathematical operations, including vector and matrix operations.

However, forward mode AD also has some limitations:

- It becomes less efficient for functions with a large number of inputs, because each input requires its own forward pass.

- It is not well-suited to computing the derivative of a function with respect to many inputs simultaneously.
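One common way to realize forward mode is with dual numbers, where every value carries its derivative alongside it. The following is a minimal sketch under that assumption; the `Dual` class and the helpers `sin`, `derivative`, and `gradient` are hypothetical names for this example and support only addition, multiplication, and sine.

```python
import math

# Illustrative sketch; Dual, sin, derivative and gradient are hypothetical names.

class Dual:
    """A value together with its derivative with respect to one chosen input."""

    def __init__(self, value, deriv=0.0):
        self.value = value
        self.deriv = deriv

    @staticmethod
    def lift(x):
        # Treat plain numbers as constants (derivative 0).
        return x if isinstance(x, Dual) else Dual(float(x))

    def __add__(self, other):
        other = Dual.lift(other)
        return Dual(self.value + other.value, self.deriv + other.deriv)

    __radd__ = __add__

    def __mul__(self, other):
        other = Dual.lift(other)
        # Product rule: (uv)' = u'v + uv'
        return Dual(self.value * other.value,
                    self.deriv * other.value + self.value * other.deriv)

    __rmul__ = __mul__

def sin(x):
    x = Dual.lift(x)
    return Dual(math.sin(x.value), math.cos(x.value) * x.deriv)

def derivative(f, x):
    """df/dx at x from a single forward pass seeded with dx/dx = 1."""
    return f(Dual(x, 1.0)).deriv

def gradient(f, xs):
    """Gradient of f(x_1, ..., x_n): one forward pass per input."""
    grads = []
    for i in range(len(xs)):
        args = [Dual(x, 1.0 if j == i else 0.0) for j, x in enumerate(xs)]
        grads.append(f(*args).deriv)
    return grads

print(derivative(lambda x: x * x + 3 * x, 2.0))
print(gradient(lambda x, y: x * y + sin(x), [1.0, 2.0]))
```

The loop in `gradient` makes the limitation listed above concrete: a function of n inputs needs n separate forward passes.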

Reverse Mode Automatic Differentiation

Unlike forward mode AD, reverse mode AD (also known as reverse accumulation) computes the derivative of a function by traversing its computational graph in the reverse direction. It starts from the root node (output) and moves towards the leaf nodes (inputs). This approach is preferred when the derivative of the output with respect to many inputs is required, as in vectorized optimization problems.

Reverse mode AD has several advantages:

- It is particularly useful when the derivative of the output with respect to many inputs is required.

- It can handle complex functions that involve multiple inputs and outputs.

- It can be used in conjunction with forward mode AD to compute derivatives more efficiently.

Reverse mode AD relies on a procedure called adjoint sensitivity analysis.

Adjoint Sensitivity Analysis

Adjoint sensitivity analysis is the technique reverse mode AD uses to compute the derivative of the output with respect to the inputs. Each variable in the computation is assigned an adjoint variable, which holds the derivative of the output with respect to that variable. The adjoints are propagated backwards from the output, and the adjoints of the inputs are the derivatives being sought.

The adjoint variable is defined as follows:

x̄ = ∂y/∂x (adjoint variable)

In this equation, y = f(x), where f represents the function being differentiated, y represents the output of the function, and x represents the input to the function. The adjoint x̄ holds the derivative of the output with respect to the input.
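To make the role of adjoint variables concrete, here is a minimal reverse mode sketch in plain Python. It is an illustration under simplifying assumptions (scalars only, three operations), and the names `Var`, `sin`, and `backward` are hypothetical, not any framework's API.

```python
import math

# Illustrative sketch; Var, sin and backward are hypothetical names.

class Var:
    """Node in a computation graph that records how it was produced."""

    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents   # tuples of (parent_node, local_derivative)
        self.adjoint = 0.0       # will hold d(output)/d(this node)

    @staticmethod
    def lift(x):
        return x if isinstance(x, Var) else Var(float(x))

    def __add__(self, other):
        other = Var.lift(other)
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    __radd__ = __add__

    def __mul__(self, other):
        other = Var.lift(other)
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

    __rmul__ = __mul__

def sin(x):
    x = Var.lift(x)
    return Var(math.sin(x.value), ((x, math.cos(x.value)),))

def backward(output):
    """Fill in .adjoint for every node that output depends on."""
    order, seen = [], set()

    def visit(node):                  # depth-first topological sort
        if id(node) not in seen:
            seen.add(id(node))
            for parent, _ in node.parents:
                visit(parent)
            order.append(node)

    visit(output)
    for node in order:
        node.adjoint = 0.0
    output.adjoint = 1.0              # seed: dy/dy = 1
    for node in reversed(order):      # output first, inputs last
        for parent, local in node.parents:
            # Chain rule: accumulate this node's contribution to its parent.
            parent.adjoint += node.adjoint * local

x, y = Var(1.0), Var(2.0)
z = x * y + sin(x)
backward(z)
print(x.adjoint, y.adjoint)   # dz/dx = y + cos(x), dz/dy = x
```

A single call to `backward` fills in the adjoints of both inputs, which is the property that makes reverse mode attractive when there are many inputs and one output.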

Comparison of Forward and Reverse Mode AD

Forward and reverse mode AD are two distinct approaches to computing the derivative of a function. For a function with n inputs and m outputs, forward mode needs one pass per input (n passes for the full Jacobian), while reverse mode needs one pass per output (m passes).

The choice therefore depends on the problem being solved. If the derivative with respect to a single input, or a few inputs, is needed, forward mode AD is a good choice. If the derivative with respect to many inputs is needed, as when training a model with many parameters against a scalar loss, reverse mode AD is the better option, at the cost of storing intermediate values from the forward pass.

AD Implementation Techniques

Automatic differentiation has become a core component of modern machine learning frameworks, enabling efficient and accurate computation of gradients. Various software libraries and frameworks have adopted it to simplify the development of deep learning models. This section explores the design of autodiff-enabled deep learning frameworks, discusses software libraries that use automatic differentiation, and describes techniques for optimizing its computation.

Software Libraries Using Automatic Differentiation

Several well-known software libraries and frameworks have integrated automatic differentiation to simplify the development of deep learning models. These libraries let developers focus on model architecture and loss functions rather than on gradient computation.

- TensorFlow: TensorFlow is a popular open-source machine learning framework developed by Google. It uses automatic differentiation to compute gradients efficiently and is widely used in industry.

- PyTorch: PyTorch is an open-source machine learning framework developed by Facebook (now Meta). It uses automatic differentiation to compute gradients and builds its computation graph dynamically, which makes complex models easier to implement.

- Theano: Theano is an open-source Python library for defining and evaluating complex mathematical expressions, particularly well-suited to deep learning. It uses automatic differentiation to compute gradients.

Each of these libraries has its own strengths and weaknesses, and developers often choose the one that best fits their project’s requirements.

Design of Autodiff-enabled Deep Learning Frameworks

Autodiff-enabled deep learning frameworks typically combine several techniques to make automatic differentiation convenient and efficient. These include:

- Operator Overloading: Many frameworks overload arithmetic operators such as +, -, *, and / so that ordinary expressions on their tensor types are recorded for differentiation. This lets users define complex mathematical expressions using standard syntax.

- Dynamic Computation Graph: Frameworks such as PyTorch, and TensorFlow in eager mode, build the computation graph dynamically as the program executes. This allows models to use ordinary control flow while still supporting efficient gradient computation.

- Automatic Graph Construction: Some frameworks automatically construct the computation graph from the user-defined model architecture and loss function. This simplifies development and eliminates the need for manual graph construction.

The design of autodiff-enabled deep learning frameworks aims to strike a balance between ease of use, computational efficiency, and flexibility.

Optimizing Automatic Differentiation Computation

To optimize automatic differentiation computation, developers can employ several techniques:

- Fusion of Operations: Combining multiple operations into a single computation reduces the number of intermediate results and improves performance.

- In-memory computation: Keeping intermediate results in fast memory close to the processor, rather than repeatedly moving them between host and device, avoids data-transfer overhead.

- Graph Pruning: Removing unnecessary nodes and edges from the computation graph reduces computational overhead and improves performance.

These techniques help optimize automatic differentiation computation, leading to faster model training and inference.

Common Pitfalls When Implementing AD

Developers should be aware of the following common pitfalls when implementing automatic differentiation:

- Incorrect use of operator overloading: Misusing operator overloading can lead to incorrectly computed gradients or crashes.

- Unnecessary computation: Failing to optimize the computation graph, or not fusing operations, can result in inefficient gradient computation.

- Insufficient error checking: Skipping error checks can lead to incorrect results or crashes during model training or inference.

By understanding these common pitfalls, developers can avoid potential issues and build robust and efficient automatic differentiation implementations.

Gradient Computation Techniques

Gradient computation is an essential component of machine learning: the gradient gives the direction and magnitude of steepest change on the error surface, which is what allows complex models to be optimized. This section explores the methods used to compute gradients, with a focus on direct differentiation rules, adjoint sensitivity analysis, and adjoint-based gradient computation in reverse mode AD.

Direct Differentiation Rules

Direct differentiation rules are a fundamental concept in automatic differentiation. They compute gradients by applying mathematical rules and identities, giving a systematic approach based on the composition of functions and the properties of derivatives.

∂(f(g(x)))/∂x = (∂f/∂g) * (∂g/∂x)

This is the chain rule for differentiation: the derivative of a composite function is the product of the derivatives of its individual components.
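As a small worked check of the rule above, the sketch below picks f(u) = sin(u) and g(x) = x², both chosen only for illustration, and compares the chain rule result with a finite-difference estimate.

```python
import math

# Illustrative sketch; f, g and the evaluation point are arbitrary choices.

def g(x):        # inner function
    return x * x

def dg(x):       # dg/dx
    return 2.0 * x

def f(u):        # outer function
    return math.sin(u)

def df(u):       # df/du
    return math.cos(u)

def chain_rule_derivative(x):
    """d/dx f(g(x)) = df/dg evaluated at g(x), times dg/dx evaluated at x."""
    return df(g(x)) * dg(x)

x0 = 1.5
h = 1e-6
numeric = (f(g(x0 + h)) - f(g(x0 - h))) / (2.0 * h)
print(chain_rule_derivative(x0), numeric)
```

The two printed values should agree to several decimal places; the chain rule value is exact up to floating-point rounding, while the numeric one carries finite-difference error.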

Adjoint Sensitivity Analysis

Adjoint sensitivity analysis is a mathematical framework for analyzing how sensitive a system is to changes in its inputs. It is particularly useful in automatic differentiation, where it yields gradients through the introduction of adjoint variables.

Adjoint sensitivity analysis is based on the following equation:

δF/δu = ∫[∂F/∂u]dt

where δF/δu represents the variation of the objective function F with respect to the input u, and ∂F/∂u is the derivative of F with respect to u.

Adjoint-Based Gradient Computation

Adjoint-based gradient computation is a method for computing gradients using adjoint variables. It is particularly efficient in reverse mode AD, where gradients are obtained by following the computation flow backwards.

In adjoint-based gradient computation, the variation of the objective function F with respect to the input u is assembled from the contribution of each input variable:

δF = ∑_i (∂F/∂u_i) δu_i

where ∂F/∂u_i represents the derivative of F with respect to the ith input variable u_i.

Efficient Gradient Calculation

Efficient gradient calculation is a critical aspect of machine learning, since it affects the convergence speed and accuracy of optimization algorithms. Several techniques have been proposed to make gradient calculation cheaper, including:

- Approximate gradient calculation: replacing the gradient of the objective function F with respect to the input u with a simpler expression, such as a linear or quadratic approximation.

- Gradient compression: compressing the gradient update vector to reduce communication overhead in distributed optimization settings.

- Quantization: representing the gradient update vector with fewer bits to reduce memory requirements.

These techniques can be used in conjunction with adjoint-based gradient computation to improve the efficiency of gradient calculation.
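The compression and quantization ideas listed above can be sketched as follows. Top-k sparsification and uniform symmetric quantization are used here as two simple, assumed examples of each technique; the function names are hypothetical and the schemes are not taken from any particular system.

```python
# Illustrative sketch; all function names are hypothetical.

def top_k_sparsify(grad, k):
    """Compression: keep the k largest-magnitude entries as (indices, values)."""
    idx = sorted(range(len(grad)), key=lambda i: abs(grad[i]), reverse=True)[:k]
    idx.sort()
    return idx, [grad[i] for i in idx]

def densify(indices, values, n):
    """Rebuild a length-n vector, filling dropped entries with zero."""
    out = [0.0] * n
    for i, v in zip(indices, values):
        out[i] = v
    return out

def quantize(grad, bits=8):
    """Uniform symmetric quantization to signed integers of the given width."""
    levels = 2 ** (bits - 1) - 1              # 127 for 8 bits
    scale = max(abs(g) for g in grad) / levels
    if scale == 0.0:
        return [0] * len(grad), 1.0
    return [round(g / scale) for g in grad], scale

def dequantize(codes, scale):
    return [c * scale for c in codes]

grad = [0.5, -2.0, 0.01, 1.0]
indices, values = top_k_sparsify(grad, k=2)
codes, scale = quantize(grad)
print(densify(indices, values, len(grad)))
print(dequantize(codes, scale))
```

Both schemes are lossy: sparsification drops small entries entirely, while quantization perturbs every entry by at most half a quantization step.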

Implementation of Adjoint Algorithms

The implementation of adjoint algorithms affects both the accuracy and the efficiency of gradient computation. Several computational tools implement them, including:

- TensorFlow: an open-source machine learning framework developed by Google, which provides reverse mode automatic differentiation as part of its core API.

- Singa: an open-source deep learning framework, now an Apache project, that originated at the National University of Singapore and provides tools for distributed training of deep learning models.

- Autograd: an open-source automatic differentiation library for Python and NumPy code, originally developed at Harvard University.

These tools provide a range of features for implementing adjoint algorithms, including automatic differentiation, gradient computation, and optimization.

Computational Tools for High-Dimensional Data

High-dimensional data poses significant challenges in machine learning because of its complex structure and computational requirements. Specialized libraries and efficient algorithms are essential for handling such data and for building accurate, robust models. This section explores the computational tools and strategies that support the analysis of high-dimensional data.

Specialized Libraries for Numerical Computation and Optimization

Several libraries address the specific needs of high-dimensional data. They provide optimized implementations of numerical computation and optimization algorithms that run efficiently on parallel architectures. Some notable examples include:

- TensorFlow and PyTorch: Two popular deep learning frameworks that provide an extensive range of numerical computation and optimization tools. Their implementations of automatic differentiation, in particular, apply directly to high-dimensional inputs and parameters.

- Scikit-learn: A widely used machine learning library whose estimators rely on various optimization algorithms, including stochastic gradient descent and conjugate gradient. Its optimization routines are tuned for performance and memory efficiency.

- SciPy: A scientific computing library that provides a range of optimization algorithms, including linear and nonlinear least squares. Its optimization tools are designed to handle high-dimensional data and are often used in conjunction with other libraries.

Efficient Gradient Approximation Strategies

Because optimization algorithms are sensitive to the quality of gradients, efficient gradient approximation is crucial in high-dimensional data analysis. Several strategies can be used:

- Stochastic Gradient Descent (SGD): A popular optimization algorithm that estimates the gradient from a random subset of the data. SGD is particularly effective on large, high-dimensional problems because each update is cheap.

- Batch Gradient Descent: A classic optimization algorithm that computes the gradient over the whole dataset at every step. While less efficient than SGD on large datasets, batch gradient descent can be effective for smaller datasets.

- Quasi-Newton Methods: Quasi-Newton methods, such as the Broyden-Fletcher-Goldfarb-Shanno (BFGS) algorithm, approximate the Hessian matrix from successive gradient evaluations, and the limited-memory variant (L-BFGS) keeps only a short history of them. This can significantly reduce computational overhead while maintaining convergence speed.

Application of Quasi-Newton Methods

Quasi-Newton methods have been widely adopted in machine learning because they balance convergence speed against computational overhead. They are particularly useful in high-dimensional problems, where the number of model parameters makes exact second-order methods prohibitively expensive.

The BFGS algorithm, a quasi-Newton method, can outperform the classical Newton’s method on high-dimensional optimization tasks. This is because BFGS updates its Hessian approximation from gradient information at each step, which captures curvature without forming or inverting the exact Hessian.
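The core quasi-Newton idea is easiest to see in one dimension, where the secant condition that BFGS imposes determines the curvature estimate uniquely and the iteration becomes the secant method applied to the gradient. The sketch below shows that one-dimensional analogue only; it is not a BFGS implementation, it omits the line search that practical methods use, and `secant_minimize` is a hypothetical name.

```python
# Illustrative sketch of the 1-D analogue of a quasi-Newton method.

def secant_minimize(grad, x0, x1, tol=1e-10, max_iter=50):
    """Find a stationary point of a 1-D function using only its gradient.

    The curvature estimate b plays the role of the Hessian approximation:
    it satisfies the secant condition b * (x1 - x0) = g1 - g0.
    """
    g0, g1 = grad(x0), grad(x1)
    for _ in range(max_iter):
        if abs(g1) < tol:
            break
        b = (g1 - g0) / (x1 - x0)    # approximate second derivative
        x0, g0 = x1, g1
        x1 = x1 - g1 / b             # Newton-like step using b instead of f''
        g1 = grad(x1)
    return x1

# Minimize f(x) = x**4 / 4 - x, whose gradient is x**3 - 1 (minimum at x = 1).
x_star = secant_minimize(lambda x: x ** 3 - 1.0, x0=2.0, x1=1.5)
print(x_star)
```

No second derivative is ever evaluated: the curvature is inferred from how the gradient changed between the last two iterates, which is the same principle BFGS applies to the full Hessian.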

Numerical Libraries for High-Dimensional Data

In addition to the libraries mentioned earlier, several other numerical libraries can handle high-dimensional data:

- NumPy: A fundamental library for numerical computing in Python, providing efficient implementations of array operations and linear algebra functions.

- PyCUDA: A library that provides a Python interface to the CUDA GPU architecture, enabling parallel numerical computation and accelerated performance.

These libraries are optimized for performance and memory efficiency, which makes them well suited to high-dimensional data analysis.

Optimization of Automatic Differentiation Computation

Automatic differentiation (AD) is a powerful tool for computing gradients in machine learning, but its computational cost can be significant, especially for high-dimensional or complex models. To mitigate this, researchers and practitioners have developed various strategies for optimizing AD computations, which are discussed below.

Parallelization of AD on Distributed Environments

Parallelizing AD computations across a distributed environment can significantly speed up gradient computation. This can be achieved through several strategies:

- Model parallelism: dividing the model into smaller sub-modules and computing their gradients in parallel.

- Data parallelism: dividing the training data into smaller batches and computing their gradients in parallel.

- Distributed memory parallelism: using multiple machines to store and compute gradients in parallel.

- Synchronized parallelism: using synchronization primitives to ensure that all processors finish computing gradients before the model is updated.

Each of these strategies has its strengths and weaknesses, and the right choice depends on the specific use case and the available computational resources.

Model parallelism, for example, is particularly suitable for large models that cannot fit in the memory of a single processor. By dividing the model into smaller sub-modules, each processor computes the gradients of its local sub-module, which can significantly speed up the overall computation. However, this approach requires careful synchronization to ensure that the gradients from each sub-module are correctly combined into the overall gradient.
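As a minimal sketch of the data parallelism strategy listed above, the example below simulates workers sequentially in a single process. It assumes equal-sized shards and a one-parameter model y = w * x; `grad_mse` and `data_parallel_grad` are hypothetical names, and a real system would run each shard on its own device and combine results with a collective operation.

```python
# Illustrative sketch; workers are simulated sequentially in one process.

def grad_mse(w, batch):
    """Gradient of mean squared error for the model y = w * x."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)

def data_parallel_grad(w, batch, num_workers):
    """Split the batch into equal shards, compute one gradient per shard,
    then average the per-shard gradients."""
    shard_size = len(batch) // num_workers
    if shard_size * num_workers != len(batch):
        raise ValueError("this sketch assumes equal-sized shards")
    shards = [batch[i * shard_size:(i + 1) * shard_size]
              for i in range(num_workers)]
    local_grads = [grad_mse(w, shard) for shard in shards]   # parallel in practice
    return sum(local_grads) / num_workers                    # averaging step

data = [(float(x), 3.0 * x + 1.0) for x in range(8)]
full = grad_mse(0.5, data)
sharded = data_parallel_grad(0.5, data, num_workers=4)
print(full, sharded)
```

With equal-sized shards, the average of the shard gradients equals the gradient over the whole batch, which is why synchronous data parallelism leaves the optimization result unchanged.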

Reducing Gradient Computations in Sequential Settings

In many machine learning applications, gradients must be computed sequentially, because of either memory constraints or limited computational resources. In such cases, reducing the cost of each gradient computation is useful. Several strategies can be employed:

- Checkpointing: storing only selected intermediate results of the forward pass and recomputing the others during the backward pass, which trades extra computation for lower memory use.

- Pipelining: splitting the computation of gradients into smaller, independent tasks that can be executed in parallel.

- Gradient approximation: approximating the gradients with methods such as stochastic gradient descent (SGD), which only requires gradients for a subset of the training data.

Each of these strategies has its own trade-offs, and its suitability depends on the specific use case.

Checkpointing, for example, is particularly effective when memory, rather than computation, is the limiting factor. By storing the model’s state only at intermediate points, the backward pass can restart from the nearest checkpoint without keeping every intermediate result of the forward pass.
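The checkpointing trade-off can be illustrated on a chain of identical scalar layers. The sketch below is an assumption-laden toy: the layer function, the evenly spaced checkpoint schedule, and the names `grad_store_all` and `grad_checkpointed` are all chosen for the example, and real frameworks use more elaborate schedules.

```python
import math

# Illustrative sketch; layer, layer_grad and both gradient helpers are hypothetical.

def layer(x):
    return math.tanh(x) + 0.5 * x

def layer_grad(x):
    """d layer / dx; needs the layer's input, hence the stored activations."""
    return 1.0 - math.tanh(x) ** 2 + 0.5

def grad_store_all(x0, depth):
    """Baseline: keep every activation, so memory grows with depth."""
    acts = [x0]
    for _ in range(depth):
        acts.append(layer(acts[-1]))
    g = 1.0
    for i in reversed(range(depth)):
        g *= layer_grad(acts[i])
    return g

def grad_checkpointed(x0, depth, every):
    """Keep only every `every`-th activation; recompute the rest on demand."""
    checkpoints = {0: x0}
    x = x0
    for i in range(1, depth + 1):
        x = layer(x)
        if i % every == 0 and i < depth:
            checkpoints[i] = x
    g = 1.0
    end = depth
    for start in sorted(checkpoints, reverse=True):
        # Recompute the inputs of layers start .. end-1 from the checkpoint.
        segment = [checkpoints[start]]
        for _ in range(end - start - 1):
            segment.append(layer(segment[-1]))
        for x_in in reversed(segment):
            g *= layer_grad(x_in)
        end = start
    return g

print(grad_store_all(0.3, 10), grad_checkpointed(0.3, 10, every=4))
```

Both functions return the same derivative of the final output with respect to `x0`; the checkpointed version holds only the checkpoints plus one segment of activations at a time, at the price of running each layer roughly twice.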

Gradient Approximation Methods

Gradient approximation methods estimate the gradients of a model without computing them exactly. This is useful when exact gradients are expensive or when the model’s gradients are not well-defined. Several gradient approximation methods are available:

- Stochastic gradient descent (SGD): approximating the gradient with that of a single data point instead of the entire training dataset.

- Mini-batch gradient descent: approximating the gradient with that of a small batch of data points instead of the entire training dataset.

- Gradient quantization: approximating gradients by quantizing their values to fewer bits or to integers.

- Monte Carlo gradient approximation: estimating the gradient from random samples of the data or the model.

Each of these methods has its strengths and weaknesses, and the right choice depends on the specific use case.

SGD, for example, is particularly effective when the training set is large. A single-sample gradient is a noisy but unbiased estimate of the full gradient, so each update is cheap, and averaging over a mini-batch reduces the variance when the noise harms training stability.
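The difference between single-sample, mini-batch, and full-batch gradients can be seen on a toy regression problem. The sketch below assumes synthetic data from y = 3x plus Gaussian noise and a one-parameter model; the function names, learning rate, and epoch counts are illustrative choices only.

```python
import random

# Illustrative sketch; the data, model and hyperparameters are assumptions.

def make_data(n, true_w=3.0, seed=0):
    rng = random.Random(seed)
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return [(x, true_w * x + rng.gauss(0.0, 0.1)) for x in xs]

def batch_grad(w, batch):
    """Gradient of mean squared error for y = w * x over the given samples."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)

def sgd(data, batch_size, lr=0.1, epochs=20, seed=1):
    """Gradient descent where each step uses only batch_size samples."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(epochs):
        order = list(data)
        rng.shuffle(order)
        for i in range(0, len(order), batch_size):
            w -= lr * batch_grad(w, order[i:i + batch_size])
    return w

data = make_data(200)
w_single = sgd(data, batch_size=1)                      # one sample per step
w_mini = sgd(data, batch_size=16)                       # mini-batch
w_full = sgd(data, batch_size=len(data), epochs=200)    # full batch
print(w_single, w_mini, w_full)
```

All three runs should end near the true slope of 3, but the smaller batch sizes get there with far fewer passes over the data because they take many cheap, noisy steps per epoch.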

Mitigating Numerical Instability in Gradient-Based Optimization

Numerical instability is a common problem in gradient-based optimization: the gradients of the model become too large or too small, leading to divergence or stalled convergence. Several techniques can mitigate it:

- Gradient clipping: limiting the gradients to a fixed range, or rescaling them to a maximum norm, to prevent them from becoming too large.

- Gradient normalization: normalizing the gradients to a fixed scale to improve stability.

- Gradient momentum: adding momentum to the gradient updates to smooth the optimization trajectory.

Each of these techniques has its strengths and weaknesses, and the right choice depends on the specific use case.

Gradient clipping, for example, is particularly effective when the model’s gradients are occasionally very large or have high variance. Clipping bounds the size of each update, which makes the optimization process more stable.
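Two common forms of gradient clipping can be sketched in plain Python as follows. The helper names `clip_by_value` and `clip_by_norm` are chosen for this example and operate on simple lists rather than framework tensors.

```python
import math

# Illustrative sketch; helper names are hypothetical.

def clip_by_value(grads, limit):
    """Clamp each component to the interval [-limit, limit]."""
    return [max(-limit, min(limit, g)) for g in grads]

def clip_by_norm(grads, max_norm):
    """Rescale the whole vector so its L2 norm is at most max_norm.

    Unlike clipping by value, this preserves the gradient's direction.
    """
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]

print(clip_by_value([3.0, -4.0, 0.5], 1.0))
print(clip_by_norm([3.0, 4.0], 1.0))
```

Clipping by value can change the direction of the update because each component is treated independently, whereas clipping by norm only shortens the step.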

Applications of Automatic Differentiation

Automatic differentiation (autodiff) has numerous applications across many fields. It lets researchers and developers compute gradients efficiently, and gradients are the backbone of many machine learning and optimization algorithms.

Vision and Robotics

Autodiff has been instrumental in robotics and computer vision tasks that require precise gradient computation. In these fields, autodiff allows for:

- Detailed analysis of motion dynamics by calculating the derivatives of kinematic models, enabling optimal path planning and motion control.

- Precise tracking of visual features, supporting object recognition and scene understanding.

- Critical analysis of sensor data, leading to better navigation and manipulation in cluttered environments.

- Gradient-based learning of robot policies through model-predictive control, leading to more efficient and agile motion.

“Autodiff simplifies gradient-based learning for robotics, enabling more accurate and efficient motion control.”

Natural Language Processing (NLP)

Autodiff plays a central role in NLP by enabling gradient-based training of complex models for tasks such as language translation, sentiment analysis, and text classification:

- In transformer models such as BERT and RoBERTa, autodiff provides the efficient gradient computation that training depends on.

- In masked language modeling, autodiff supports self-supervised learning, enabling representation learning and the pre-training of language models.

- Autodiff lets NLP models scale to large datasets and architectures, making it an essential component of modern NLP pipelines.

Game Development

Autodiff helps streamline game development by optimizing critical components such as:

- Collision detection and response systems, supporting smooth gameplay and realistic simulations.

- Physics engines, enabling more accurate and efficient simulations of complex physical phenomena.

- Artificial intelligence (AI) decision-making, enabling more realistic agent behaviors and game world interactions.

- Autodiff-based level generation techniques, allowing the creation of more varied and engaging levels.

Additional Industry Applications

Autodiff has also found applications in various other fields, including:

- Scheduling and resource allocation optimization in supply chain management and logistics.

- Financial modeling and risk analysis, allowing more accurate and efficient computation of derivatives and sensitivities.

- Molecular dynamics simulation, enabling more accurate and efficient computation of gradients in force fields.

“Autodiff has become an essential component of many modern applications, enabling efficient and scalable gradient computation and facilitating breakthroughs in diverse fields.”

Final Word

Automatic differentiation is a fundamental technique that has reshaped the field of machine learning. By understanding the importance of gradients in optimization, the various methods used for gradient computation, and the software libraries that make it possible, you will be well on your way to building more efficient and effective machine learning models. AD is not just a technique: it is key to unlocking the full potential of machine learning.

FAQ Resource

What is Automatic Differentiation?

Automatic Differentiation (AD) is a technique for computing gradients of functions, enabling machine learning models to learn from data and improve their performance over time.

What are the benefits of AD in machine learning?

AD simplifies the process of computing gradients, making it possible to optimize machine learning models efficiently and effectively.

What are some common applications of AD in machine learning?

AD is commonly used in robotics, computer vision, and game development, among other applications.

What are some common methods used for gradient computation?

Direct differentiation rules and adjoint sensitivity analysis are two common methods used for gradient computation.