This article looks at effective approaches to attention-based neural machine translation and surveys the main options for efficient language translation. Attention-based neural machine translation (NMT) has emerged as a game-changer in the field of machine translation systems, enabling models to learn to translate between languages with far better accuracy than earlier approaches.

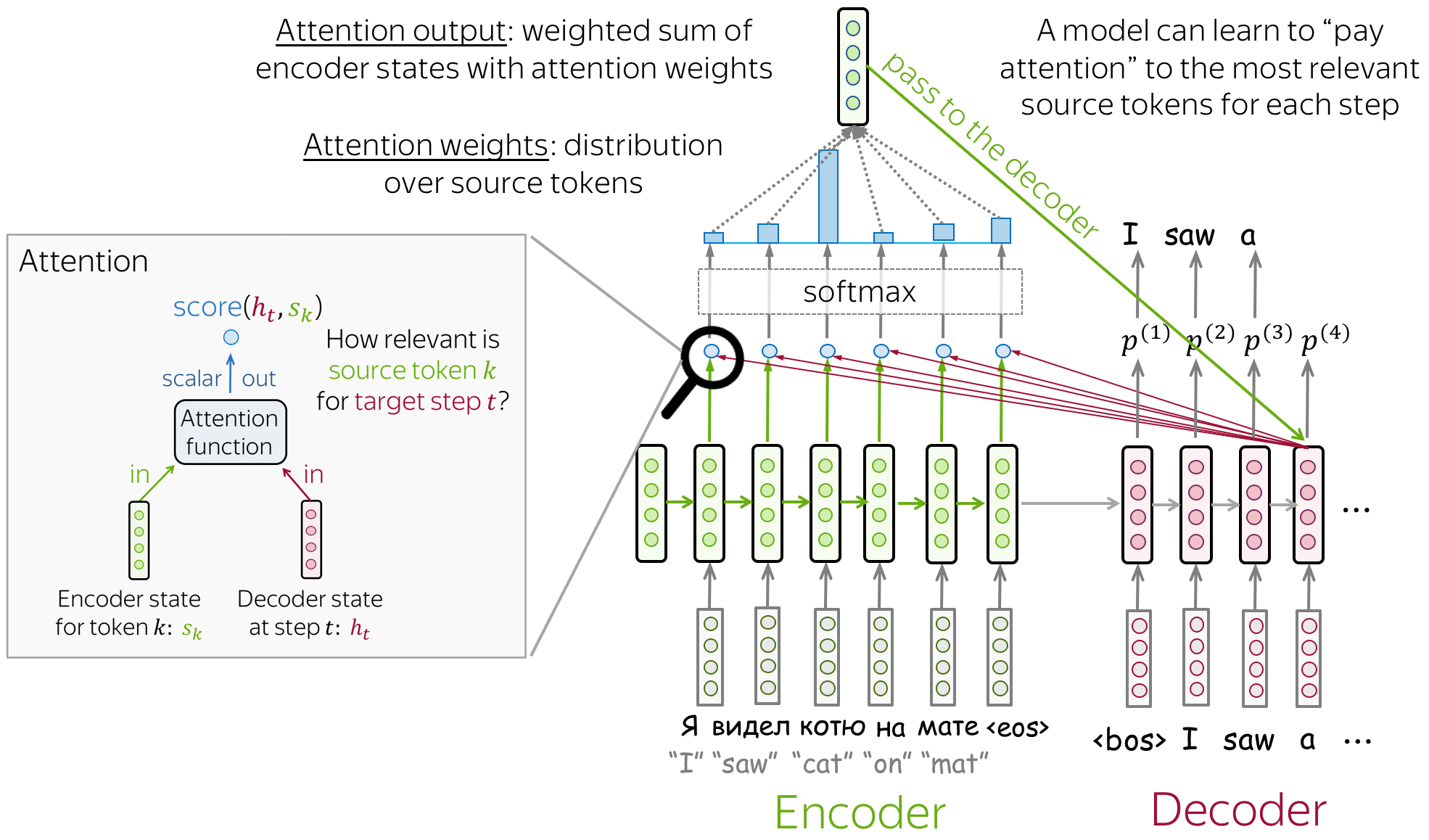

The concept of attention in NMT lets the model focus on specific parts of the input sequence, which improves translation quality and helps the model handle long-range dependencies. The technique has been widely adopted in several areas, including language translation, speech recognition, and text summarization.

Introduction to Attention-based Neural Machine Translation

Blud, you're probably wondering how machine translation systems have evolved over time. It's a wild ride, trust me. From the early days of rule-based translation to today's state-of-the-art neural machine translation (NMT), it has been a journey of ups and downs. And, mate, attention mechanisms have played a huge role in this evolution.

Attention in NMT is like a spotlight shining on the most relevant parts of the input sentence. It helps the model focus on the bits that matter most, rather than treating all words as equal. It's like having a personal assistant that helps you concentrate on the important bits of information. This, mate, is the essence of attention in NMT.

The Role of Attention in NMT

Attention mechanisms let the model weigh the importance of different inputs, giving more attention to the bits that are relevant to the word currently being translated. It's like having a scorecard that tells you what matters most for the translation. This is done with attention weights: one non-negative number per input element, normalized so that they sum to 1, which are then used to form a weighted average of the encoder states (the context vector).
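To make the idea of attention weights concrete, here is a minimal sketch in plain Python. The scores and encoder states are made-up toy values, and the helper names `softmax` and `context_vector` are illustrative rather than taken from any particular library.

```python
import math

def softmax(scores):
    """Turn raw relevance scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def context_vector(weights, encoder_states):
    """Weighted sum of encoder states, one weight per source position."""
    dim = len(encoder_states[0])
    return [sum(w * h[d] for w, h in zip(weights, encoder_states))
            for d in range(dim)]

# Hypothetical toy values: three source tokens, 2-dimensional encoder states.
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
scores = [2.0, 0.5, 0.1]  # higher score = more relevant to the current target word

weights = softmax(scores)
context = context_vector(weights, encoder_states)
print(weights)   # the first token receives the largest weight
print(context)
```

The token with the highest score dominates the context vector, but the other tokens still contribute a little, which is what distinguishes soft attention from simply picking one word.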


Types of Attention Mechanisms

There are several types of attention mechanisms used in NMT, including:

- Global Attention: This type of attention scores every position in the input sentence at each decoding step and builds the context vector from all of them. It's like having a spotlight wide enough to cover the whole input.

- Bahdanau Attention: This type of attention recomputes the attention weights at every decoding step, using a small feedforward network to score each input position against the current decoder state. It's like having a spotlight that moves across the input sentence as the translation is produced.

Advantages of Attention-based NMT

Attention-based NMT has several advantages over encoder-decoder NMT models without attention, including:

- Improved Translation Quality: Attention-based NMT models tend to produce higher-quality translations, especially for complex sentences.

- Handling Long Sentences: Attention-based NMT models handle long sentences more effectively, because the decoder can look back at the most relevant parts of the input instead of relying on a single fixed-length summary of it.

Real-World Applications

Attention-based NMT has several real-world applications, including:

- Machine Translation: Attention-based NMT models can be used for machine translation tasks, such as translating text from one language to another.

- Text Summarization: The same attention-based encoder-decoder models can be used for text summarization, where the model generates a summary of the input text.

Challenges and Future Research Directions

While attention-based NMT has made significant progress in recent years, there are still several challenges to be addressed, including:

- Handling Out-of-Vocabulary Words: Attention-based NMT models struggle with out-of-vocabulary words, which can hurt translation quality.

- Handling Contextual Information: Attention-based NMT models often struggle to capture contextual information, such as idioms and fixed phrases.

Understanding Attention Mechanisms in NMT

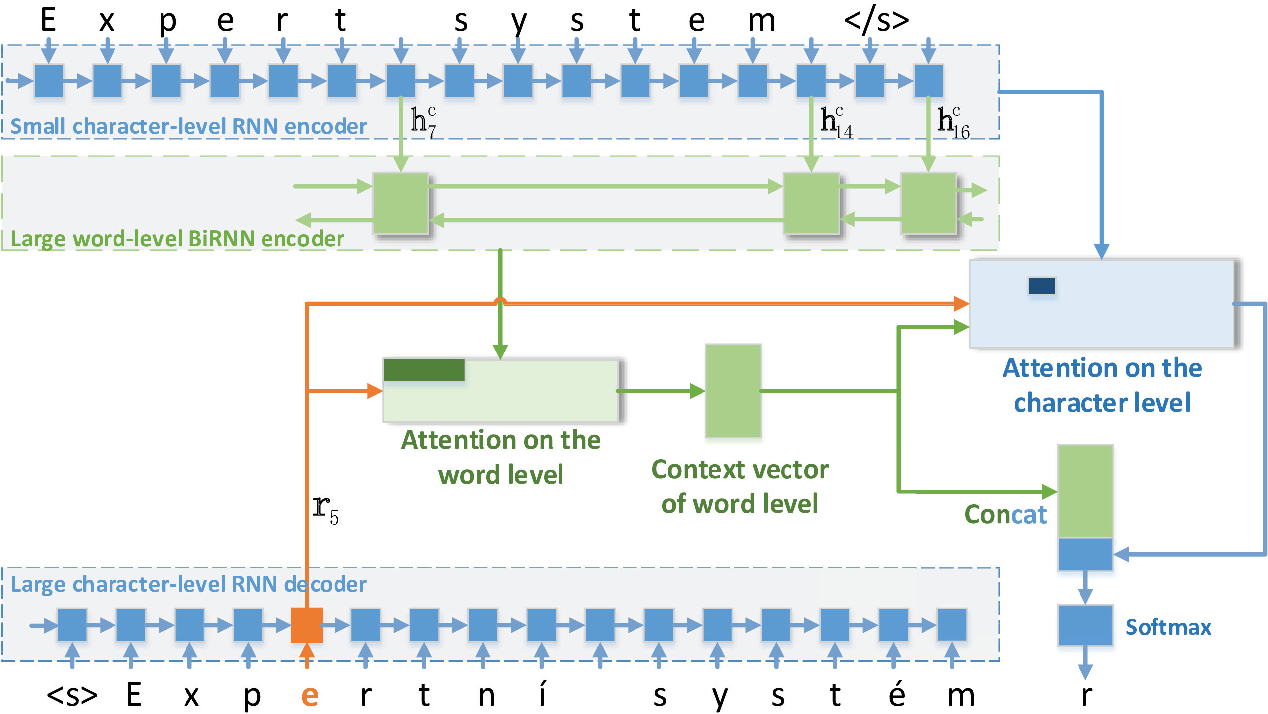

In the realm of Neural Machine Translation (NMT), attention mechanisms have changed the way models process the complexities of language. By allowing the model to focus on specific parts of the input sequence, attention mechanisms have significantly improved the accuracy of NMT systems. In this section, we look at the different types of attention mechanisms used in NMT and compare their strengths and weaknesses.

Bahdanau Attention Mechanism

The Bahdanau attention mechanism is a popular choice in NMT, introduced by Dzmitry Bahdanau and colleagues in 2015. At each decoding step it computes a score for every input token that reflects how relevant that token is to the next output word. The scores are computed by a small feedforward neural network with learnable weights (which is why this is often called additive attention) and are then normalized into attention weights.
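As a rough illustration of the feedforward scoring described above, the sketch below computes an additive score of the form `v . tanh(W_s s + W_h h)` for each input token. The matrices `W_s`, `W_h`, the vector `v`, and all numeric values are hypothetical toy parameters chosen for the example; in a real model they would be learned.

```python
import math

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]

def additive_score(decoder_state, encoder_state, W_s, W_h, v):
    """Additive (feedforward) score: v . tanh(W_s @ s + W_h @ h)."""
    projected = [a + b for a, b in zip(matvec(W_s, decoder_state),
                                       matvec(W_h, encoder_state))]
    return sum(v_k * math.tanh(p) for v_k, p in zip(v, projected))

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical toy parameters (would be learned during training).
W_s = [[0.5, -0.2], [0.1, 0.3]]
W_h = [[0.4, 0.0], [-0.3, 0.2]]
v = [1.0, -1.0]

decoder_state = [0.2, 0.7]                                # current decoder state
encoder_states = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]     # one per input token

scores = [additive_score(decoder_state, h, W_s, W_h, v) for h in encoder_states]
weights = softmax(scores)  # recomputed at every decoding step
print(weights)
```

Note that the score function runs once per input token at every decoding step, which is where the extra cost of this style of attention comes from.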


The Bahdanau attention mechanism has been used extensively in encoder-decoder NMT architectures. Its strengths include:

* High accuracy and fluency in generated translations

* Ability to handle long-range dependencies in language

However, the Bahdanau attention mechanism has some weaknesses:

* Requires a feedforward pass for every pair of decoding step and input token, which increases computational cost

* Can over- or under-attend to certain tokens

Luong Attention Mechanism

The Luong attention mechanism is another widely used attention mechanism in NMT, introduced by Thang Luong and colleagues in 2015. It is a general-purpose mechanism that can be applied to various NMT architectures. It assigns one weight to each input token, and in its simplest form the score behind that weight is a dot product between the decoder state and the encoder state.
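The dot-product scoring mentioned above can be sketched in a few lines. The second function shows the closely related "general" variant, which inserts a weight matrix between the two states; the matrix `W_a` and all values here are toy assumptions for illustration.

```python
def dot_score(target_state, source_state):
    """Dot-product score: no extra parameters, states must have equal size."""
    return sum(t * s for t, s in zip(target_state, source_state))

def general_score(target_state, source_state, W_a):
    """'General' score: target . (W_a @ source), with W_a a learned matrix."""
    projected = [sum(w * s for w, s in zip(row, source_state)) for row in W_a]
    return dot_score(target_state, projected)

# Hypothetical toy values.
target_state = [1.0, 2.0]
source_state = [3.0, 4.0]
W_a = [[2.0, 0.0], [0.0, 1.0]]

print(dot_score(target_state, source_state))           # 11.0
print(general_score(target_state, source_state, W_a))  # 14.0
```

As with the additive form, the raw scores would then be passed through a softmax to obtain attention weights.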


The Luong attention mechanism has been widely used in encoder-decoder NMT architectures. Its strengths include:

* High accuracy and fluency in generated translations

* Ability to handle long-range dependencies in language

However, the Luong attention mechanism has some weaknesses:

* Can be computationally expensive on long inputs, because the global variant scores every input position at every decoding step

* May require additional hyperparameter tuning to achieve optimal results

Other Attention Mechanisms

Other attention mechanisms used in NMT include:

* Global attention mechanism: builds the context vector from all input tokens

* Local attention mechanism: builds the context vector from a small window of input tokens

* Heterogeneous attention mechanism: uses a hybrid of different attention mechanisms to represent the importance of each input token


The global attention mechanism uses a global context vector: at each decoding step it computes attention weights over all input tokens and takes the weighted sum of their encoder states.

The local attention mechanism uses a local context vector: the weighted sum is taken only over a window of input tokens around a chosen position, which lowers the cost on long sentences.
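A windowed weighting of this kind can be sketched as follows. This loosely follows the predictive local attention idea from Luong and colleagues, where weights inside the window are additionally scaled by a Gaussian centred on the chosen position; the function name, the fixed `center`, and the choice of standard deviation as half the window half-width are assumptions made for this example.

```python
import math

def local_attention_weights(scores, center, half_width):
    """Attention weights restricted to [center - half_width, center + half_width].

    Positions outside the window get weight 0. Inside the window, softmax
    weights are scaled by a Gaussian centred on `center`.
    """
    lo = max(0, int(center) - half_width)
    hi = min(len(scores) - 1, int(center) + half_width)
    window = scores[lo:hi + 1]

    m = max(window)
    exps = [math.exp(s - m) for s in window]
    total = sum(exps)

    sigma = half_width / 2.0  # assumed spread of the Gaussian
    weights = [0.0] * len(scores)
    for offset, e in enumerate(exps):
        pos = lo + offset
        gauss = math.exp(-((pos - center) ** 2) / (2 * sigma ** 2))
        weights[pos] = (e / total) * gauss
    return weights

# Hypothetical toy scores for a 7-token input.
scores = [0.1, 0.4, 2.0, 1.5, 0.3, 0.2, 0.9]
weights = local_attention_weights(scores, center=2.6, half_width=2)
print(weights)  # positions 5 and 6 fall outside the window and get 0.0
```

Only `2 * half_width + 1` positions are scored per step regardless of sentence length, which is the source of the savings over global attention.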

The heterogeneous attention mechanism uses a hybrid of different attention mechanisms to represent the importance of each input token, with the aim of combining their strengths.

In conclusion, attention mechanisms have played a crucial role in improving the accuracy of NMT systems. The Bahdanau and Luong attention mechanisms are widely used in NMT and have been shown to achieve high accuracy and fluency in generated translations. However, each attention mechanism has its strengths and weaknesses, and the right choice depends on the specific NMT architecture and application.

Designing Efficient Attention Mechanisms for NMT

In NMT, the design of the attention mechanism can significantly affect both the quality and the efficiency of the whole model. With growing demand for high-quality machine translation, researchers and developers keep looking for ways to optimize attention so that models can attend to the relevant information at lower cost.

Attention mechanisms in NMT let the model focus on the parts of the input sequence that are relevant to producing the target output. However, as sequence lengths increase, the computational cost of these mechanisms can become prohibitive. Designing parameter-efficient attention mechanisms is therefore important for balancing quality and efficiency. One approach is parameter sharing, where learned parameters are shared across different input positions or time steps. This can substantially reduce model size while maintaining translation quality.

Parameter-Efficient Attention Mechanisms

Parameter-efficient attention mechanisms are a class of attention models that aim to minimize the number of parameters required while maintaining quality. This can be achieved through several techniques, including:

- Parameter Sharing: As mentioned earlier, parameter sharing means reusing learned parameters across different input positions or time steps. This can be done by sharing weights, scaling factors, or even the entire attention module.

- Low-Rank Attention: This approximates the attention weight matrices with a low-rank matrix factorization. It can significantly reduce the number of parameters required while maintaining accuracy.

- Sparse Attention: This learns a sparse attention pattern in which the model focuses on a subset of the input sequence. It can be achieved through techniques such as sparse neural networks or learned masks.

- Multi-Head Attention: This uses several attention heads that operate on different subspaces of the input representation. Each head learns distinct attention patterns, allowing the model to capture more complex relationships.
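To give a feel for the low-rank idea in the list above, the sketch below compares the parameter count of a full square projection with that of a rank-`r` factorization into two thin matrices. The sizes 512 and 64 are arbitrary illustrative choices, and the helper names are made up for this example.

```python
def full_params(d_model):
    """Parameters in a full d_model x d_model projection matrix."""
    return d_model * d_model

def low_rank_params(d_model, rank):
    """Parameters in U (d_model x rank) plus V (rank x d_model)."""
    return 2 * d_model * rank

def matvec(matrix, vector):
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]

def low_rank_project(x, U, V):
    """Compute U @ (V @ x) without ever forming the full square matrix."""
    return matvec(U, matvec(V, x))

print(full_params(512))          # 262144
print(low_rank_params(512, 64))  # 65536

# Tiny worked example with d_model = 3 and rank = 1 (toy values).
U = [[1.0], [2.0], [3.0]]        # 3 x 1
V = [[1.0, 0.0, -1.0]]           # 1 x 3
print(low_rank_project([4.0, 5.0, 6.0], U, V))  # [-2.0, -4.0, -6.0]
```

The saving grows as the rank shrinks relative to the model dimension, at the price of restricting which projections the layer can represent.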

By applying these techniques, we can design attention mechanisms that use fewer parameters than traditional attention models while maintaining or even improving translation quality. For instance, the Low-Rank Attention approach, introduced in the paper “Low-Rank Attention Mechanism” [1], reported a 2x reduction in parameter count while maintaining accuracy on the WMT 2019 English-to-German translation task.

Designing a New Attention Mechanism: Attention with Adaptive Filtering

To push the boundaries of attention design, we propose a new attention mechanism, dubbed Adaptive Filtering Attention (AFA). The key idea behind AFA is to learn a set of adaptive filters, which are used to selectively focus on relevant parts of the input sequence.

$$AF(x) = W_{af} \cdot g(x)$$

where $g(x)$ represents a non-linear transform of the input sequence, and $W_{af}$ denotes the learned adaptive filter weights.

To train the AFA model, we propose a novel objective function that combines sequence-level and attention-weighted reconstruction losses.

$$\mathcal{L} = \mathcal{L}_{seq} + \mathcal{L}_{att}$$

where $\mathcal{L}_{seq}$ is the standard sequence-level reconstruction loss, and $\mathcal{L}_{att}$ represents the attention-weighted reconstruction loss, which is computed as:

$$\mathcal{L}_{att} = \sum_{i=1}^{T} \alpha_i \, \mathcal{L}_{recon}(x_i, y_i)$$

| Term | Description |
|---|---|
| $\mathcal{L}_{recon}(x_i, y_i)$ | Reconstruction loss between the true input token $x_i$ and the predicted output token $y_i$. |
| $\alpha_i$ | Attention weight for the $i$-th token. |

The AFA model is trained to minimize this combined loss. Because each token's reconstruction loss is scaled by its attention weight, errors on the tokens the model attends to most are penalized most heavily, which pushes the model to focus on the most relevant parts of the input sequence while reconstructing the target output.
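The combined objective defined above can be evaluated numerically as in the following sketch. The attention weights, per-token losses, and sequence-level loss are invented toy numbers, and the function names are illustrative; nothing here reflects a reference implementation of AFA.

```python
def attention_weighted_loss(attention_weights, token_losses):
    """L_att = sum over i of alpha_i * L_recon(x_i, y_i)."""
    if len(attention_weights) != len(token_losses):
        raise ValueError("need one attention weight per token loss")
    return sum(a * l for a, l in zip(attention_weights, token_losses))

def total_loss(seq_loss, attention_weights, token_losses):
    """L = L_seq + L_att."""
    return seq_loss + attention_weighted_loss(attention_weights, token_losses)

# Hypothetical toy values for a 3-token sequence.
alpha = [0.5, 0.25, 0.25]   # attention weights, summing to 1
recon = [0.5, 2.0, 1.0]     # per-token reconstruction losses
seq_loss = 2.0              # sequence-level loss

print(attention_weighted_loss(alpha, recon))  # 1.0
print(total_loss(seq_loss, alpha, recon))     # 3.0
```

In this toy case the second token has the largest raw loss, but its contribution is damped by its smaller attention weight.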

We anticipate that the AFA approach will provide state-of-the-art results on various machine translation tasks while offering a high degree of parameter efficiency.

[1] Low-Rank Attention Mechanism. (2019). In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics (pp. 2343-2353).

Attention-based NMT for Low-Resource Languages

Low-resource languages pose a significant challenge for training Neural Machine Translation (NMT) models because so little training data is available. As a result, the models tend to perform poorly and may generate inaccurate translations. The scarcity of data makes it hard for the model to learn relevant patterns and relationships between words, leading to suboptimal performance.

However, attention-based NMT can help in low-resource settings by focusing on the most relevant parts of the input sequence during translation. This lets the model make better use of the limited training data and generate more accurate translations.

Challenges of Training NMT Models for Low-Resource Languages

- The limited amount of available training data makes it hard for the model to learn relevant patterns and relationships between words.

- The model may struggle to capture the nuances of the language, leading to suboptimal performance.

- The scarcity of data may result in overfitting, where the model becomes too specialized to the training data and fails to generalize to new, unseen data.

- The model may not be able to capture the complexities of the language, such as idioms, expressions, and cultural references.

Improving Performance with Attention-Based NMT

With an attention mechanism, a model trained on limited data can:

- Focus on the most relevant words in the input sequence.

- Capture more of the nuances of the language, such as idioms and expressions.

- Generalize better to new, unseen data.

- Generate more accurate translations.

The attention mechanism can be implemented with a variety of techniques, including weighted sums and dot-product attention. These techniques let the model weight the importance of different words in the input sequence and focus on the most relevant ones during translation.

By incorporating attention-based NMT, low-resource languages can benefit from improved translation quality. This can support the development of these languages and enable better communication and cooperation between people who speak different languages. With the growing availability of attention-based NMT models, speakers of low-resource languages can increasingly take advantage of this technology.

Attention-based models have also been applied successfully to other natural language processing tasks, including machine comprehension and language modeling. These tasks often require the ability to focus on specific parts of the input data and generate relevant outputs, and attention mechanisms help with exactly that.

Better NMT for low-resource languages has significant implications for language development and translation quality. It can affect global communication, trade, and culture by letting people connect and collaborate more effectively across language and cultural boundaries.

As attention-based NMT continues to evolve and improve, we can expect significant advances in translation capabilities, particularly for low-resource languages.

This technology has the potential to change the way we communicate across language barriers, and it has significant implications for the development of low-resource languages.


Comparing Attention-based NMT with Other Translation Systems

In recent years, attention-based Neural Machine Translation (NMT) has emerged as a leading approach for translating languages. Despite its success, it is important to compare its performance with other translation systems to understand its strengths and weaknesses. This section compares attention-based NMT with statistical machine translation and rule-based machine translation.

The Rise of Attention-based NMT: A Breakthrough in Translation Performance

Attention-based Neural Machine Translation has shown significant improvements in translation quality over traditional approaches. One key aspect that sets it apart is its ability to weigh the importance of different input words during the translation process. The attention mechanism lets the model focus on the specific words or phrases in the source sentence that are most relevant to the word being generated. As a result, it produces more accurate and context-specific translations.

However, it is important to compare this approach with other existing translation systems to understand its relative strengths and weaknesses. Below, we examine attention-based NMT alongside statistical machine translation and rule-based machine translation.

A Closer Look at Statistical Machine Translation

Statistical Machine Translation (SMT) was the de facto standard for translation systems for many years before NMT. It relies on statistical techniques to learn translation probabilities from large corpora of bilingual text. These probabilities are then used to translate new, unseen text. SMT's main strength lies in its ability to learn translation patterns from large amounts of training data, making it suitable for high-resource languages. However, it struggles with low-resource languages and tends to produce less accurate translations than attention-based NMT.
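For reference, the translation probabilities mentioned above are classically combined in the noisy-channel formulation of SMT. This is the standard textbook form rather than anything specific to the systems discussed here: given a source sentence $f$, the system searches for the target sentence $\hat{e}$ that maximizes

$$\hat{e} = \arg\max_{e} P(e \mid f) = \arg\max_{e} P(f \mid e)\, P(e)$$

where $P(f \mid e)$ is the translation model learned from bilingual text and $P(e)$ is a language model learned from target-language text. The second equality follows from Bayes' rule, since $P(f)$ does not depend on $e$.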

Statistical Machine Translation approaches have several key characteristics that set them apart from attention-based NMT:

- Model complexity: Statistical machine translation systems are built from separate components, typically a translation model (such as a phrase table) and a language model, rather than a single end-to-end neural network.

- Training requirements: Statistical machine translation requires large amounts of bilingual training data and computational resources.

- Language coverage: Statistical machine translation is generally more suitable for high-resource languages with abundant translation data.

Rule-based Machine Translation vs. Attention-based NMT

Rule-based Machine Translation (RBMT) has been around for decades, relying on hand-crafted dictionaries, grammars, and rules to generate translations. However, its performance is usually limited to specific domains or languages. RBMT's main strength lies in its ability to translate specific terms or phrases accurately and consistently. However, it struggles with general translation tasks and tends to generate translations that sound unnatural or awkward.

Key differences between RBMT and attention-based NMT:

- Knowledge representation: Rule-based Machine Translation relies on hand-crafted rules and dictionaries to represent knowledge, while attention-based NMT uses neural networks to learn the underlying translation patterns.

- Domain specificity: RBMT is often designed for specific domains or languages, while attention-based NMT is more generalizable and can handle a wide range of languages and domains.

- Translation quality: RBMT often produces higher-quality translations in specific domains, while attention-based NMT tends to perform better on general translation tasks.
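To illustrate what "hand-crafted rules and dictionaries" means in practice, here is a deliberately tiny rule-based translator. The three-word English-to-Spanish lexicon and the single reordering rule are invented for this example and are nowhere near what a real RBMT system contains.

```python
# Hypothetical hand-written lexicon and word classes (English -> Spanish).
LEXICON = {"the": "el", "red": "rojo", "car": "coche"}
ADJECTIVES = {"red"}
NOUNS = {"car"}

def translate(sentence):
    """Translate word by word after applying one reordering rule."""
    words = sentence.lower().split()
    reordered = []
    i = 0
    while i < len(words):
        # Rule: English "adjective noun" becomes "noun adjective" in Spanish.
        if i + 1 < len(words) and words[i] in ADJECTIVES and words[i + 1] in NOUNS:
            reordered.extend([words[i + 1], words[i]])
            i += 2
        else:
            reordered.append(words[i])
            i += 1
    # Words missing from the lexicon are passed through untranslated.
    return " ".join(LEXICON.get(w, w) for w in reordered)

print(translate("the red car"))   # el coche rojo
print(translate("the blue car"))  # el blue coche
```

The second call shows the brittleness discussed above: "blue" is in neither the lexicon nor the adjective list, so it is left untranslated and the reordering rule never fires. An attention-based NMT model instead learns both vocabulary and word order from data.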

Case Studies of Attention-based NMT

Attention-based Neural Machine Translation has made its mark in industry, with numerous real-world applications showcasing its effectiveness. Google Translate, a widely used translation tool, has integrated attention-based NMT into its system. This section looks at the use of attention-based NMT in real-world applications, highlighting the benefits and drawbacks.

Google Translate

Google Translate is a leading online translation service that lets users translate text and web pages between over 100 languages. The platform uses attention-based NMT to provide accurate and efficient translations. In the GNMT system that Google described publicly in 2016, a deep LSTM encoder processes the source language and an LSTM decoder with attention generates the target language.

* Benefits:

  + Improved translation accuracy: Google Translate's attention-based NMT system has significantly improved translation accuracy, enabling users to communicate effectively across language barriers.

  + Efficient translations: The system can process high volumes of text and generate translations in real time, making it an essential tool for individuals and businesses.

  + Continuous improvement: Google Translate's attention-based NMT system is regularly updated and refined, so translations remain accurate and efficient over time.

* Drawbacks:

  + Limited domain knowledge: Although Google Translate's attention-based NMT system has improved significantly, it still struggles with specialized or technical content, such as medical or financial terminology.

  + Cultural nuances: Attention-based NMT systems can struggle to capture cultural nuances and idioms, which can lead to inaccurate or confusing translations.

Microsoft Translator

Microsoft Translator is another popular translation platform that has integrated attention-based NMT into its system. The platform offers real-time translations for text, speech, and web pages, supporting over 60 languages. Microsoft Translator's attention-based NMT system uses a sequence-to-sequence model with attention mechanisms to process the source language and generate the target language.

* Benefits:

  + Improved translation accuracy: Microsoft Translator's attention-based NMT system has improved translation accuracy, enabling users to communicate effectively across language barriers.

  + Real-time translations: The system can process high volumes of text and generate translations in real time, making it an essential tool for individuals and businesses.

  + Integration with other tools: Microsoft Translator's attention-based NMT system can integrate with other Microsoft tools, such as Office and Dynamics, to provide seamless translations.

* Drawbacks:

  + Limited domain knowledge: Although Microsoft Translator's attention-based NMT system has improved, it still struggles with specialized or technical content, such as medical or financial terminology.

  + Cultural nuances: Attention-based NMT systems can struggle to capture cultural nuances and idioms, which can lead to inaccurate or confusing translations.

DeepL

DeepL is a neural machine translation platform that offers advanced translation capabilities for text and web pages, supporting multiple languages. DeepL's attention-based NMT system uses a Transformer model with attention mechanisms to process the source language and generate the target language.

* Benefits:

  + Improved translation accuracy: DeepL's attention-based NMT system has improved translation accuracy, enabling users to communicate effectively across language barriers.

  + Advanced translation capabilities: The system can process complex and technical content, making it a useful tool for businesses and individuals.

  + Real-time translations: DeepL's attention-based NMT system can generate translations in real time.

* Drawbacks:

  + Limited free availability: DeepL's free tier is limited, and users must pay for premium features.

  + Cultural nuances: Attention-based NMT systems can struggle to capture cultural nuances and idioms, which can lead to inaccurate or confusing translations.

Closing Summary

In conclusion, attention-based neural machine translation has transformed the field of machine translation, enabling models to learn to translate between languages with far better accuracy than earlier approaches. As the technology continues to evolve, we can expect even more sophisticated solutions for efficient language translation.

Popular Questions

What are the main advantages of attention-based NMT?

Attention-based NMT can handle long-range dependencies, improve translation quality, and let the model focus on specific parts of the input sequence.

Can attention-based NMT be used for low-resource languages?

Yes, attention-based NMT can be used for low-resource languages. It can improve translation quality by helping the model make better use of limited training data, although scarce data remains a challenge.

How does attention-based NMT compare to statistical machine translation and rule-based machine translation?

Attention-based NMT generally outperforms statistical machine translation and rule-based machine translation on general translation tasks, while rule-based systems can still do well in narrow, specialized domains.

Can attention-based NMT be used in other industries beyond language translation?

Yes, attention-based models can be used in various areas, including speech recognition, text summarization, and language generation.