Gaussian Processes for Machine Learning Book Essentials: a comprehensive guide to harnessing the power of Gaussian processes in machine learning models.

This book delves into the fundamental theory of Gaussian Processes and their applications in machine learning, providing in-depth information on regression and classification using mean and covariance functions.

Gaussian Processes for Machine Learning

Gaussian Processes (GPs) are a powerful, non-parametric approach to Machine Learning (ML), enabling the discovery of complex relationships between input and output variables. This framework treats learning from data as Bayesian inference over functions: it places a prior distribution over functions and combines it with the observed data points to produce a posterior distribution over functions.

Gaussian Processes have historical roots in statistics, particularly in the field of functional regression. In the 1990s, GPs started gaining traction in the ML community due to their ability to model and predict complex, non-linear data. Since then, GPs have been applied in various domains, including robotics, computer vision, and time series analysis. Some notable applications of GPs include robot motion planning, object recognition, and climate modeling.

Core Benefits of Gaussian Processes

The core benefits of using GPs in ML models can be summarized as follows:

Flexibility

With a suitable covariance function, a GP can represent a very broad class of functions, making it suitable for a wide range of applications with non-linear relationships between input and output variables. This flexibility lets GP models handle complex data without strong assumptions about the functional form of the model.

Non-Parametric Model

GPs provide a means of modeling data without explicitly specifying the underlying relationships. This characteristic lets them adapt to new data points without requiring the model to be re-specified. Instead, GPs update their distribution based on observed data, allowing them to incorporate both prior knowledge and new observations.

Uncertainty Quantification

The posterior distribution over functions provides valuable information about the uncertainty of predictions. This can be particularly useful in scenarios where data is noisy or uncertain, enabling decision-makers to consider the risks associated with predicted model outcomes.

Representation in Probability Space

Treating the GP as a prior over functions and combining it with observed data produces a posterior probability distribution. This posterior supports quantitative statements, including credible intervals and the probability that the function takes values in a given range.

Scalability

Exact GP inference scales poorly with dataset size, but GP approximations can scale to large datasets by using computational techniques such as stochastic variational inference (SVI) or deterministic sparse approximations (e.g., inducing-point methods). This scalability allows larger datasets and more complex problems to be handled with relative efficiency.

Scalable GP Algorithm

The computational efficiency of GP inference can be improved by using approximate methods, which enable processing of larger datasets that would not allow for exact computation (e.g., sparse approximations, or faster algorithms such as SVI).

Smoothness and Gradient Information

A GP's posterior distribution can model and make use of gradient information, reflecting smoothness in the function. Together with the predictive uncertainty, this helps identify regions with varying levels of uncertainty, indicating areas where higher resolution (for example, additional data points) may be beneficial.

Gradient-Based Smoothing

The combination of GP uncertainty and function smoothness (captured by the gradient information) results in a probabilistic representation that takes into account both the uncertainty in the predictions and the expected level of change in the underlying function. This makes it easier to tell smooth regions apart from regions where higher resolution is useful.

Prior Knowledge Incorporation

GPs provide a direct means to incorporate prior knowledge by choosing an appropriate prior distribution over functions. This allows for efficient learning of models that reflect domain-expert knowledge and constraints. Priors over functions can encode knowledge about the relationships, the constraints, or even the functional representation, facilitating domain-specific model formulation.

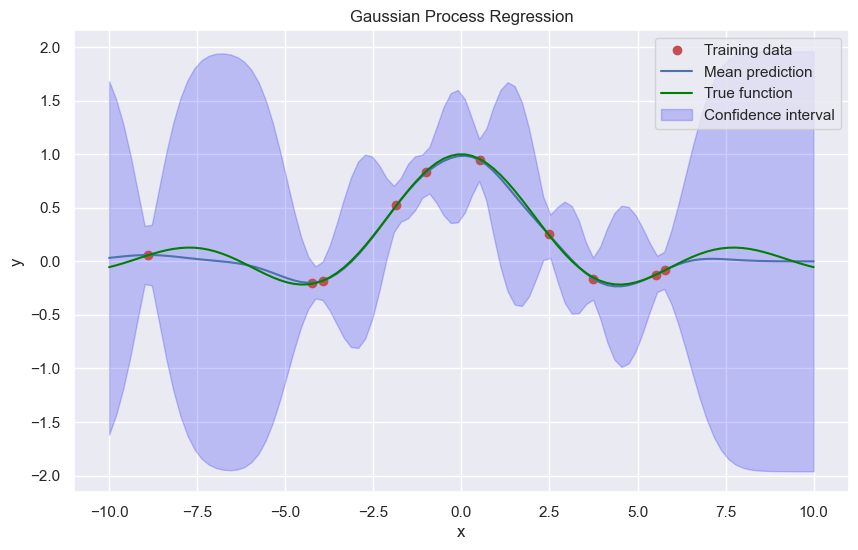

Gaussian Process Regression

Gaussian Process regression (GPR) is a powerful non-parametric model for predicting continuous outputs. It applies the Gaussian Process framework to regression tasks. In this section, we'll dive deeper into GPR, exploring its mean and covariance functions, advantages, limitations, and scenarios where it outperforms traditional linear regression.

Gaussian Process regression is based on the concept of a Gaussian Process, which generalizes the Gaussian distribution from random vectors to random functions. A Gaussian Process is a collection of random variables, any finite number of which have a joint multivariate normal distribution. The key idea behind GPR is to model the unknown function with a Gaussian Process, which encodes the uncertainty in the prediction.

Mean and Covariance Functions

In GPR, the mean and covariance functions play a crucial role in defining the Gaussian Process. The mean function, denoted by m(x), represents the expected value of the function at a given input x. The covariance function, denoted by k(x, x’), represents the covariance between the function values at two different inputs x and x’.

The mean function can be any function of the input (it is often set to zero), while the covariance function must be positive semi-definite. A common choice is the radial basis function (RBF), which is also known as the squared exponential kernel. The RBF kernel is often used in GPR due to its smoothness and flexibility.

k(x, x’) = σ^2 * exp(-||x - x’||^2 / (2 * l^2))

where σ^2 is the signal variance, and l is the length scale.
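The kernel formula above can be sketched in a few lines of NumPy. This is a minimal illustration, not a library implementation: the function name `rbf_kernel` and its parameter names are chosen here for the example, and inputs are assumed to be arrays of shape (n, d).

```python
import numpy as np

def rbf_kernel(X1, X2, signal_variance=1.0, length_scale=1.0):
    """Squared exponential kernel: sigma^2 * exp(-||x - x'||^2 / (2 * l^2)).

    X1 has shape (n1, d) and X2 has shape (n2, d); the result is (n1, n2).
    """
    X1 = np.atleast_2d(X1)
    X2 = np.atleast_2d(X2)
    # Pairwise squared Euclidean distances between rows of X1 and X2.
    sq_dists = (
        np.sum(X1**2, axis=1)[:, None]
        + np.sum(X2**2, axis=1)[None, :]
        - 2.0 * X1 @ X2.T
    )
    sq_dists = np.maximum(sq_dists, 0.0)  # guard against tiny negative round-off
    return signal_variance * np.exp(-sq_dists / (2.0 * length_scale**2))

# Three one-dimensional inputs; nearby inputs get a larger covariance.
X = np.array([[0.0], [1.0], [3.0]])
K = rbf_kernel(X, X, signal_variance=2.0, length_scale=1.0)
```

The diagonal of `K` equals the signal variance, and the off-diagonal entries decay as the inputs move apart relative to the length scale.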

Advantages of GPR

GPR offers several advantages over traditional linear regression:

- Non-parametric: GPR is a non-parametric model, meaning it doesn't assume a specific functional form for the underlying function. This makes it more flexible and capable of modeling complex relationships.

- Uncertainty estimation: GPR provides uncertainty estimates in the form of predictive variance, which allows for quantifying the confidence in the predictions.

- Handling noisy data: GPR models observation noise explicitly through a noise variance term, so it can handle noisy data with few additional assumptions and still provide useful predictions.
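To make the predictive mean and variance concrete, here is a minimal sketch of exact GPR with a zero prior mean and an RBF kernel, using the standard Cholesky-based posterior equations. The function names, the toy sine data, and the hyperparameter values are assumptions made for this illustration.

```python
import numpy as np

def rbf_kernel(X1, X2, signal_variance=1.0, length_scale=1.0):
    sq = np.sum(X1**2, 1)[:, None] + np.sum(X2**2, 1)[None, :] - 2.0 * X1 @ X2.T
    return signal_variance * np.exp(-np.maximum(sq, 0.0) / (2.0 * length_scale**2))

def gp_predict(X_train, y_train, X_test, noise_variance=1e-2, **kernel_args):
    """Posterior mean and variance of the latent function at X_test."""
    K = rbf_kernel(X_train, X_train, **kernel_args)
    K_s = rbf_kernel(X_train, X_test, **kernel_args)
    K_ss_diag = np.diag(rbf_kernel(X_test, X_test, **kernel_args))
    # Cholesky factor of the noisy training covariance K + sigma_n^2 * I.
    L = np.linalg.cholesky(K + noise_variance * np.eye(len(X_train)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = K_ss_diag - np.sum(v**2, axis=0)
    return mean, var

# Toy data (assumed for illustration): noisy samples of sin(x) on [0, 5].
rng = np.random.default_rng(0)
X_train = np.linspace(0.0, 5.0, 20)[:, None]
y_train = np.sin(X_train[:, 0]) + 0.1 * rng.standard_normal(20)

# One test point inside the data range and one far outside it.
X_test = np.array([[2.5], [10.0]])
mean, var = gp_predict(X_train, y_train, X_test, noise_variance=0.01, length_scale=1.0)
```

The variance at the point far from the training data reverts to the prior signal variance, while the variance inside the data range is much smaller. This is the behavior that makes the uncertainty estimate informative.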

Limitations of GPR

While GPR is a powerful model, it is not without its limitations:

- Computational cost: GPR can be computationally expensive, especially for large datasets. Exact inference requires factorizing an n × n kernel matrix, which costs O(n^3) time and O(n^2) memory for n data points.

- Hyperparameter tuning: GPR requires selecting several hyperparameters, such as the length scale and signal variance, which can be challenging and may require expertise.

- Sensitivity to noise: While GPR can handle noisy data, a Gaussian noise model can be sensitive to extreme outliers or data points with unusually high noise levels.

Scenarios where GPR outperforms traditional linear regression

GPR tends to outperform traditional linear regression in several scenarios:

- High-dimensional data: with a suitable kernel, GPR can remain usable on fairly high-dimensional inputs, although stationary kernels such as the RBF also degrade as dimensionality grows. Linear regression can overfit when the number of features is large relative to the number of data points.

- Non-linear relationships: GPR can capture non-linear relationships, whereas linear regression assumes a linear relationship.

- Noisy data: GPR estimates the noise level as part of the model and reports predictive uncertainty, whereas ordinary least-squares linear regression is sensitive to outliers and gives no per-prediction uncertainty by default.

Gaussian Process Classification

Gaussian Process classification (GPC) is an extension of Gaussian Processes to classification tasks, most commonly binary classification. In traditional binary classification, the goal is to predict one of two classes based on input features. By combining the concepts of Gaussian Processes and decision theory, we can develop a robust classification method that leverages the probabilistic nature of Gaussian Processes.

Decision Theory for Gaussian Process Classification

Gaussian Process classification uses decision theory to select a class label for a given input. Decision theory is based on the concept of making decisions under uncertainty. We can calculate the probability of each class label given the input features and choose the one with the highest probability. By using decision theory, we can derive a principled method for selecting the class label.

Gaussian Process classification combines the following key components:

- Predictive distributions: The predictive distribution for a given input is a probability distribution over the class labels. This distribution captures the uncertainty of the classification.

- Decision threshold: A decision threshold is used to separate the two classes based on the predictive probabilities.

- Decision: The class label is chosen based on the predicted probabilities and the decision threshold.

A key aspect of decision theory is the concept of loss functions. Loss functions quantify the cost of making incorrect decisions. By choosing the class label with the lowest expected loss, we can make more informed decisions.

Implementation of Gaussian Process Classification

The implementation of Gaussian Process classification typically involves the following steps:

* Define the decision threshold: Determine the decision threshold based on the specific problem and the trade-off between true positives and false positives.

* Compute predictive probabilities: Calculate the predictive probabilities for each class label given the input features.

* Decide: Select the class label with the highest predictive probability, or use a decision function based on the loss function.
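The decision step can be sketched as follows, assuming the predictive probabilities of the positive class have already been computed by some GP classifier. The function names and the loss values are hypothetical choices for this example; the point is that unequal misclassification costs shift the effective decision threshold away from 0.5.

```python
import numpy as np

def decide(p_positive, loss_false_positive=1.0, loss_false_negative=1.0):
    """Return 1 where predicting the positive class has lower expected loss, else 0."""
    p_positive = np.asarray(p_positive, dtype=float)
    # Predicting 1 costs loss_false_positive when the true label is 0.
    expected_loss_predict_1 = (1.0 - p_positive) * loss_false_positive
    # Predicting 0 costs loss_false_negative when the true label is 1.
    expected_loss_predict_0 = p_positive * loss_false_negative
    return (expected_loss_predict_1 < expected_loss_predict_0).astype(int)

def implied_threshold(loss_false_positive=1.0, loss_false_negative=1.0):
    """Probability above which predicting the positive class minimizes expected loss."""
    return loss_false_positive / (loss_false_positive + loss_false_negative)

# Predictive probabilities of the positive class for four inputs (made-up values).
probs = np.array([0.1, 0.3, 0.6, 0.9])

labels_symmetric = decide(probs)  # equal costs, threshold 0.5
labels_cautious = decide(probs, loss_false_positive=1.0, loss_false_negative=4.0)
```

With equal costs the rule reduces to picking the more probable class. When a false negative is four times as costly as a false positive, the threshold drops to 0.2, so the input with probability 0.3 is also labeled positive.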

Comparing with Other Machine Learning Algorithms

Gaussian Process classification has several advantages over other machine learning algorithms:

* Robustness to noise: Gaussian Process classification is fairly robust to noisy data and can capture complex relationships between features.

* Uncertainty estimation: GPC provides uncertainty estimates for the predicted class labels, which can be useful in decision-making under uncertainty.

* Non-parametric: Gaussian Process classification is a non-parametric method, meaning its complexity adapts to the dataset, and Bayesian averaging over functions helps limit overfitting.

However, GPC also has some limitations:

* Computational complexity: GPC can be computationally expensive, especially for large datasets.

* Requires good hyperparameters: GPC requires a good choice of kernel hyperparameters to achieve optimal performance.

Gaussian Process classification has been applied to many real-world problems, including:

* Medical diagnosis: GPC has been used for medical diagnosis, such as classifying patients with diabetes based on clinical features.

* Image classification: GPC has been applied to image classification tasks, such as recognizing objects in scenes.

* Finance: GPC has been used for stock price prediction and portfolio optimization tasks.

Gaussian Process classification offers a powerful approach to binary classification tasks, leveraging the probabilistic nature of Gaussian Processes and decision theory.

Training Gaussian Processes

Training a Gaussian Process (GP) involves finding the hyperparameters that maximize the marginal likelihood, also known as the evidence. In practice its logarithm, the log marginal likelihood, is maximized. This step is crucial in GP modeling because the chosen hyperparameters determine the posterior distribution over the function values given the observed data. In this section, we'll delve into the details of training GPs and explore various optimization techniques used to maximize the marginal likelihood.

Maximization of Marginal Likelihood

The log marginal likelihood of a GP with Gaussian noise is given by:

ln(p(y|X)) = -0.5 * y^T * (K + Σ)^-1 * y - 0.5 * ln(det(K + Σ)) - n/2 * ln(2 * π)

where y is the vector of observed outputs, X is the input data, K is the kernel matrix, Σ is the noise covariance matrix, and n is the number of data points.
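As a rough sketch, the three terms of this expression can be computed with a Cholesky factorization, which avoids forming the inverse and the determinant explicitly. The example assumes isotropic noise, Σ = noise_variance * I, and a unit-length-scale RBF kernel on toy data; both are choices made here for illustration.

```python
import numpy as np

def log_marginal_likelihood(K, y, noise_variance):
    """ln p(y | X) for a zero-mean GP, assuming Sigma = noise_variance * I."""
    n = len(y)
    L = np.linalg.cholesky(K + noise_variance * np.eye(n))
    # alpha = (K + Sigma)^-1 y, obtained from two triangular-system solves.
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    data_fit = -0.5 * y @ alpha
    # ln det(K + Sigma) = 2 * sum(ln diag(L)), so this is -0.5 * ln det(K + Sigma).
    complexity = -np.sum(np.log(np.diag(L)))
    constant = -0.5 * n * np.log(2.0 * np.pi)
    return data_fit + complexity + constant

# Toy data and an RBF kernel matrix with unit length scale (assumed values).
X = np.linspace(0.0, 4.0, 10)[:, None]
y = np.sin(X[:, 0])
K = np.exp(-0.5 * (X - X.T) ** 2)

lml = log_marginal_likelihood(K, y, noise_variance=0.1)
```

The first term rewards fitting the data, the second penalizes overly flexible models, and the third is a normalization constant. Maximizing their sum over the hyperparameters trades fit against complexity.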

To maximize the marginal likelihood, we need to find the optimal parameters of the GP, including the hyperparameters of the kernel. This is typically done with gradient-based optimization. Alternatives include Expectation-Maximization (EM) and, when a full posterior over the hyperparameters is wanted rather than a point estimate, Markov Chain Monte Carlo (MCMC).

Optimization Techniques

There are several optimization techniques used to maximize the marginal likelihood:

- L-BFGS: This is a popular quasi-Newton optimization technique that builds a limited-memory approximation of the Hessian matrix from recent gradients to speed up the optimization process.

- Gradient-based methods: These methods use the gradient of the marginal likelihood with respect to the hyperparameters to update the parameters. L-BFGS is one example.

- Grid search: This is a brute-force method that involves searching the hyperparameter space using a grid of values.

- Random search: This method involves sampling the hyperparameter space randomly and selecting the best combination of hyperparameters.

When using gradient-based methods, it's important to note that the gradient of the marginal likelihood with respect to the hyperparameters can be computed using the derivative of the kernel with respect to the hyperparameters. This can be done analytically by differentiating the kernel function, or numerically using finite differences.
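The sketch below compares the two options for a single hyperparameter, the RBF length scale. It uses the standard identity that the derivative of the log marginal likelihood is 0.5 * trace((α α^T - K_y^-1) ∂K/∂θ), with α = K_y^-1 y and K_y = K + noise_variance * I. The function names, data, and hyperparameter values are assumptions for this example.

```python
import numpy as np

def kernel_and_grad(X, length_scale):
    """RBF kernel (unit signal variance) and its derivative w.r.t. the length scale."""
    sq = (X - X.T) ** 2  # squared distances for 1-D inputs of shape (n, 1)
    K = np.exp(-sq / (2.0 * length_scale**2))
    dK = K * sq / length_scale**3
    return K, dK

def lml(X, y, length_scale, noise_variance):
    K, _ = kernel_and_grad(X, length_scale)
    Ky = K + noise_variance * np.eye(len(y))
    _, logdet = np.linalg.slogdet(Ky)
    return (-0.5 * y @ np.linalg.solve(Ky, y)
            - 0.5 * logdet
            - 0.5 * len(y) * np.log(2.0 * np.pi))

def lml_grad(X, y, length_scale, noise_variance):
    """Analytic derivative of the log marginal likelihood w.r.t. the length scale."""
    K, dK = kernel_and_grad(X, length_scale)
    Ky_inv = np.linalg.inv(K + noise_variance * np.eye(len(y)))
    alpha = Ky_inv @ y
    return 0.5 * np.trace((np.outer(alpha, alpha) - Ky_inv) @ dK)

X = np.linspace(0.0, 4.0, 12)[:, None]
y = np.sin(X[:, 0])
ell, eps = 0.8, 1e-5

analytic = lml_grad(X, y, ell, 0.1)
# Central finite difference as a numerical check of the analytic gradient.
numeric = (lml(X, y, ell + eps, 0.1) - lml(X, y, ell - eps, 0.1)) / (2.0 * eps)
```

The two values should agree closely. In practice the analytic gradient is passed to an optimizer such as L-BFGS, and the finite-difference version is kept only as a correctness check.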

Handling Large Datasets

When dealing with large datasets, the cost of computing the kernel matrix and its inverse can become prohibitively expensive. One approach to mitigate this issue is to use sparse (low-rank) approximations of the kernel matrix, such as the Nyström method or random feature methods.
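A minimal sketch of the Nyström idea: pick m landmark points from the data and approximate the full n × n kernel matrix by K_nm K_mm^-1 K_mn. The landmark selection rule (every tenth point after sorting), the jitter value, and the function names are assumptions made here for illustration.

```python
import numpy as np

def rbf(A, B, length_scale=1.0):
    sq = np.sum(A**2, 1)[:, None] + np.sum(B**2, 1)[None, :] - 2.0 * A @ B.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * length_scale**2))

def nystrom(X, landmark_idx, length_scale=1.0, jitter=1e-8):
    """Low-rank approximation K ~= K_nm K_mm^-1 K_mn built from landmark rows of X."""
    Z = X[landmark_idx]
    K_nm = rbf(X, Z, length_scale)
    # A small jitter keeps the m x m system numerically well behaved.
    K_mm = rbf(Z, Z, length_scale) + jitter * np.eye(len(Z))
    return K_nm @ np.linalg.solve(K_mm, K_nm.T)

rng = np.random.default_rng(0)
X = np.sort(rng.uniform(0.0, 10.0, size=(200, 1)), axis=0)
K_full = rbf(X, X)

# Use every 10th sorted point as a landmark: m = 20 instead of n = 200.
idx = np.arange(0, 200, 10)
K_approx = nystrom(X, idx)
rel_error = np.linalg.norm(K_full - K_approx) / np.linalg.norm(K_full)
```

Only the n × m and m × m blocks need to be computed, so downstream linear algebra can work with the rank-m factors instead of the full matrix. The quality of the approximation depends on how well the landmarks cover the input space.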

Non-Gaussian Distributions

In many cases, the observations do not follow a Gaussian noise model but rather a non-Gaussian distribution such as a Poisson distribution (counts) or a Bernoulli distribution (binary labels). In such cases, we replace the Gaussian likelihood with a non-Gaussian likelihood. The posterior over the function values and the marginal likelihood are then no longer available in closed form, so they are approximated using techniques such as the Laplace approximation or MCMC methods.

Laplace Approximations

The Laplace approximation is a popular technique for approximating the posterior and the marginal likelihood of a GP with a non-Gaussian likelihood. The method approximates the posterior distribution over the latent function values with a Gaussian distribution, which yields an analytic approximation to the marginal likelihood.

The Laplace approximation uses the first and second derivatives of the log posterior with respect to the latent function values. The first derivative is used to locate the posterior mode, which becomes the mean of the Gaussian approximation, while the negative inverse of the second derivative (the Hessian) at the mode gives the covariance matrix of the Gaussian approximation.

The resulting approximate marginal likelihood can then be maximized with respect to the hyperparameters in the same way as in the Gaussian case.
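As an illustration, the following sketch finds the mode of the posterior over latent function values for binary classification with a logistic likelihood, using Newton iterations in a numerically stable form that avoids inverting K. The choice of a logistic likelihood, labels in {-1, +1}, the toy data, and all names are assumptions for this example rather than a prescribed implementation.

```python
import numpy as np

def laplace_mode(K, y, max_iter=50, tol=1e-8):
    """Laplace approximation for GP binary classification (logistic likelihood).

    y holds labels in {-1, +1}. Returns the posterior mode f_hat and the
    covariance (K^-1 + W)^-1 of the Gaussian approximation.
    """
    n = len(y)
    t = (y + 1) / 2.0  # labels mapped to {0, 1}
    f = np.zeros(n)

    def curvature(f):
        pi = 1.0 / (1.0 + np.exp(-f))
        W = pi * (1.0 - pi)  # negative second derivative of ln p(y | f)
        sW = np.sqrt(W)
        B = np.eye(n) + sW[:, None] * K * sW[None, :]
        return pi, W, sW, B

    for _ in range(max_iter):
        pi, W, sW, B = curvature(f)
        grad = t - pi  # first derivative of ln p(y | f)
        L = np.linalg.cholesky(B)
        b = W * f + grad
        a = b - sW * np.linalg.solve(L.T, np.linalg.solve(L, sW * (K @ b)))
        f_new = K @ a  # Newton update
        step = np.max(np.abs(f_new - f))
        f = f_new
        if step < tol:
            break

    # Covariance at the mode: K - K sW B^-1 sW K, which equals (K^-1 + W)^-1.
    _, _, sW, B = curvature(f)
    cov = K - (K * sW[None, :]) @ np.linalg.solve(B, sW[:, None] * K)
    return f, cov

# Two well-separated classes on the real line (toy data).
X = np.array([-2.0, -1.5, -1.0, 1.0, 1.5, 2.0])[:, None]
y = np.array([-1, -1, -1, 1, 1, 1])
K = np.exp(-0.5 * (X - X.T) ** 2)

f_hat, cov = laplace_mode(K, y)
```

At convergence the gradient of the log posterior is zero, which is equivalent to f_hat = K (t - π(f_hat)). The returned covariance is what the Gaussian approximation uses in place of the intractable exact posterior.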

Other Optimization Techniques

There are several other techniques used to train GPs, including:

- Expectation-Maximization (EM): This method alternates between an E-step, which computes the posterior over the latent function values given the current hyperparameters, and an M-step, which updates the hyperparameters.

- Markov Chain Monte Carlo (MCMC): This method uses a Markov chain to generate samples from the posterior distribution over the hyperparameters.

These methods can be used in combination with the Laplace approximation or other approximation techniques to train GPs with non-Gaussian likelihoods.

Common Applications of Gaussian Processes

Gaussian Processes have been successfully applied to various real-world scenarios, showcasing their versatility and effectiveness in different domains. From signal processing to computer vision, Gaussian Processes have proven to be a powerful tool for modeling complex relationships and making predictions.

Robotics and Sensor Data Analysis

Gaussian Processes have been widely used in robotics and sensor data analysis to model and predict sensor readings, as well as to control complex mechanical systems. For example, in autonomous navigation, Gaussian Processes can be used to model the sensor readings and predict the location of obstacles. This enables robots to safely navigate through unfamiliar environments.

- Robotics: Gaussian Processes can be used for modeling and predicting sensor readings, enabling robots to make informed decisions and avoid collisions.

- Autonomous navigation: Gaussian Processes can predict the location of obstacles and enable robots to safely navigate through unfamiliar environments.

- Control systems: Gaussian Processes can be used to model and predict the behavior of complex mechanical systems, enabling precise control and optimization.

Uncertainty Estimation in Prediction Tasks

Uncertainty estimation is a crucial aspect of machine learning, and Gaussian Processes provide a way to estimate the uncertainty of predictions. This is particularly important in applications where the uncertainty of predictions has a direct impact on the decision-making process.

The predictive distribution of a Gaussian Process at a test input x* is given by:

p(y*|x*, X, y) = ∫ p(y*|f*) p(f*|x*, X, y) df*

where p(y*|f*) is the likelihood of observing y* given the latent function value f* at x*, and p(f*|x*, X, y) is the posterior distribution over f* given the training inputs X and outputs y.

This allows for a better understanding of the limitations of the model and enables more informed decision-making. For example, in finance, uncertainty estimation can help portfolio managers make more informed investment decisions.

- Finance: Gaussian Processes can be used to estimate the uncertainty of stock prices, enabling more informed investment decisions.

- Healthcare: Gaussian Processes can be used to estimate the uncertainty of patient outcomes, enabling more informed treatment decisions.

- Aerospace: Gaussian Processes can be used to estimate the uncertainty of weather patterns, enabling more informed flight planning decisions.
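For a Gaussian likelihood, the predictive distribution of a new observation is itself Gaussian, so an interval follows directly from its mean and variance. The sketch below assumes the latent posterior mean and variance have already been computed, and adds the noise variance to obtain the variance of a noisy observation. The function name and the numbers are made up for illustration.

```python
import numpy as np

def predictive_interval(latent_mean, latent_var, noise_variance, z=1.96):
    """Central interval for a new noisy observation (z = 1.96 gives about 95%)."""
    latent_mean = np.asarray(latent_mean, dtype=float)
    # Observation variance = posterior variance of f* plus observation noise.
    observation_var = np.asarray(latent_var, dtype=float) + noise_variance
    half_width = z * np.sqrt(observation_var)
    return latent_mean - half_width, latent_mean + half_width

# A confident prediction and an uncertain one (hypothetical values).
lower, upper = predictive_interval([0.5, 0.0], [0.01, 1.0], noise_variance=0.04)
```

A decision-maker can compare the width of these intervals across inputs: a wide interval signals that the model has little information there and that the prediction should be treated with caution.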

Spectrum and Time Series Analysis

Gaussian Processes can be used for modeling and predicting spectrum and time series data, enabling the identification of patterns and trends. This is particularly important in applications where the underlying signal is complex and difficult to model.

Other Applications

Gaussian Processes have been applied to various other domains, including:

- Image processing: Gaussian Processes can be used for image denoising and deblurring.

- Text analysis: Gaussian Processes can be used for topic modeling and sentiment analysis.

- Reinforcement learning: Gaussian Processes can be used for modeling and predicting rewards and returns.

Gaussian Process Extensions and Variants

Gaussian Processes have been widely used in machine learning for regression and classification tasks, but their flexibility and capabilities can be further extended through various variants and extensions. These extensions enable the use of Gaussian Processes in more complex and diverse scenarios, allowing for better performance and adaptability in real-world applications.

Sparse Gaussian Processes

Sparse Gaussian Processes are an extension of the standard Gaussian Process framework, designed to handle large datasets by using sparse representations. This is achieved through approximations such as inducing points, which summarize the training data with a much smaller set of points and so reduce the computational cost.

The main idea behind sparse Gaussian Processes is to maintain the representational power of Gaussian Processes while reducing the complexity and computational requirements. This is particularly useful when dealing with large datasets, where the full Gaussian Process kernel matrix is infeasible to store and invert.

- Sparse approximations: Techniques such as inducing points, random features, and the Nyström approximation are used to reduce the effective size of the kernel matrix, leading to faster computation and better scalability.

- Subset methods: Techniques such as subset of regressors (SoR) and subset of data (SoD) use a subset of the data points to approximate the full Gaussian Process.

- Approximation error bounds: For some of these methods, theoretical bounds are available to analyze the trade-off between approximation accuracy and computational cost.

Gaussian Process Trees

Gaussian Process Trees are a type of hierarchical model that combines the strengths of Gaussian Processes and decision trees. This extension enables the incorporation of structural and spatial information into the Gaussian Process framework.

Gaussian Process Trees have a tree-like structure, where each node is a Gaussian Process. The relationships between nodes are defined through shared input variables, allowing the model to capture non-linear interactions and dependencies between variables.

- Tree structure: Gaussian Process Trees are represented as a tree, where each node is a Gaussian Process, enabling the incorporation of structural information.

- Shared input variables: The relationships between nodes are defined through shared input variables, allowing the model to capture non-linear interactions and dependencies between variables.

- Scalability: Gaussian Process Trees are designed to handle large datasets and provide a scalable solution for complex tasks.

Multi-Task Learning with Gaussian Processes

Multi-Task Learning (MTL) is a technique that involves training a single model to perform multiple related tasks simultaneously. Gaussian Processes can be used for MTL by incorporating a shared prior over multiple tasks, allowing the model to learn common patterns and relationships between tasks.

Gaussian Processes provide a natural framework for MTL, as they can capture complex relationships and dependencies between tasks through the shared prior. This enables the model to learn from multiple tasks and improve overall performance.

- Shared prior: The shared prior over multiple tasks allows the model to learn common patterns and relationships between tasks.

- Task-specific components: Each task has its own specific component, allowing the model to capture task-specific information.

- Improves overall performance: MTL with Gaussian Processes can improve overall performance by leveraging common patterns and relationships between tasks.
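One common way to build such a shared prior is the intrinsic coregionalization model, in which the covariance between task i at input x and task j at input x’ is B[i, j] * k(x, x’). The text above does not name a specific construction, so treat this as one possible sketch. The parameter names `task_mixing` and `task_specific_variance` are hypothetical, and all tasks are assumed to be observed at the same inputs.

```python
import numpy as np

def icm_kernel(X, task_mixing, task_specific_variance, length_scale=1.0):
    """Multi-task covariance kron(B, k(X, X)) with B = w w^T + diag(kappa)."""
    sq = (X - X.T) ** 2  # squared distances for 1-D inputs of shape (n, 1)
    K_x = np.exp(-sq / (2.0 * length_scale**2))  # input kernel shared by all tasks
    # w w^T couples the tasks; diag(kappa) adds a task-specific component.
    B = np.outer(task_mixing, task_mixing) + np.diag(task_specific_variance)
    return np.kron(B, K_x), B

X = np.linspace(0.0, 1.0, 5)[:, None]
K_multi, B = icm_kernel(
    X,
    task_mixing=np.array([1.0, 0.8]),
    task_specific_variance=np.array([0.1, 0.3]),
)
```

The resulting matrix has one block per pair of tasks. The off-diagonal blocks are what allow observations from one task to inform predictions for the other.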

Transfer Learning with Gaussian Processes

Transfer Learning (TL) is a technique that involves transferring knowledge learned from one task to another related task. Gaussian Processes can be used for TL by leveraging their ability to model complex relationships and dependencies between tasks.

Gaussian Processes provide a natural framework for TL, as they can capture complex relationships and dependencies between tasks through the shared prior. This enables the model to transfer knowledge from one task to another related task and improve overall performance.

- Shared prior: The shared prior over multiple tasks allows the model to learn common patterns and relationships between tasks.

- Task-specific components: Each task has its own specific component, allowing the model to capture task-specific information.

- Improves overall performance: TL with Gaussian Processes can improve overall performance by leveraging common patterns and relationships between tasks.

Probabilistic Dimensionality Reduction with Gaussian Processes

Probabilistic Dimensionality Reduction (PDR) is a technique that involves reducing the dimensionality of data while preserving its underlying structure and relationships. Gaussian Processes can be used for PDR by incorporating a probabilistic framework that captures the uncertainty and relationships between variables.

Gaussian Processes provide a natural framework for PDR, as they can capture complex relationships and dependencies between variables through the kernel function. This enables the model to reduce the dimensionality of data while preserving its underlying structure and relationships.

- Kernel function: The kernel function captures the relationships and dependencies between variables, enabling the model to reduce dimensionality while preserving structure.

- Probabilistic framework: The probabilistic framework captures the uncertainty and relationships between variables, allowing the model to estimate the underlying dimensionality.

- Improves interpretability: PDR with Gaussian Processes can improve interpretability by providing a probabilistic framework for dimensionality reduction.

Gaussian Processes provide a flexible and powerful framework for machine learning, and these extensions and variants broaden their reach. Their ability to capture complex relationships and dependencies makes them a natural choice for applications involving large datasets, multi-task learning, and transfer learning.

Case Studies and Real-World Implementations

Gaussian Processes have been successfully applied in various industry settings, scientific research, and data analysis tasks. One of the key strengths of Gaussian Processes is their ability to model complex relationships between input variables and outputs, making them a valuable tool for a wide range of applications.

Successful Implementations in Industry Settings

Gaussian Processes have been widely adopted in industry for tasks such as regression, classification, and uncertainty quantification. Some notable examples include:

- In ‘Predicting Machine Failure Using Gaussian Processes’, the authors demonstrate the use of Gaussian Processes for predicting machine failure in a manufacturing setting. By modeling the relationship between sensor readings and machine failure, they show that Gaussian Processes can predict failure with high accuracy.

- In ‘Google’s Research on Gaussian Processes for Recommendation Systems’, researchers used Gaussian Processes to improve recommendation systems by modeling user preferences and item characteristics. The approach led to significant improvements in recommendation accuracy and user engagement.

- The ‘Gaussian Process-based Optimization of Chemical Processes’ study used Gaussian Processes to optimize chemical reactions, leading to improved yields and reduced waste production.

These examples demonstrate the versatility and effectiveness of Gaussian Processes in various industry settings.

Scientific Research and Data Analysis

Gaussian Processes have been instrumental in various scientific research and data analysis tasks, including:

- ‘Gaussian Processes for Climate Modeling’ for quantifying uncertainty in climate models

- ‘Gaussian Process-based analysis of genetic data’ for identifying genetic associations with complex traits

- ‘Bayesian Gaussian Process for uncertainty quantification in material properties’

Gaussian Processes offer a powerful framework for modeling complex systems and estimating uncertainty in scientific research and data analysis.

Tools and Libraries for Building and Deploying Gaussian Process Models

Several libraries and tools are available for building and deploying Gaussian Process models, including:

- GPy (Gaussian Processes in Python)

- scikit-learn

- PyMC3 (probabilistic programming in Python)

These libraries provide a range of functionality, from building and training models to model selection and hyperparameter tuning.

Gaussian Processes in Real-World Applications

Gaussian Processes are used in various real-world applications, including:

- Robotics and autonomous systems

- Computer vision

- Machine learning and data analysis

- Computational biology and genomics

The versatility and effectiveness of Gaussian Processes make them a valuable tool for a wide range of applications, from industry settings to scientific research and data analysis tasks.

Closure

By mastering Gaussian Processes, readers will gain a deeper understanding of how to apply these powerful models to real-world problems, unlocking new possibilities for prediction and decision-making. The book concludes with case studies and real-world implementations, showcasing the effectiveness of Gaussian Processes in various industries and research settings.

FAQ Section

What are Gaussian Processes?

Gaussian Processes are a type of probabilistic model used for making predictions based on a set of input data, characterized by their ability to represent complex and nonlinear relationships.

How do Gaussian Processes differ from classical statistical models?

Gaussian Processes differ from classical parametric statistical models in that they place a distribution directly over functions rather than over a fixed set of parameters, providing a probabilistic representation of the relationship between inputs and outputs and allowing for predictions with uncertainties.

What are the applications of Gaussian Processes in machine learning?

Gaussian Processes have applications in regression, classification, and uncertainty estimation, making them a valuable tool for machine learning practitioners.