Optimization methods for large-scale machine learning matter because, in today's high-stakes data landscape, every fraction of a second counts. Large-scale machine learning models are no exception: their training and deployment can be time-consuming, costly, and prone to overfitting. Understanding and applying the right optimization techniques is therefore crucial for improving performance and reducing computational costs.

This guide is designed for those seeking to streamline their large-scale machine learning workflows. We'll cover the main techniques, discuss their strengths and limitations, and look at real-world applications across various industries.

Introduction to Large-Scale Machine Learning Optimization

In today's world of big data, machine learning models have become increasingly important for making informed decisions in various industries. However, as datasets grow, optimizing these models becomes a daunting task. Large-scale machine learning optimization is a critical challenge that must be addressed to improve model performance and reduce computational costs.

Machine learning models are designed to learn from data and make predictions or decisions based on that data. As the dataset grows, so do the training time and memory required, and models large enough to exploit that data are more expensive still. This makes such models difficult to train and deploy. Moreover, large-scale datasets often contain noisy or irrelevant features, which can further hinder the model's performance.

The importance of optimization in large-scale machine learning is hard to overstate. Efficient optimization algorithms deliver better model performance, reduced computational costs, and faster deployment times. This, in turn, enables organizations to make data-driven decisions quickly and accurately.

Industries Relying Heavily on Large-Scale Machine Learning Models

Various industries rely heavily on large-scale machine learning models to drive their decision-making processes. Some of these industries include:

- Finance: Financial institutions use large-scale machine learning models to predict stock prices, detect credit card fraud, and manage risk.

- Healthcare: Healthcare organizations use large-scale machine learning models to diagnose diseases, personalize treatment plans, and develop new medical treatments.

- E-commerce: Online retailers use large-scale machine learning models to recommend products to customers, detect fraudulent transactions, and optimize supply chains.

- Transportation: Companies use large-scale machine learning models to optimize routes, predict traffic patterns, and improve logistics.

In each of these industries, the value of the models depends on how efficiently they can be trained and served.

The success of large-scale machine learning depends on the ability to develop efficient optimization algorithms that can handle massive datasets and complex models.

Examples of Large-Scale Machine Learning Models

Some notable examples of large-scale machine learning models include:

- Google DeepMind's AlphaGo: A deep learning system that defeated a human world champion at Go, a game whose search space demands an enormous amount of computational power and memory.

- Facebook's DeepFace: A deep learning model designed to recognize faces across varying image quality and lighting conditions.

- Self-driving cars: Companies like Waymo and Tesla use large-scale machine learning models to develop self-driving cars that can recognize obstacles, navigate roads, and make quick decisions.

These examples demonstrate the potential of large-scale machine learning models to transform various industries and improve people's lives.

Common Optimization Techniques in Machine Learning


Optimization is a crucial aspect of machine learning because it is how we find the best possible model parameters for the data we have. In large-scale machine learning, optimization techniques tune the model parameters to achieve the best possible performance. In this section, we'll discuss some common optimization techniques used in machine learning, including gradient descent, stochastic gradient descent, and regularization techniques.

Gradient Descent Algorithm

Gradient descent is a popular optimization algorithm that tunes model parameters by iteratively reducing the loss function. The algorithm takes small steps in the direction of the negative gradient of the loss function, which is the direction of steepest descent. The process involves initializing the model parameters, computing the gradient of the loss, and then updating the parameters using the following formula:

$$\theta \leftarrow \theta - \alpha \, \nabla_\theta L(\theta)$$

where $\theta$ is the vector of model parameters, $\alpha$ is the learning rate, and $\nabla_\theta L(\theta)$ is the gradient of the loss function $L$ with respect to $\theta$.

There are several variants of the gradient descent algorithm, including batch gradient descent, online gradient descent, and mini-batch gradient descent. Batch gradient descent updates the model parameters using the entire training dataset at once, while online gradient descent updates the parameters using individual training samples. Mini-batch gradient descent updates the parameters using small batches of training samples.
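As a minimal sketch of the update rule, the loop below runs plain gradient descent on a small quadratic loss. The loss, the starting point, and the names `gradient_descent` and `quadratic_grad` are illustrative assumptions, not part of any library.

```python
def gradient_descent(grad, theta0, lr=0.1, steps=100):
    """Repeatedly apply theta <- theta - lr * grad(theta)."""
    theta = list(theta0)
    for _ in range(steps):
        g = grad(theta)
        theta = [t - lr * gi for t, gi in zip(theta, g)]
    return theta


def quadratic_grad(theta):
    # Gradient of the hypothetical loss (theta_0 - 3)^2 + (theta_1 + 1)^2,
    # which is minimized at (3, -1).
    return [2 * (theta[0] - 3), 2 * (theta[1] + 1)]


theta_star = gradient_descent(quadratic_grad, [0.0, 0.0])
print(theta_star)  # close to [3, -1]
```

A learning rate that is too large makes the iterates diverge on this loss, while a very small one needs many more steps, which is why the learning rate is usually the first thing to tune.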

Stochastic Gradient Descent

Stochastic gradient descent (SGD) is another popular optimization algorithm used in machine learning. Unlike batch gradient descent, SGD updates the model parameters using individual training samples (or, in practice, small mini-batches) rather than the entire training dataset. Each update is therefore far cheaper, which makes SGD much more efficient on large datasets. However, the gradient estimates are noisy, so the loss fluctuates from step to step and SGD may need more updates, and a decaying learning rate, to settle near the optimum.

In SGD, the model parameters are updated using the following formula:

$$\theta \leftarrow \theta - \alpha \, \nabla_\theta L_i(\theta)$$

where $\theta$ is the vector of model parameters, $\alpha$ is the learning rate, and $\nabla_\theta L_i(\theta)$ is the gradient of the loss on the sampled example (or mini-batch) $i$ with respect to $\theta$.

- SGD is sensitive to the choice of the learning rate $\alpha$: a value that is too large can cause overshooting, while a value that is too small results in slow convergence.

- SGD can be used with any model trained by minimizing a differentiable loss, including linear regression, logistic regression, and neural networks.
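The following sketch applies mini-batch SGD to a one-variable linear regression. The toy data, the function name `sgd_linear_regression`, and all hyperparameter values are assumptions chosen only to keep the example small.

```python
import random


def sgd_linear_regression(xs, ys, lr=0.05, epochs=200, batch_size=4, seed=0):
    """Fit y ~ w * x + b by mini-batch SGD on the mean squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # visit the samples in a new random order each epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # Gradient of the batch MSE with respect to w and b.
            grad_w = sum(2 * (w * xs[i] + b - ys[i]) * xs[i] for i in batch) / len(batch)
            grad_b = sum(2 * (w * xs[i] + b - ys[i]) for i in batch) / len(batch)
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b


# Toy noiseless data generated from y = 2x + 1 (illustrative only).
xs = [i / 10 for i in range(20)]
ys = [2 * x + 1 for x in xs]
w, b = sgd_linear_regression(xs, ys)
print(w, b)  # close to 2 and 1
```

Setting `batch_size=1` gives the online variant, and setting it to `len(xs)` recovers batch gradient descent.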

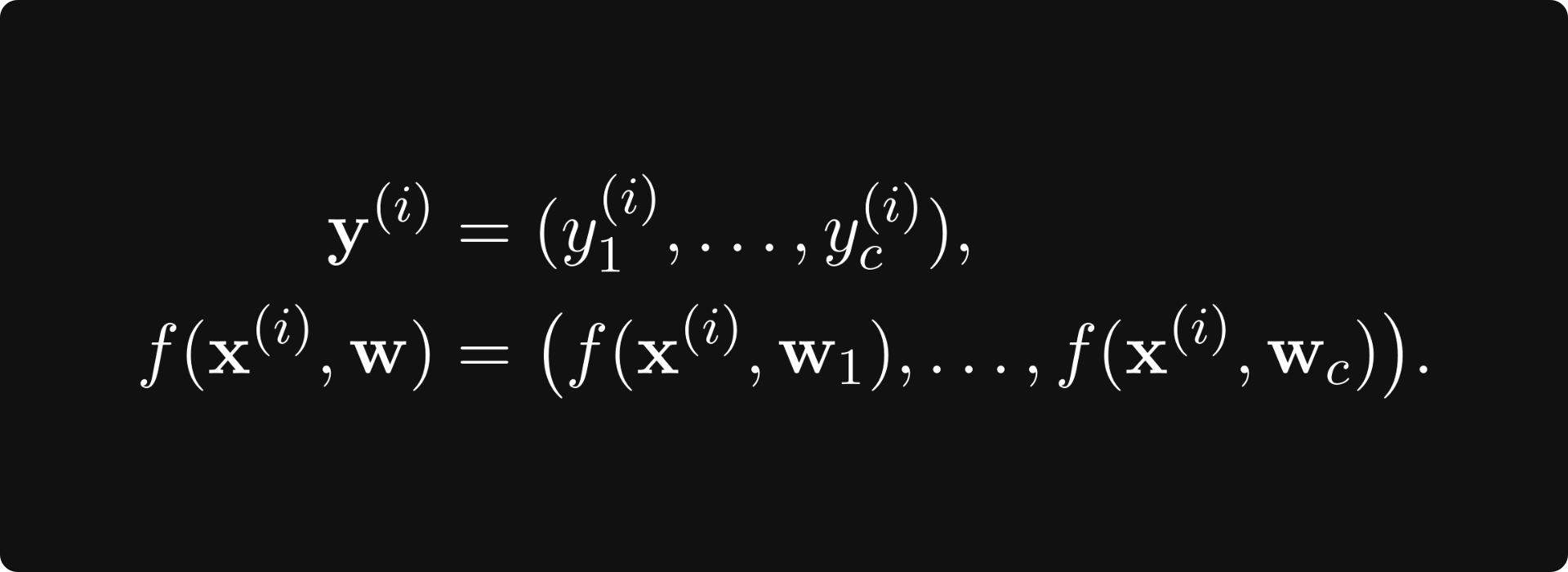

Regularization Methods

Regularization techniques are used to prevent overfitting by adding a penalty term to the loss function. The penalty term grows with the magnitude of the model parameters, so it encourages the model to keep its parameters small. There are several regularization techniques, including L1 regularization, L2 regularization, and elastic net regularization. The formulas below use mean squared error as the base loss.

- L1 regularization, known as Lasso in the regression setting, adds a penalty term proportional to the absolute value of the model parameters:

$$L(\theta) = \frac{1}{n} \sum_{i=1}^{n} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2 + \lambda \sum_j |\theta_j|$$

- L2 regularization, known as Ridge in the regression setting, adds a penalty term proportional to the square of the model parameters:

$$L(\theta) = \frac{1}{n} \sum_{i=1}^{n} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2 + \lambda \sum_j \theta_j^2$$

- Elastic net regularization combines L1 and L2 regularization, adding a penalty term proportional to both the absolute value and the square of the model parameters:

$$L(\theta) = \frac{1}{n} \sum_{i=1}^{n} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2 + \lambda_1 \sum_j |\theta_j| + \lambda_2 \sum_j \theta_j^2$$
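The three penalties are small enough to write out directly. This is a sketch with hypothetical helper names (`l1_penalty`, `l2_penalty`, `elastic_net_penalty`, `regularized_mse`) and made-up parameter values.

```python
def l1_penalty(theta, lam):
    """lam times the sum of absolute parameter values (Lasso-style)."""
    return lam * sum(abs(t) for t in theta)


def l2_penalty(theta, lam):
    """lam times the sum of squared parameter values (Ridge-style)."""
    return lam * sum(t * t for t in theta)


def elastic_net_penalty(theta, lam1, lam2):
    """Weighted combination of the L1 and L2 penalties."""
    return l1_penalty(theta, lam1) + l2_penalty(theta, lam2)


def regularized_mse(preds, targets, theta, lam1=0.0, lam2=0.0):
    """Mean squared error plus an elastic net penalty on the parameters."""
    mse = sum((p - y) ** 2 for p, y in zip(preds, targets)) / len(targets)
    return mse + elastic_net_penalty(theta, lam1, lam2)


theta = [0.5, -2.0, 0.0]  # illustrative parameter vector
print(l1_penalty(theta, 0.1), l2_penalty(theta, 0.1))
```

Setting `lam2=0` recovers the L1-regularized loss, and `lam1=0` the L2-regularized loss.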

Comparison of Different Optimization Algorithms

The choice of optimization algorithm depends on the specific problem and the characteristics of the data. In general, SGD has a much lower cost per update than batch gradient descent, while L2 regularization tends to give smoother, more stable solutions than L1 regularization.

- On large datasets, SGD usually makes rapid early progress, although its noisy updates keep it hovering around the optimum unless the learning rate is decayed.

- Batch gradient descent computes the exact gradient, so its updates are not noisy, but each update is slower and more computationally expensive than an SGD step.

- L1 regularization is more effective when a sparse model is wanted, because it drives many parameters to exactly zero, while L2 regularization is more effective when most features contribute to the prediction.

Gradient-Based Optimization Methods

Gradient-based optimization methods are a family of algorithms used to train machine learning models by minimizing the loss function. These methods are widely used in large-scale machine learning problems due to their efficiency and effectiveness. In this section, we'll discuss the concept of gradient descent and its applications in machine learning, as well as some of the variants of gradient descent that are commonly used in practice.

The Concept of Gradient Descent

Gradient descent is a first-order optimization algorithm that minimizes the loss function by iteratively updating the model parameters in the direction of the negative gradient of the loss function with respect to the parameters. The update rule is:

$$\theta \leftarrow \theta - \alpha \, \nabla_\theta L(\theta)$$

where $\theta$ represents the model parameters, $\alpha$ is the learning rate, and $\nabla_\theta L(\theta)$ is the vector of partial derivatives of the loss function $L$ with respect to the model parameters.

Gradient descent can be used to train a wide range of machine learning models, including linear regression, logistic regression, neural networks, and others. In addition, gradient descent is often combined with extensions such as momentum and Nesterov acceleration to improve its performance.

Gradient Descent with Momentum

Gradient descent with momentum is a variant of gradient descent that adds a fraction of the previous update to the current one. This damps oscillations across steep directions, speeds up progress along shallow ones, and can carry the iterate through small bumps and plateaus. The update rule for gradient descent with momentum is given by:

$$\theta_{\text{new}} = \theta - \alpha \, \nabla_\theta L(\theta) + m \, (\theta - \theta_{\text{prev}})$$

where $\alpha$ is the learning rate, $m$ is the momentum coefficient (commonly around 0.9), and $\theta_{\text{prev}}$ represents the model parameters at the previous iteration, so that $\theta - \theta_{\text{prev}}$ is the previous update.

Gradient descent with momentum is widely used in practice because it usually improves the convergence rate of the algorithm. However, it can overshoot the minimum and oscillate if the learning rate or the momentum coefficient is too high.
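A common way to implement momentum is to keep a velocity vector that stores the previous update. The sketch below does this for an ill-conditioned quadratic. The loss, the function names, and the hyperparameter values are illustrative assumptions.

```python
def momentum_descent(grad, theta0, lr=0.05, momentum=0.9, steps=300):
    """Gradient descent where each update reuses a fraction of the previous one."""
    theta = list(theta0)
    velocity = [0.0] * len(theta)  # previous update, initially zero
    for _ in range(steps):
        g = grad(theta)
        velocity = [momentum * v - lr * gi for v, gi in zip(velocity, g)]
        theta = [t + v for t, v in zip(theta, velocity)]
    return theta


def ill_conditioned_grad(theta):
    # Gradient of the hypothetical loss 0.5 * (theta_0^2 + 25 * theta_1^2):
    # steep along theta_1, shallow along theta_0, minimized at (0, 0).
    return [theta[0], 25.0 * theta[1]]


theta_m = momentum_descent(ill_conditioned_grad, [5.0, 5.0])
print(theta_m)  # close to [0, 0]
```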

Nesterov Acceleration

Nesterov acceleration is a variant of momentum that evaluates the gradient at a look-ahead point, the position the current momentum is about to carry the parameters to, rather than at the current parameters. The update rule for Nesterov acceleration is given by:

$$v \leftarrow m \, v - \alpha \, \nabla_\theta L(\theta + m \, v), \qquad \theta \leftarrow \theta + v$$

where $v$ is the velocity (the previous update), $m$ is the momentum coefficient, $\alpha$ is the learning rate, and $\nabla_\theta L$ is the gradient of the loss function with respect to the model parameters.

Nesterov acceleration is widely used in practice because the look-ahead gradient corrects the momentum step before it overshoots, which often improves the convergence rate over standard momentum.
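The only change from standard momentum is where the gradient is evaluated. Here is a minimal sketch, assuming a simple bowl-shaped loss and hypothetical function names.

```python
def nesterov_descent(grad, theta0, lr=0.05, momentum=0.9, steps=300):
    """Momentum descent with the gradient taken at the look-ahead point."""
    theta = list(theta0)
    velocity = [0.0] * len(theta)
    for _ in range(steps):
        # Look ahead to where the current velocity would carry the parameters.
        lookahead = [t + momentum * v for t, v in zip(theta, velocity)]
        g = grad(lookahead)
        velocity = [momentum * v - lr * gi for v, gi in zip(velocity, g)]
        theta = [t + v for t, v in zip(theta, velocity)]
    return theta


def bowl_grad(theta):
    # Gradient of the hypothetical loss (theta_0 - 1)^2 + (theta_1 + 2)^2.
    return [2 * (theta[0] - 1.0), 2 * (theta[1] + 2.0)]


theta_n = nesterov_descent(bowl_grad, [0.0, 0.0])
print(theta_n)  # close to [1, -2]
```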

RMSProp

RMSProp is a variant of gradient descent that adapts the learning rate of each parameter by dividing the gradient by a running average of its recent magnitude. Writing $g = \nabla_\theta L(\theta)$, the update rule for RMSProp is given by:

$$s \leftarrow \rho \, s + (1 - \rho) \, g^2, \qquad \theta \leftarrow \theta - \alpha \, \frac{g}{\sqrt{s + \epsilon}}$$

where $\alpha$ represents the learning rate, $\rho$ is the decay rate of the running average $s$ of squared gradients (commonly around 0.9), $\epsilon$ represents a small value to prevent division by zero, and all operations are applied element-wise.

RMSProp is widely used in practice because the per-parameter scaling keeps step sizes reasonable even when gradients differ greatly in magnitude across parameters.
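A minimal sketch of RMSProp on a badly scaled quadratic follows. The loss, the function names, and the hyperparameter values are assumptions for illustration. With a constant learning rate the iterates end up within a small neighborhood of the minimum rather than exactly on it.

```python
import math


def rmsprop(grad, theta0, lr=0.01, decay=0.9, eps=1e-8, steps=2000):
    """Scale each gradient component by a running RMS of its recent values."""
    theta = list(theta0)
    sq_avg = [0.0] * len(theta)  # running average of squared gradients
    for _ in range(steps):
        g = grad(theta)
        sq_avg = [decay * s + (1 - decay) * gi * gi for s, gi in zip(sq_avg, g)]
        theta = [t - lr * gi / math.sqrt(s + eps)
                 for t, gi, s in zip(theta, g, sq_avg)]
    return theta


def badly_scaled_grad(theta):
    # Gradient of the hypothetical loss 0.5 * (theta_0^2 + 100 * theta_1^2).
    return [theta[0], 100.0 * theta[1]]


theta_r = rmsprop(badly_scaled_grad, [1.0, 1.0])
print(theta_r)  # both components end up near 0
```

Both coordinates move at a similar pace even though one gradient component is 100 times larger than the other.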

Adam

Adam (adaptive moment estimation) combines a momentum-style running average of the gradient with RMSProp-style scaling by a running average of the squared gradient. Writing $g = \nabla_\theta L(\theta)$ and $t$ for the iteration number, the update rule for Adam is given by:

$$m \leftarrow \beta_1 m + (1 - \beta_1) \, g, \qquad v \leftarrow \beta_2 v + (1 - \beta_2) \, g^2$$

$$\hat{m} = \frac{m}{1 - \beta_1^t}, \qquad \hat{v} = \frac{v}{1 - \beta_2^t}, \qquad \theta \leftarrow \theta - \alpha \, \frac{\hat{m}}{\sqrt{\hat{v}} + \epsilon}$$

where $\alpha$ represents the learning rate, $\beta_1$ and $\beta_2$ are the decay rates of the two running averages (commonly 0.9 and 0.999), $\epsilon$ represents a small value to prevent division by zero, and $\hat{m}$ and $\hat{v}$ are bias-corrected estimates that compensate for initializing $m$ and $v$ at zero.

Adam is widely used in practice because it often converges quickly with little tuning of the learning rate.
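The sketch below writes the Adam update out in plain Python for a small quadratic loss. The loss, the function names, and the learning rate are illustrative assumptions. The `beta1`, `beta2`, and `eps` defaults are the commonly quoted values.

```python
import math


def adam(grad, theta0, lr=0.05, beta1=0.9, beta2=0.999, eps=1e-8, steps=2000):
    """Adam: momentum on the gradient plus per-parameter step scaling."""
    theta = list(theta0)
    m = [0.0] * len(theta)  # running average of gradients
    v = [0.0] * len(theta)  # running average of squared gradients
    for t in range(1, steps + 1):
        g = grad(theta)
        m = [beta1 * mi + (1 - beta1) * gi for mi, gi in zip(m, g)]
        v = [beta2 * vi + (1 - beta2) * gi * gi for vi, gi in zip(v, g)]
        # Bias correction for the zero initialization of m and v.
        m_hat = [mi / (1 - beta1 ** t) for mi in m]
        v_hat = [vi / (1 - beta2 ** t) for vi in v]
        theta = [th - lr * mh / (math.sqrt(vh) + eps)
                 for th, mh, vh in zip(theta, m_hat, v_hat)]
    return theta


def shifted_bowl_grad(theta):
    # Gradient of the hypothetical loss (theta_0 - 3)^2 + (theta_1 + 1)^2.
    return [2 * (theta[0] - 3.0), 2 * (theta[1] + 1.0)]


theta_a = adam(shifted_bowl_grad, [0.0, 0.0])
print(theta_a)  # close to [3, -1]
```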

Non-Gradient Optimization Strategies

Plain gradient descent is not always the best tool: it can converge slowly on badly conditioned problems, and sometimes gradients are expensive or unavailable. This section looks first at second-order and quasi-Newton methods, which use curvature information in addition to the gradient, and then at methods that optimize model parameters without using gradients at all.

First-Order and Second-Order Optimization Methods

First-order optimization methods update model parameters using only the gradient of the objective function at the current point. Examples of first-order methods include gradient descent and its variants. In contrast, second-order methods use both the first and second derivatives of the objective function to update model parameters. Second-order methods like Newton's method are more computationally expensive per iteration but can converge in far fewer iterations than first-order methods. However, second-order methods can be difficult to apply in practice because computing and inverting the Hessian matrix is costly when the number of parameters is large.

Quasi-Newton Methods

Quasi-Newton methods approximate the Hessian matrix using only gradient information, through a matrix that is updated at every iteration. BFGS and L-BFGS are popular quasi-Newton methods that have been widely used in various machine learning applications. Because the Hessian approximation is refreshed at each iteration, these methods adapt to the changing landscape of the objective function.

- BFGS (Broyden-Fletcher-Goldfarb-Shanno): The BFGS algorithm updates the Hessian approximation $B_k$ using the formula

$$B_{k+1} = B_k + \frac{y_k y_k^{T}}{y_k^{T} s_k} - \frac{B_k s_k s_k^{T} B_k}{s_k^{T} B_k s_k}$$

where $s_k = x_{k+1} - x_k$ is the latest step, $y_k = g(x_k + s_k) - g(x_k)$ is the corresponding change in the gradient $g$ of the objective function. The resulting Hessian approximation is then used to compute the next update of the model parameters.

- L-BFGS (Limited-memory BFGS): L-BFGS is a variant of the BFGS algorithm that limits memory usage by storing only the most recent $(s_k, y_k)$ pairs instead of the full matrix. This makes L-BFGS more suitable for large-scale optimization problems. It follows the same update idea as BFGS, with an additional history-size parameter that controls how many pairs are kept.

Non-Gradient Optimization Strategies

Non-gradient optimization methods do not rely on the gradient of the objective function to update model parameters. Instead, they use alternative strategies such as random search, simulated annealing, and genetic algorithms. These methods can be useful when the objective function has a complex landscape or when gradient information is not available.

- Simulated annealing: Simulated annealing uses a temperature schedule to control the probability of accepting new solutions. The algorithm starts with an initial solution and iteratively applies a perturbation to generate a new candidate. A candidate with a lower objective value is always accepted, and a worse candidate is accepted with a probability that shrinks as the temperature falls, which lets the search escape local minima early on. The temperature is decreased after each iteration.

- Genetic algorithms: Genetic algorithms use principles of natural selection and genetics to search for the optimal solution. The algorithm starts with an initial population of solutions and iteratively applies selection, crossover, and mutation operators to generate new solutions.
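To make the simulated annealing loop concrete, here is a minimal one-dimensional sketch. The objective `bumpy`, the geometric cooling schedule, and all parameter values are assumptions for illustration. The acceptance rule is the standard Metropolis criterion.

```python
import math
import random


def simulated_annealing(objective, x0, temp=1.0, cooling=0.99,
                        steps=2000, step_size=0.5, seed=0):
    """Minimize a 1-D objective by random perturbation with a cooling temperature."""
    rng = random.Random(seed)
    current, current_val = x0, objective(x0)
    best, best_val = current, current_val
    for _ in range(steps):
        candidate = current + rng.uniform(-step_size, step_size)
        cand_val = objective(candidate)
        delta = cand_val - current_val
        # Always accept improvements; accept a worse candidate with
        # probability exp(-delta / temp), which shrinks as temp falls.
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            current, current_val = candidate, cand_val
            if current_val < best_val:
                best, best_val = current, current_val
        temp *= cooling  # geometric cooling schedule
    return best, best_val


def bumpy(x):
    # Hypothetical objective with several local minima.
    return x * x + 2.0 * math.sin(5.0 * x)


best_x, best_val = simulated_annealing(bumpy, x0=4.0)
print(best_x, best_val)
```

The function returns the best point seen, not the final one, because late accepted moves can still be slightly worse than an earlier candidate.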

Regularization Techniques for Optimization

Regularization techniques are an essential part of machine learning optimization. They help prevent overfitting by constraining the model or the training process, thereby reducing the model's effective complexity. In this section, we'll discuss some of the most popular regularization techniques, including L1 and L2 norm regularization, dropout regularization, early stopping, and data augmentation.

L1 and L2 Norm Regularization

L1 and L2 norm regularization are two types of regularization techniques that add a penalty term to the loss function to reduce model complexity. The main difference between the two lies in the way they weight the model's parameters.

L1 norm regularization, also known as Lasso in the regression setting, adds a penalty term to the loss function equal to the sum of the absolute values of the model's parameters, scaled by a regularization strength. The L1 penalty term is given by the equation:

$$\Omega(\beta) = \sum_{i=1}^{n} |\beta_i|$$

where $\beta_i$ are the model's parameters.

L2 norm regularization, on the other hand, adds a penalty term to the loss function equal to the sum of the squares of the model's parameters, again scaled by a regularization strength. The L2 penalty term is given by the equation:

$$\Omega(\beta) = \sum_{i=1}^{n} \beta_i^2$$

where $\beta_i$ are the model's parameters.

Dropout Regularization

Dropout is a regularization technique that randomly drops units (sets their outputs to zero) during training. This prevents the model from relying too heavily on any single unit, thereby reducing overfitting. During training, each unit is dropped independently with a fixed probability, commonly 50% for hidden layers, on every forward pass. At a 50% rate, roughly half of the units are active for any given training example. At inference time all units are used, with activations scaled so that their expected value matches what the next layer saw during training. By dropping units, the model learns to use the remaining units in different combinations, which makes it more robust to overfitting.
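The sketch below shows the widely used "inverted dropout" variant, in which surviving activations are scaled up during training so that nothing needs to change at inference time. The function name `dropout` and the example values are illustrative assumptions.

```python
import random


def dropout(activations, rate=0.5, training=True, rng=random):
    """Inverted dropout: zero each unit with probability `rate` while training."""
    if not training or rate == 0.0:
        return list(activations)  # inference: every unit is used unchanged
    keep_prob = 1.0 - rate
    # Scale the surviving units by 1 / keep_prob so the expected value
    # of each activation is the same as without dropout.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


rng = random.Random(0)
hidden = [1.0] * 8  # illustrative activations of one hidden layer
train_out = dropout(hidden, rate=0.5, training=True, rng=rng)
eval_out = dropout(hidden, rate=0.5, training=False)
print(train_out)  # a mix of 0.0 and 2.0
print(eval_out)   # unchanged
```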

Early Stopping

Early stopping is a regularization technique that stops training when the model's performance on a validation set starts to degrade. This prevents the model from overfitting to the training data and helps it generalize well to unseen data.

Early stopping works by monitoring the model's performance on a validation set during training. Because validation performance fluctuates, training is usually stopped only after it has failed to improve for a set number of epochs (the patience), and the checkpoint with the best validation performance is kept for testing.
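A patience-based stopping rule can be sketched over a list of per-epoch validation losses. The helper name `early_stopping_epoch` and the loss history are hypothetical. In a real training loop the losses would arrive one epoch at a time.

```python
def early_stopping_epoch(val_losses, patience=3):
    """Return (best_epoch, best_loss), stopping after `patience` epochs without improvement."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # stop training; later epochs are never run
    return best_epoch, best_loss


# Hypothetical validation losses, one per epoch.
history = [0.90, 0.70, 0.55, 0.50, 0.52, 0.51, 0.53, 0.40]
best_epoch, best_loss = early_stopping_epoch(history, patience=3)
print(best_epoch, best_loss)  # 3 0.5
```

With a patience of 3, training stops after epoch 6 and never reaches the lower loss at epoch 7, which illustrates the trade-off in choosing the patience value.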

Data Augmentation

Data augmentation is a regularization technique that artificially increases the size of the training data by applying random transformations to existing data points. These can include rotations, scaling, flipping, and other transformations.

Data augmentation helps to prevent overfitting by training the model on a wider variety of data points, which forces it to learn a more general representation of the data. For example, if we are training a model on images of handwritten digits, we can apply small random rotations and scaling to the images to create new training samples.

Data augmentation has been shown to improve the performance of models in a variety of tasks, including image classification, object detection, and segmentation.

To reduce overfitting on image data, we can apply the following data augmentation techniques, provided the transformation does not change the correct label:

- Rotation: Randomly rotate the image by 10 to 20 degrees.

- Scaling: Randomly scale the image by 50% to 150%.

- Flipping: Randomly flip the image horizontally or vertically.

- Color jittering: Randomly adjust the brightness, saturation, and contrast of the image.
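Two of these transformations, flipping and brightness adjustment, can be sketched on a grayscale image stored as nested lists. The helper names, the tiny image, and the jitter range are illustrative assumptions. Real pipelines typically use an image library instead.

```python
import random


def horizontal_flip(image):
    """Mirror each row of a 2-D grayscale image left to right."""
    return [row[::-1] for row in image]


def adjust_brightness(image, factor):
    """Multiply every pixel by `factor`, clamping to the 0..255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in image]


def augment(image, rng):
    """Apply a random flip and a random brightness jitter."""
    out = image
    if rng.random() < 0.5:
        out = horizontal_flip(out)
    factor = rng.uniform(0.8, 1.2)  # assumed jitter range
    return adjust_brightness(out, factor)


image = [[0, 50, 100], [150, 200, 250]]  # tiny illustrative image
rng = random.Random(0)
augmented = augment(image, rng)
print(augmented)
```

Each call to `augment` produces a slightly different version of the same image, so the model rarely sees exactly the same input twice.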

Hyperparameter Optimization

Hyperparameter optimization is a crucial step in machine learning that can significantly affect the performance of a model. It involves adjusting settings that are not learned from the data, such as the learning rate, regularization strength, or number of hidden layers, to optimize performance on a given task. Hyperparameter optimization is essential because a model can be highly sensitive to the choice of hyperparameters, and even small changes to them can significantly improve or degrade performance. In this subsection, we'll discuss the methods used for hyperparameter optimization, including grid search and random search.

Grid Search vs Random Search

Grid search and random search are two popular methods used for hyperparameter optimization. Grid search systematically evaluates the model at every combination in a predefined grid of hyperparameter values and selects the combination that gives the best performance. However, grid search can be computationally expensive, because the number of combinations grows multiplicatively with the number of hyperparameters. Random search, on the other hand, randomly samples hyperparameter values and evaluates the model at each sampled point. Random search often finds good configurations with fewer evaluations, especially when only a few hyperparameters matter, but it gives no guarantee of finding the best one.

- Grid Search

  - Advantages:

    - Evaluates the model at every combination of hyperparameter values in the grid

    - Finds the best combination on the grid, which is practical when the search space is small

  - Disadvantages:

    - Can be computationally expensive for large search spaces

    - Can miss good values that fall between grid points, and can overfit to the validation set if many combinations are compared

- Random Search

  - Advantages:

    - More efficient than grid search, especially for large search spaces

    - Can find good solutions with fewer evaluations

  - Disadvantages:

    - May produce suboptimal solutions if too few samples are drawn

    - Requires choosing the number of samples and the sampling distributions, and results vary with the random seed
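The two strategies differ only in how candidate configurations are generated. The sketch below uses a hypothetical `validation_loss` function as a stand-in for training a model and measuring its validation loss. The grid values and sampling ranges are also assumptions.

```python
import itertools
import random


def validation_loss(lr, reg):
    # Hypothetical stand-in for "train a model and return its validation loss".
    return (lr - 0.1) ** 2 + (reg - 0.01) ** 2


def grid_search(lrs, regs):
    """Evaluate every combination on the grid and return the best one."""
    return min(itertools.product(lrs, regs),
               key=lambda cfg: validation_loss(*cfg))


def random_search(n_trials, seed=0):
    """Sample configurations log-uniformly between 1e-4 and 1 and return the best one."""
    rng = random.Random(seed)
    trials = [(10 ** rng.uniform(-4, 0), 10 ** rng.uniform(-4, 0))
              for _ in range(n_trials)]
    return min(trials, key=lambda cfg: validation_loss(*cfg))


best_grid = grid_search([0.001, 0.01, 0.1, 1.0], [0.001, 0.01, 0.1, 1.0])
best_random = random_search(n_trials=16)
print(best_grid)    # (0.1, 0.01)
print(best_random)
```

Both searches use 16 evaluations here. The grid finds the exact optimum only because it happens to lie on a grid point, whereas random search tries 16 distinct values of each hyperparameter.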

Hyperparameter Optimization Frameworks

Several frameworks, such as Hyperopt and Optuna, have been developed to facilitate hyperparameter optimization. These frameworks provide a flexible and efficient way to search over hyperparameter spaces, reducing the need for manual tuning and increasing the chance of finding good solutions.

- Hyperopt

  - Supports several search algorithms, including random search and the Tree-structured Parzen Estimator (TPE)

  - Allows the search process to be customized using Python code

  - For example, Hyperopt provides an `fmin` function that takes an objective function, a search space, a search algorithm, and a maximum number of evaluations as input and returns the best hyperparameters found.

- Optuna

  - Provides a simple and intuitive API for hyperparameter optimization

  - Supports a range of search algorithms, including grid search, random search, and Bayesian optimization

  - For example, Optuna provides a `Study` class that encapsulates the search process, allowing for easy customization and logging of the optimization process.

By choosing the right hyperparameter optimization method and framework, researchers and practitioners can make sure that their models are well tuned for a given task.

Distributed Optimization Strategies


Distributed optimization is a critical approach to large-scale machine learning, enabling the efficient processing of massive datasets by dividing them among multiple computational units. This approach uses parallel processing and distributed computing frameworks to accelerate computation and reduce training time.

Distributed optimization methods rely on the concepts of parallel processing and distributed computing, which are fundamental to tackling the computational challenges of large-scale machine learning. Parallel processing involves dividing tasks among multiple processing units, while distributed computing extends this idea to multiple machines.

Parallel Processing Frameworks

Parallel processing frameworks are essential for distributed optimization, providing a structured way to divide tasks and manage computational resources. Two prominent frameworks used in machine learning are Apache Spark and Hadoop.

Apache Spark is an open-source, in-memory data processing engine that provides high-performance parallel processing. Its scalability and flexibility make it suitable for large-scale machine learning applications, including distributed optimization.

Hadoop is a distributed computing framework that provides a scalable storage and processing architecture for big data. Hadoop's core components, HDFS (Hadoop Distributed File System) and MapReduce, enable the efficient distribution of data and computation among multiple nodes.

Distributed Optimization Algorithms

Distributed optimization algorithms are designed to take advantage of multiple processing units and computational resources. Two notable approaches are distributed gradient descent and MapReduce-style aggregation.

Distributed Gradient Descent

Distributed gradient descent extends the gradient descent algorithm to large-scale machine learning problems. The data is divided among multiple nodes. At each iteration, every node computes the gradient of the loss on its own shard, and the nodes then aggregate (typically average) these gradients to update a shared set of parameters. Repeating this process converges toward the same solution as gradient descent on the full dataset.
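The idea can be simulated on one machine by treating each data shard as a worker. In this sketch the workers run sequentially, and the model (a single weight `w`), the data, and the function names are illustrative assumptions. A real system would compute the shard gradients in parallel.

```python
def shard_gradient(w, shard):
    """Gradient of the mean squared error of y ~ w * x on one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def distributed_gradient_descent(shards, lr=0.05, steps=200):
    """Synchronous data-parallel gradient descent over a list of shards."""
    w = 0.0
    total = sum(len(s) for s in shards)
    for _ in range(steps):
        # Each worker computes a gradient on its own shard.
        local_grads = [shard_gradient(w, s) for s in shards]
        # Aggregate: average the local gradients, weighted by shard size.
        grad = sum(g * len(s) for g, s in zip(local_grads, shards)) / total
        w -= lr * grad
    return w


# Toy data generated from y = 3x, split across three hypothetical workers.
data = [(x, 3.0 * x) for x in [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]]
shards = [data[0:2], data[2:4], data[4:6]]
w = distributed_gradient_descent(shards)
print(w)  # close to 3.0
```

Because the size-weighted average of the shard gradients equals the gradient on the full dataset, each step is identical to a step of ordinary batch gradient descent.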

MapReduce

MapReduce is a programming model for processing large datasets, implemented in Hadoop. It consists of two main phases: the map phase and the reduce phase. In the map phase, the data is divided into smaller chunks and each chunk is processed independently by the map function. In the reduce phase, the outputs of the map phase are aggregated by the reduce function to produce the final result.
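The two phases can be illustrated in plain Python by computing a mean over data that is split into chunks. This is only a single-process sketch of the programming model with hypothetical function names, not Hadoop code.

```python
from functools import reduce


def map_phase(chunk):
    """Emit a partial (sum, count) pair for one chunk of numbers."""
    return (sum(chunk), len(chunk))


def reduce_phase(left, right):
    """Combine two partial (sum, count) pairs into one."""
    return (left[0] + right[0], left[1] + right[1])


chunks = [[1, 2, 3], [4, 5], [6, 7, 8, 9]]      # data split across workers
partials = [map_phase(c) for c in chunks]        # map: independent per chunk
total, count = reduce(reduce_phase, partials)    # reduce: aggregate partials
mean = total / count
print(mean)  # 5.0
```

The map step never needs to see more than one chunk, which is what allows it to run on many machines at once.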

Both distributed gradient descent and MapReduce-style aggregation show how distributed computing frameworks can process massive datasets and accelerate computation for large-scale machine learning problems.

Evaluation Metrics for Optimization

Evaluation metrics play a crucial role in machine learning optimization because they provide a quantitative measure of model performance. These metrics enable practitioners to evaluate the effectiveness of a model, identify areas that need improvement, and make data-driven decisions. In this section, we'll discuss some of the most commonly used evaluation metrics in machine learning optimization.

Accuracy, Precision, Recall, and F1 Score

Accuracy, precision, recall, and F1 score are some of the most widely used evaluation metrics in machine learning. Accuracy measures the proportion of correctly classified instances out of all instances. Precision, on the other hand, measures the proportion of true positives among all predicted positive instances. Recall measures the proportion of true positives among all actual positive instances. The F1 score is the harmonic mean of precision and recall. In terms of true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN):

- Accuracy = (TP + TN) / (TP + TN + FP + FN)

- Precision = TP / (TP + FP)

- Recall = TP / (TP + FN)

- F1 Score = 2 * (Precision * Recall) / (Precision + Recall)

These metrics are used to evaluate the performance of classification models. For instance, consider a classification model that predicts whether a customer will churn or not. The model has 90% accuracy, precision of 0.8, recall of 0.9, and an F1 score of 0.85. This means that the model correctly classifies 90% of instances. Because its precision is lower than its recall, it produces more false positives than false negatives.
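The four formulas translate directly into code. The confusion-matrix counts below are hypothetical, chosen so that they reproduce the churn example's accuracy of 0.9, precision of 0.8, and recall of 0.9.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute accuracy, precision, recall, and F1 from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical counts: 18 false positives versus 8 false negatives.
metrics = classification_metrics(tp=72, tn=162, fp=18, fn=8)
print(metrics)
```

The F1 score comes out at roughly 0.847, which rounds to the 0.85 quoted above.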

AUC-ROC and AUC-PR

AUC-ROC (Area Under the Receiver Operating Characteristic Curve) and AUC-PR (Area Under the Precision-Recall Curve) are used to evaluate classification models across all decision thresholds. AUC-ROC measures the model's ability to distinguish between classes, while AUC-PR focuses on how well the model identifies positive instances, which makes it the more informative metric on imbalanced datasets.

An AUC-ROC above 0.5 means that the model is better than a random classifier.

AUC-PR is particularly useful in binary classification problems where the positive class is rare. For instance, consider a binary classification problem where the positive class (fraudulent transactions) accounts for 1% of all instances. A random classifier scores about 0.5 on AUC-ROC but only about 0.01 on AUC-PR, because its precision equals the share of positives. A model with an AUC-ROC of 0.75 and an AUC-PR of 0.5 is therefore far better than chance at finding fraudulent transactions, even though its AUC-ROC alone looks modest.
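AUC-ROC has a useful interpretation: it is the probability that a randomly chosen positive instance receives a higher score than a randomly chosen negative one. The sketch below computes it that way by comparing all pairs, which is fine for small examples but too slow for large datasets. The function name and the sample scores are illustrative.

```python
def roc_auc(labels, scores):
    """AUC-ROC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    # A correctly ordered pair counts 1, a tie counts 0.5, otherwise 0.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
auc = roc_auc(labels, scores)
print(auc)  # 0.75
```

Three of the four positive-negative pairs are ordered correctly here, giving 0.75.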

Case Study of Large-Scale Machine Learning Optimization

In the world of large-scale machine learning, companies are constantly seeking ways to optimize their models for improved performance, efficiency, and scalability. One company that has implemented large-scale machine learning optimization is Google, particularly in its advertising business. Google's advertising platform relies heavily on machine learning algorithms to predict user behavior, personalize ads, and optimize ad placements.

Google's Optimization Approach

To tackle the complexity of large-scale machine learning, Google uses a combination of gradient-based and non-gradient optimization methods. Specifically, it employs the Stochastic Gradient Descent (SGD) algorithm to optimize its neural network models. SGD is an iterative optimization method that updates the model parameters at each step using a random subset of the training data. This approach allows Google to handle massive datasets and complex models, achieving state-of-the-art performance in advertising auctions.

Regularization Techniques

To prevent overfitting and improve generalization, Google implements various regularization techniques, such as L1 and L2 regularization. L1 regularization adds a penalty proportional to the absolute value of the weights to the cost function, which pushes many weights to exactly zero and yields sparse models. L2 regularization, on the other hand, adds a squared penalty term, which is more effective at shrinking large weights. Google's experience shows that a combination of L1 and L2 regularization leads to improved model performance and robustness.

Hyperparameter Optimization

Hyperparameter optimization is a critical component of model training because it affects the model's performance and generalization. Google uses techniques like Grid Search, Random Search, and Bayesian Optimization to tune hyperparameters. Grid Search evaluates a predefined set of hyperparameter combinations, while Random Search explores a random subset of the hyperparameter space. Bayesian Optimization, by contrast, builds a probabilistic model of past results to decide which hyperparameters to try next. By using these techniques, Google's machine learning team can find good hyperparameters for its models quickly and efficiently.

Distributed Optimization

To handle massive amounts of data and complex models, Google employs distributed optimization methods. Specifically, it uses a distributed version of the SGD algorithm, which parallelizes the optimization process across multiple machines.

Lessons Learned

Google's experience with large-scale machine learning optimization offers valuable insights for companies seeking to implement similar methods. The key takeaways from Google's case study include:


- The importance of combining multiple optimization techniques, such as gradient-based and non-gradient methods, to achieve state-of-the-art performance.

- The benefits of implementing regularization techniques, such as L1 and L2 regularization, to prevent overfitting and improve generalization.

- The value of hyperparameter optimization techniques, such as Grid Search, Random Search, and Bayesian Optimization, for finding good hyperparameters for models.

- The power of distributed optimization methods in handling massive datasets and complex models.

By applying these lessons, companies can improve their large-scale machine learning optimization efforts, leading to better model performance, increased efficiency, and improved scalability.

Closing Notes

In conclusion, optimization methods for large-scale machine learning play a pivotal role in unlocking the potential of AI-driven systems. By adopting the right techniques, organizations can improve model performance, reduce computational costs, and stay competitive in their respective markets. Whether you're a seasoned practitioner or just starting your machine learning journey, this guide offers practical advice to help you optimize your large-scale machine learning workflows.

FAQ Insights

Q: What are the most common optimization methods used in machine learning?

A: Gradient descent, stochastic gradient descent, and regularization techniques are widely used in machine learning optimization.

Q: How can I choose the right optimization method for my large-scale machine learning model?

A: Consider the model's complexity, the size of the data, and the available computational resources to select the most suitable optimization method.

Q: What is the role of hyperparameter optimization in machine learning?

A: Hyperparameter optimization tunes settings such as the learning rate and regularization strength, which are not learned from the data, to improve the model's generalization and performance.