As The StatQuest Illustrated Guide to Machine Learning takes center stage, this opening passage introduces a guide built on expert knowledge and written to be both engaging and original.

In this comprehensive guide, readers will work through the fundamental concepts of machine learning, its applications, and its three main types: supervised, unsupervised, and reinforcement learning. Real-world examples illustrate the possibilities and limitations of machine learning and provide a solid foundation for further exploration.

Introduction to Machine Learning

Machine learning is a subfield of artificial intelligence (AI) that enables systems to learn from data and improve their performance on a task without being explicitly programmed. This is akin to how humans learn: exposure to experience and repeated practice let us acquire and refine skills. Just as you might learn a new language by constantly speaking it, machine learning algorithms learn by analyzing large datasets and adapting to the patterns they find.

Fundamental Concepts of Machine Learning

Machine learning encompasses three main types: supervised, unsupervised, and reinforcement learning. Each relies on the data provided to make predictions or decisions, so any discussion of the fundamental concepts must also consider the data a model is based on.

In supervised learning, the data is labeled, so the model can associate the desired outcomes with the given inputs. This type of learning is often used in image classification, natural language processing, and predicting continuous outcomes. For instance, self-driving cars use a combination of computer vision and machine learning to predict the movements of other cars. Typical applications include:

- Sales Forecasting
- Image Recognition
- Predicting Customer Churn

In sales forecasting, the algorithm is trained to predict future sales based on historical data. Using this information, companies can adjust their production levels, inventory management, and marketing strategies to meet customer demand.

In image recognition, the algorithm is trained to recognize patterns and objects in images. This technology is used in applications such as facial recognition, object detection in self-driving cars, and medical imaging analysis.

Predicting customer churn involves using historical data to identify patterns and behaviors that lead customers to switch to another service provider. This allows companies to offer targeted promotions and improve customer retention.

Types of Machine Learning

Machine learning is categorized into three main types based on the nature of the input data and the desired outcome. Each type has its own applications and is used in a range of industries.

- Supervised Learning

Supervised learning involves training a model on labeled data. The algorithm learns to associate the input data with the correct output based on the provided labels. This type of learning is commonly used in image classification, natural language processing, and forecasting. An example of supervised learning is a facial recognition system trained on images of known individuals, with labels indicating who they are.

- Unsupervised Learning

Unsupervised learning trains algorithms on unlabeled data, allowing them to discover patterns and relationships that may not be immediately apparent. This type of learning is often used in clustering, segmentation, and anomaly detection. For instance, in finance, unsupervised learning can help detect unusual transaction patterns and flag suspicious activity.

- Reinforcement Learning


Example of one-hot encoding and label encoding:

Suppose we have a categorical variable 'Color' with three possible values: 'Red', 'Blue', and 'Green'. One-hot encoding would result in three binary vectors:

"Red" -> [1, 0, 0]

"Blue" -> [0, 1, 0]

"Green" -> [0, 0, 1]

Label encoding would result in:

"Red" -> 1

"Blue" -> 2

"Green" -> 3

Advantages of decision trees:

- Easy to interpret: Decision trees provide a visual representation of the decision-making process, making the model easy to understand and interpret.
- Handling missing values: Some decision tree implementations can handle missing values in the data, for example by ignoring them during the splitting process.
- Handling non-linear relationships: Decision trees can capture non-linear relationships by recursively splitting the data into smaller subsets.

Disadvantages of decision trees:

- Overfitting: Decision trees can suffer from overfitting, where the model becomes too complex and fails to generalize well to new data.
- Instability: Decision trees can be sensitive to unusual samples such as outliers, where a small change in the training data can produce a noticeably different tree.

Advantages of random forests:

- Improved accuracy: Random forests can improve the accuracy of the model by combining the predictions from multiple trees.
- Reducing overfitting: Random forests can reduce overfitting by averaging the predictions from multiple trees.
- Handling high-dimensional data: Random forests can handle high-dimensional data by considering only a random subset of features, typically at each split.

Disadvantages of random forests:

- Computational complexity: Random forests can be computationally expensive, especially when dealing with large datasets.
- Difficulty in interpreting: Random forests can be difficult to interpret, because the model is an ensemble of multiple trees.

Advantages of SVMs:

- Good generalization: SVMs can generalize well to new data, especially when the classes are linearly separable.
- Handling non-linear relationships: SVMs can handle non-linear relationships by using a kernel function to map the data into a higher-dimensional space.
- Robust to outliers: SVMs can be fairly robust to outliers, because the decision boundary depends on the samples closest to the hyperplane rather than on every sample.

Disadvantages of SVMs:

- Sensitivity to hyperparameters: SVMs can be sensitive to hyperparameters, such as the kernel function and the regularization parameter.
- Long training time: SVMs can take a long time to train, especially when dealing with large datasets.

Steps of the k-means algorithm:

1. Choose an initial set of centroids.
2. Assign each data point to the cluster with the closest centroid.
3. Update the centroid of each cluster to be the mean of its assigned data points.
4. Repeat steps 2 and 3 until convergence or a stopping criterion is met.

Advantages of k-means:

- Easy to implement, especially when k is small.
- Scalable to large datasets, as each data point only needs to be compared with the centroids.
- Can absorb some noise when k is large, although centroids are means and therefore remain sensitive to extreme outliers.

Disadvantages of k-means:

- Sensitive to initial centroid placement, which can lead to local optima.
- No guarantee of producing a unique or meaningful clustering solution.

Types of hierarchical clustering:

- Agglomerative clustering starts with each data point as its own cluster and iteratively merges the closest pairs until a stopping criterion is met.
- Divisive clustering starts with all data points in a single cluster and iteratively splits the clusters until a stopping criterion is met.

Advantages of hierarchical clustering:

- Provides a hierarchical representation of the data, allowing exploration at different levels of granularity.
- Sensitive to local structure, capturing subtle patterns in the data.

Disadvantages of hierarchical clustering:

- Higher computational cost compared to k-means, especially for large datasets.
- More difficult to interpret and visualize the results.

Steps of PCA:

1. Compute the covariance matrix of the (mean-centered) data.
2. Find the eigenvectors of the covariance matrix, which represent the principal axes.
3. Sort the eigenvectors by their corresponding eigenvalues in descending order.
4. Choose the top k eigenvectors to form the new coordinate system.

Advantages of PCA:

- Reduces the dimensionality of the data, making it more tractable for analysis.
- Preserves as much of the variance in the data as the chosen number of components allows.

Disadvantages of PCA:

- No guarantee of retaining the original data distribution or relationships.
- Sensitivity to outliers and noise, which can affect the eigenvector computation.

Benefits of neural networks:

- Learn from large datasets without being explicitly programmed
- Handle complex relationships between variables
- Make predictions and decisions based on patterns in the data

Challenges of neural networks:

- Difficulty in understanding how they make their decisions
- Require large amounts of training data
- Prone to overfitting

Scenarios where neural networks are useful:

- Recognize patterns in large amounts of data
- Make predictions or decisions based on complex relationships between variables
- Improve accuracy in classification or regression tasks

Examples of such scenarios:

- Image classification: Neural networks can be used to classify images into their respective categories, such as animals, vehicles, or buildings.
- Natural language processing: RNNs can be used to process and understand natural language, enabling applications such as chatbots and language translation.
- Predictive maintenance: Neural networks can be used to predict when equipment is likely to fail, enabling proactive maintenance and reducing downtime.

Real-world applications of neural networks:

- Image recognition: Neural networks are used in facial recognition systems, self-driving cars, and medical imaging systems.
- NLP: RNNs are used in chatbots, language translation systems, and sentiment analysis.
- Predictive maintenance: Neural networks are used in industries such as manufacturing, healthcare, and finance to predict equipment failure and optimize maintenance schedules.

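Common evaluation metrics:

- Mean Squared Error (MSE)
- Mean Absolute Error (MAE)
- R-Squared (R²)

Factors to consider when comparing models:

- Accuracy
- Complexity
- Interpretability

Factors to consider when selecting the optimal model:

- Accuracy
- Complexity
- Interpretability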

Ensemble algorithms:

- Random Forest Algorithm (bagging): This algorithm trains multiple decision trees on different bootstrap samples of the dataset and combines their predictions to improve the accuracy of the model.
- AdaBoost Algorithm (boosting): This algorithm trains multiple decision trees sequentially on the same dataset and combines their predictions using a weighted sum. The weights used in the sum are determined by the performance of each decision tree on the training dataset.
- Gradient Boosting Algorithm (boosting): This algorithm trains multiple weak models sequentially, each one fitted to the errors of the models before it, and adds their predictions together to improve the accuracy of the model.
- Stacking Algorithm: This algorithm trains multiple models on the same dataset and combines their predictions using a meta-model. The meta-model is trained on the predictions of the multiple models, rather than on the original dataset.

Deep learning architectures:

- Convolutional Neural Networks (CNNs): These networks use convolutional and pooling layers to learn complex patterns in images.
- Recurrent Neural Networks (RNNs): These networks use recurrent layers to learn complex patterns in sequential data.
- Generative Adversarial Networks (GANs): These networks train a generator and a discriminator against each other so that the generator learns to produce realistic data.

- Requirements Gathering:
  - Determine the project scope
  - Identify the target audience
  - Define the expected outcomes
  - Engage with stakeholders
  - Formulate a project proposal
- Data Collection:
  - Determine the type of data required
  - Collect data from various sources
  - Clean and preprocess the data
  - Analyze the data for insights
- Type of Data:
  - Structured data (e.g., tables, databases)
  - Semi-structured data (e.g., JSON files, XML files)
  - Unstructured data (e.g., text, images)
- Python Libraries:
  - Scikit-learn
  - TensorFlow
  - PyTorch
- IDEs:
  - Visual Studio Code
  - PyCharm

- Encryption: Use secure encryption protocols to protect data both in transit and at rest.
- Access controls: Implement strict access controls to ensure only authorized personnel have access to sensitive data.
- Secure data storage: Store data in a secure environment, protected from unauthorized access and breaches.
- Compliance with regulations: Familiarize yourself with the relevant regulations and ensure compliance with them.

- Data analysis: Examine the dataset for signs of bias, such as uneven representation of certain groups or attributes.
- Statistical testing: Use statistical methods to test for bias, such as fairness metrics and sensitivity analysis.
- Human oversight: Regularly review and assess model performance to detect and address biases.

Reinforcement learning allows machines to learn through trial and error by interacting with an environment and receiving rewards or penalties. It is commonly used in robotics, autonomous vehicles, and game playing. Consider a robot learning to navigate a new space by receiving rewards for correct actions and penalties for hitting obstacles.

The following table summarizes the types of machine learning:

| Type | Description | Example Application |
| --- | --- | --- |
| Supervised | Training on labeled data | Facial recognition |
| Unsupervised | Training on unlabeled data | Anomaly detection |
| Reinforcement | Learning through trial and reward | Autonomous vehicles |

Real-world Applications of Machine Learning

Machine learning has numerous real-world applications across industries, including but not limited to:

- Automated Customer Service

Automated customer service systems use machine learning to provide personalized support and resolve customer complaints quickly. For instance, chatbots and virtual assistants are built to understand and respond to user queries based on patterns in the data.

- Healthcare Diagnosis

Machine learning in healthcare diagnosis enables the analysis of medical images and patient data to predict the likelihood of disease or identify high-risk patients. For instance, algorithms can detect abnormalities in MRI scans to help diagnose conditions such as tumors or cysts.

- Financial Risk Management

In financial risk management, machine learning algorithms analyze market trends, economic indicators, and customer data to predict potential losses and suggest mitigation strategies. This approach helps organizations manage their risk exposure and make informed investment decisions.

Data Preprocessing

Data preprocessing is the first step in the machine learning pipeline, where raw data is cleaned, transformed, and formatted for model training. This process involves handling missing data, normalizing and standardizing features, and encoding categorical variables.

Handling Missing Data

Missing data can arise for various reasons, such as non-response, data collection errors, or data degradation over time. Common ways to handle it include mean imputation, median imputation, and deletion.

Mean imputation replaces missing values with the mean of the respective feature. It is typically used when the data distribution is roughly symmetric, so that the mean is a good representative of the data.

Mean = (Sum of all non-missing values) / (Number of non-missing values)

Median imputation replaces missing values with the median of the respective feature. It is used when the data distribution is skewed, because the median is less affected by extreme values than the mean.

Deletion involves removing rows or columns that contain missing values. It is used when missing values are sparse and removing them does not significantly affect the data.
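The three strategies above can be sketched in a few lines of plain Python. This is only an illustrative sketch: missing values are assumed to be represented as `None`, and the function names `impute` and `drop_missing` are hypothetical helpers, not part of any library.

```python
from statistics import mean, median

def impute(values, strategy="mean"):
    """Replace None entries with the mean or median of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a feature with no observed values")
    fill = mean(observed) if strategy == "mean" else median(observed)
    return [fill if v is None else v for v in values]

def drop_missing(rows):
    """Deletion: keep only the rows that contain no None."""
    return [row for row in rows if None not in row]

ages = [20, None, 30, 100]
mean_filled = impute(ages, "mean")      # the outlier 100 pulls the fill value up
median_filled = impute(ages, "median")  # the median is less affected by 100
complete_rows = drop_missing([(1, 2), (None, 3), (4, 5)])
```

With the skewed `ages` list, the mean fill value is 50 while the median fill value is 30, which illustrates why the median is often preferred for skewed features.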

Data Normalization and Standardization

Data normalization and standardization are techniques used to bring features onto a common scale. Normalization rescales features to a specific range, usually between 0 and 1, to prevent features with large ranges from dominating the model.

Standardization, on the other hand, rescales features to have a mean of 0 and a standard deviation of 1. This helps many models learn more efficiently and improves the convergence of optimization algorithms.
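As a minimal sketch of both techniques, the following plain-Python functions rescale a single feature. The function names are hypothetical, and the standardization here assumes the population standard deviation (`pstdev`); some libraries use the sample standard deviation instead.

```python
from statistics import mean, pstdev

def min_max_scale(values):
    """Normalization: rescale values linearly into the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:  # constant feature: avoid division by zero
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def standardize(values):
    """Standardization: rescale values to mean 0 and standard deviation 1."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:  # constant feature: avoid division by zero
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

incomes = [20, 30, 40, 50, 60]
normalized = min_max_scale(incomes)
standardized = standardize(incomes)
```

After scaling, `normalized` runs from 0.0 to 1.0 in equal steps, and `standardized` is centered on zero with unit spread.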

Encoding Categorical Variables

Categorical variables are features that take a limited number of distinct values. Encoding them means transforming these values into numerical representations that models can use.

One-hot encoding represents each category as a binary vector. For example, if a variable has 3 possible values, one-hot encoding produces a 3-element vector with a 1 at the index of the actual value and 0s elsewhere.

One-hot encoding example:

| Value | One-hot Encoding |
| --- | --- |
| A | [1, 0, 0] |
| B | [0, 1, 0] |
| C | [0, 0, 1] |

Label encoding is another method, in which each category is represented by a unique numerical value. It is simpler than one-hot encoding, but it can mislead a model into treating the categories as ordered (for example, reading C > B > A) when no such order exists.
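The two encodings can be sketched as follows. This is an illustrative sketch, not a library API: the function names are hypothetical, categories are indexed in order of first appearance, and label codes start at 0 (an arbitrary choice; any set of unique integers would do).

```python
def one_hot_encode(values):
    """Return (categories, vectors) with one binary vector per input value."""
    categories = list(dict.fromkeys(values))  # unique values, first-seen order
    index = {c: i for i, c in enumerate(categories)}
    vectors = []
    for v in values:
        vec = [0] * len(categories)
        vec[index[v]] = 1
        vectors.append(vec)
    return categories, vectors

def label_encode(values):
    """Return (categories, codes) with one integer code per input value."""
    categories = list(dict.fromkeys(values))
    index = {c: i for i, c in enumerate(categories)}
    return categories, [index[v] for v in values]

colors = ["Red", "Blue", "Green", "Blue"]
categories, one_hot = one_hot_encode(colors)
_, labels = label_encode(colors)
```

Note that the one-hot vectors grow with the number of categories, while label encoding always yields a single integer per value.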

Supervised Learning Algorithms

Supervised learning is a type of machine learning in which the algorithm is trained on labeled data, where the correct output is already known. This lets the model learn the relationship between inputs and outputs so that it can make predictions on new, unseen data. Supervised learning algorithms are widely used in fields such as image classification, natural language processing, and recommendation systems. In this section, we'll explore three popular supervised learning algorithms: decision trees, random forests, and support vector machines.

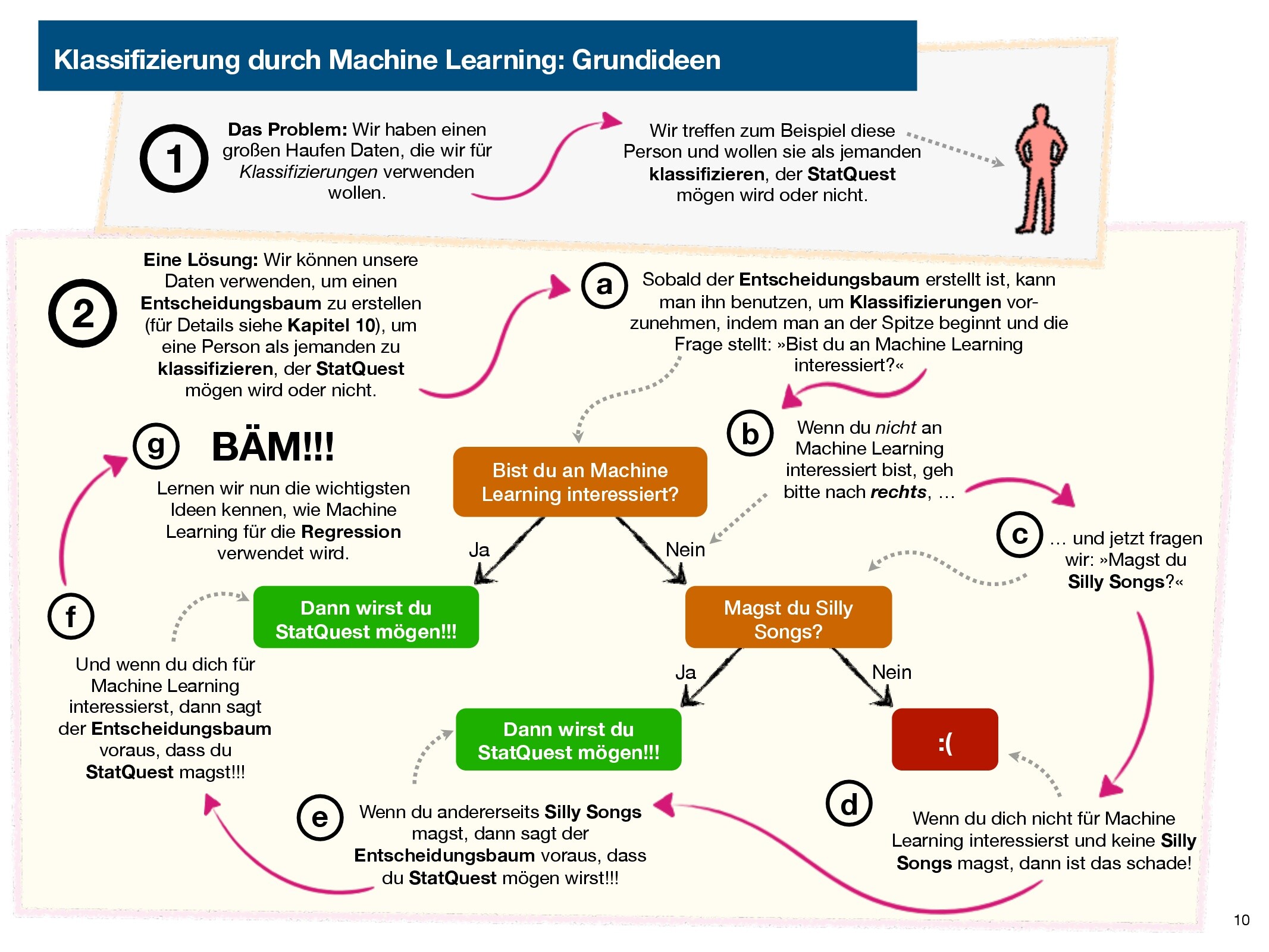

Decision Trees

A decision tree is a supervised learning algorithm that works by recursively partitioning the data into smaller subsets based on the features. It starts by choosing the best feature on which to split the data, and then recursively splits the resulting subsets until a stopping criterion is met. The model makes a prediction by traversing the tree from the root node to a leaf node, where the predicted output is determined by the majority vote of the training samples in that leaf (or by their average, for regression).

Decision trees are often used for classification problems, but can also be used for regression problems.
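To make "choosing the best feature to split the data" concrete, here is a minimal sketch that scores candidate thresholds on a single numeric feature. It assumes Gini impurity as the split criterion, which the text above does not specify (entropy is another common choice), and the function names are hypothetical.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0.0 for a pure node, larger for mixed nodes."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_threshold(xs, ys):
    """Return (threshold, weighted_impurity) for the best split 'x <= threshold'."""
    best = (None, float("inf"))
    for t in sorted(set(xs))[:-1]:  # skip the max so the right side is never empty
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(ys)
        if score < best[1]:
            best = (t, score)
    return best

hours_studied = [1, 2, 3, 6, 7, 8]
passed = ["no", "no", "no", "yes", "yes", "yes"]
threshold, impurity = best_threshold(hours_studied, passed)
```

A full tree would apply `best_threshold` to every feature, split on the best one, and recurse on each side until a stopping criterion such as maximum depth is reached.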

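The advantages of decision trees include ease of interpretation, since the tree is a visual representation of the decision process; the ability, in some implementations, to handle missing values; and the ability to capture non-linear relationships through recursive splitting.

However, decision trees also have disadvantages: they can overfit when the tree grows too complex, and they can be unstable, since a few unusual samples can change the resulting tree.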

Random Forests

A random forest is an ensemble learning algorithm that combines multiple decision trees to improve the model's performance. It works by training many decision trees on random subsets of the data and then averaging the predictions of the trees (or taking a majority vote, for classification) to produce the final output. Random forests tend to cope well with outliers and high-dimensional data, and some implementations also handle missing values, which makes them a popular choice for many applications.

Random forests are often used for classification and regression problems.

The advantages of random forests include improved accuracy from combining many trees, reduced overfitting through averaging, and the ability to handle high-dimensional data by considering random subsets of features.

However, random forests also have disadvantages: they can be computationally expensive on large datasets, and an ensemble of many trees is harder to interpret than a single tree.

Support Vector Machines

A support vector machine (SVM) is a supervised learning algorithm that works by finding the hyperplane that maximally separates the classes in the feature space. It solves a quadratic programming problem to find the optimal hyperplane, one that minimizes the classification error while maximizing the margin between the classes. The SVM model can handle non-linear relationships by using a kernel function to map the data into a higher-dimensional space.
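For reference, the trade-off between error and margin described above is usually written as the soft-margin objective below. The notation is an assumption introduced here for illustration: $w$ and $b$ define the hyperplane, $y_i \in \{-1, +1\}$ are the class labels, and $C$ is the regularization parameter.

```latex
\min_{w,\, b} \;\; \frac{1}{2} \lVert w \rVert^2
  \;+\; C \sum_{i=1}^{n} \max\bigl(0,\; 1 - y_i \,(w \cdot x_i + b)\bigr)
```

The first term rewards a wide margin (the margin width is $2 / \lVert w \rVert$), and the second term penalizes samples that fall inside the margin or on the wrong side of the hyperplane.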

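SVMs are often used for classification problems, but can also be used for regression problems.

The advantages of SVMs include good generalization, especially when the classes are linearly separable; the ability to handle non-linear relationships through kernel functions; and a degree of robustness to outliers, because the decision boundary depends mainly on the samples nearest the hyperplane.

However, SVMs also have disadvantages: they can be sensitive to hyperparameters such as the kernel function and the regularization parameter, and they can take a long time to train on large datasets.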

Unsupervised Learning Algorithms

In machine learning, unsupervised learning algorithms are designed to uncover hidden patterns and relationships in data without any prior knowledge of the correct output. This makes it possible to discover new insights and to understand complex data distributions.

Clustering Algorithms: K-Means and Hierarchical Clustering

Unsupervised learning algorithms can be broadly divided into clustering and dimensionality reduction techniques. Clustering algorithms, in particular, are useful for grouping similar data points into meaningful categories. Two popular clustering algorithms are k-means and hierarchical clustering.

K-Means Clustering

K-means clustering is an iterative algorithm that partitions the data into k clusters, where each cluster is represented by a centroid, the mean of its points. The algorithm chooses an initial set of centroids, assigns each data point to the cluster with the closest centroid, updates each centroid to the mean of its assigned points, and repeats the assignment and update steps until convergence or another stopping criterion is met.

K-means clustering can be formalized as minimizing the within-cluster sum of squared distances, $\min \sum_{C=1}^{k} \sum_{x_i \in C} \lVert x_i - \mu_C \rVert^2$, where $x_i$ represents a data point, $C$ denotes a cluster, and $\mu_C$ denotes the centroid of cluster $C$.
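The assign-and-update loop of k-means can be sketched in plain Python as follows. This is a minimal sketch under stated assumptions: points are tuples of floats, the initial centroids are picked at random from the data (other initialization schemes exist), and the function names and the `seed` parameter are hypothetical.

```python
import random

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def k_means(points, k, max_iters=100, seed=0):
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # initialization: k random data points
    assignments = None
    for _ in range(max_iters):
        # Assignment step: each point joins the cluster of its nearest centroid.
        new_assignments = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        if new_assignments == assignments:  # converged: nothing changed
            break
        assignments = new_assignments
        # Update step: each centroid moves to the mean of its assigned points.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return centroids, assignments

points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, assignments = k_means(points, k=2)
```

On this well-separated toy data the first three points end up in one cluster and the last three in the other; which cluster gets index 0 depends on the random initialization.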

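The k-means algorithm has several advantages: it is easy to implement, and it scales to large datasets because each data point only needs to be compared with the centroids.

However, k-means also has some disadvantages: it is sensitive to the initial placement of the centroids, which can lead to local optima, and it offers no guarantee of producing a unique or meaningful clustering.

Examples of scenarios where k-means clustering might be used include:

- Customer segmentation: grouping customers based on their buying habits and demographics.
- Image compression: clustering similar pixel colors together so that an image can be stored with a smaller palette.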

Hierarchical Clustering

Hierarchical clustering, on the other hand, builds a hierarchy of clusters by merging or splitting existing clusters. The result can be visualized as a dendrogram, which represents the cluster tree. There are two main types of hierarchical clustering: agglomerative, which starts with every point as its own cluster and merges the closest pairs, and divisive, which starts with a single cluster and splits it.
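A minimal sketch of the agglomerative (bottom-up) variant is shown below. It assumes single linkage, meaning the distance between two clusters is the distance between their closest members; the text above does not prescribe a linkage, and the function name `single_linkage` is hypothetical. The brute-force search is only suitable for small datasets.

```python
def single_linkage(points, n_clusters):
    """Merge the two closest clusters until n_clusters remain."""
    clusters = [[p] for p in points]  # start: every point is its own cluster

    def cluster_distance(a, b):
        # Single linkage: distance between the closest pair of members.
        return min(
            sum((x - y) ** 2 for x, y in zip(p, q)) ** 0.5
            for p in a for q in b
        )

    while len(clusters) > n_clusters:
        pairs = ((i, j) for i in range(len(clusters))
                 for j in range(i + 1, len(clusters)))
        i, j = min(pairs, key=lambda ij: cluster_distance(clusters[ij[0]], clusters[ij[1]]))
        clusters[i] = clusters[i] + clusters[j]  # merge cluster j into cluster i
        del clusters[j]
    return clusters

points = [(0.0,), (1.0,), (10.0,), (11.0,), (25.0,)]
clusters = single_linkage(points, 3)
```

Recording the order and distance of each merge, rather than stopping at a fixed number of clusters, would give the information needed to draw a dendrogram.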

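Hierarchical clustering has the advantage of providing a hierarchical representation of the data, which allows exploration at different levels of granularity, and of being sensitive to local structure, so it can capture subtle patterns in the data.

However, it also has some disadvantages: it has a higher computational cost than k-means, especially for large datasets, and its results can be more difficult to interpret and visualize.

Examples of scenarios where hierarchical clustering might be used include:

- Gene expression analysis: grouping genes based on their expression levels in different tissues.
- Network community detection: identifying clusters of highly interconnected nodes in a network.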

Dimensionality Reduction: Principal Component Analysis (PCA)

Principal Component Analysis (PCA) is a dimensionality reduction technique that transforms the data into a new coordinate system whose axes are the principal components. The components are ordered so that the first few axes capture most of the variance in the data.
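As a rough illustration, the sketch below finds only the first principal component. It computes the covariance matrix of the mean-centered data and then uses power iteration to approximate the dominant eigenvector, a simple stand-in assumed here in place of a full eigendecomposition; the function names are hypothetical and the covariance matrix is assumed to be non-zero.

```python
def covariance_matrix(rows):
    """Sample covariance matrix of mean-centered data (rows are samples)."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    return [
        [sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
        for i in range(d)
    ]

def first_principal_component(cov, iters=200):
    """Approximate the dominant eigenvector and eigenvalue by power iteration."""
    d = len(cov)
    v = [1.0] * d
    for _ in range(iters):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    # Rayleigh quotient: variance captured along direction v.
    eigenvalue = sum(v[i] * sum(cov[i][j] * v[j] for j in range(d)) for i in range(d))
    return v, eigenvalue

data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]  # points on the line y = 2x
cov = covariance_matrix(data)
direction, variance = first_principal_component(cov)
```

Because the toy data lies exactly on the line y = 2x, the recovered direction is proportional to (1, 2) and captures all of the variance. In practice a linear algebra library would be used to obtain all components at once.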

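PCA has the advantage of reducing the dimensionality of the data, which makes it more tractable for analysis, while preserving as much of the variance in the data as possible.

However, it also has some disadvantages: there is no guarantee that the original data distribution or relationships are retained, and it is sensitive to outliers and noise, which can affect the eigenvector computation.

Examples of scenarios where PCA might be used include:

- Image feature extraction: reducing the dimensionality of image data to improve classification performance.
- Stock portfolio optimization: capturing the underlying relationships between different stocks and reducing the dimensionality of the data.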

Neural Networks

Neural networks represent a significant leap in machine learning. They are loosely modeled on the structure of the human brain and consist of interconnected nodes (neurons) that process and transmit information. This network of nodes allows machines to learn from data without being explicitly programmed, which has transformed the field of artificial intelligence.

Feedforward Neural Networks

Feedforward neural networks are among the most basic types of neural networks. Information flows in one direction only, from the input layer through the hidden layers to the output layer. Each node combines its inputs, applies a non-linear transformation (the activation function), and passes the result to the next layer.

A simple example of a feedforward neural network is a multi-layer perceptron, which can be thought of as a series of connected layers of neurons. The first layer, the input layer, receives the data, while the last layer, the output layer, produces the result. In between, there can be any number of hidden layers.
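The forward pass described above can be sketched in plain Python. This is an illustration only: the weights are hypothetical, hand-picked numbers rather than trained values, and the sigmoid is assumed as the activation function for every layer.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense(inputs, weights, biases, activation):
    """One fully connected layer: activation(w . x + b) for each neuron."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def forward(x, layers):
    """Pass x through each layer in turn, from input to output."""
    for weights, biases in layers:
        x = dense(x, weights, biases, sigmoid)
    return x

# Hypothetical weights: 2 inputs -> 2 hidden neurons -> 1 output neuron.
layers = [
    ([[0.5, -0.5], [1.0, 1.0]], [0.0, -1.0]),  # hidden layer
    ([[1.0, -1.0]], [0.0]),                    # output layer
]
output = forward([1.0, 1.0], layers)
```

Training would adjust the weights and biases to reduce a loss function, typically with backpropagation, which is not shown here.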

Convolutional Neural Networks

Convolutional neural networks (CNNs) are designed to handle image and video data. They use a series of convolutional and pooling layers to extract features from the input. The convolutional layers apply filters to the data to identify features, and the pooling layers reduce the dimensionality of the data.

The key advantage of CNNs is their ability to learn spatial hierarchies of features from images, which helps them recognize objects at different positions and, to a degree, at different scales. A simple example of a CNN is LeNet-5, which uses convolutional and pooling layers to classify handwritten digits.

Recurrent Neural Networks

Recurrent neural networks (RNNs) are designed to handle sequential data. They use recurrent connections so that the output at one time step can depend on the inputs from earlier time steps.

RNNs are particularly useful for natural language processing, where the relationships between words in a sentence are sequential. A simple example is a network with a single recurrent layer that predicts the next word in a sentence given the previous words.

Advantages and Challenges of Neural Networks

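The benefits of neural networks include the ability to learn from large datasets without being explicitly programmed, to handle complex relationships between variables, and to make predictions and decisions based on patterns in the data.

However, neural networks also present several challenges: it is difficult to understand how they reach their decisions, they require large amounts of training data, and they are prone to overfitting.

Scenarios Where Neural Networks Would Be Used

Neural networks are particularly useful when there is a need to recognize patterns in large amounts of data, to make predictions or decisions based on complex relationships between variables, or to improve accuracy in classification or regression tasks.

Examples of such scenarios include image classification, natural language processing, and predictive maintenance.

Real-World Applications of Neural Networks

Neural networks have a wide range of real-world applications, including image recognition in facial recognition systems, self-driving cars, and medical imaging; natural language processing in chatbots, translation systems, and sentiment analysis; and predictive maintenance in industries such as manufacturing.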

Model Evaluation and Selection

Model evaluation and selection are crucial steps in the machine learning workflow. They ensure that the chosen model is accurate, reliable, and generalizable to unseen data. In this section, we'll look at the importance of model evaluation, the key metrics used for comparison, and strategies for selecting the optimal model.

Metrics for Model Evaluation

When evaluating a machine learning model, we typically use a set of metrics to measure its performance. These metrics are essential for understanding how well the model is doing on a particular task. Common metrics for regression models include Mean Squared Error (MSE), Mean Absolute Error (MAE), and R-Squared (R²).

Mean Squared Error (MSE) measures the average squared difference between predicted and actual values. It is calculated as the sum of the squared differences between predicted and actual values, divided by the number of samples n. The formula for MSE is:

$\mathrm{MSE} = \frac{1}{n} \sum (y_{\text{predicted}} - y_{\text{actual}})^2$

Mean Absolute Error (MAE) measures the average absolute difference between predicted and actual values. It is calculated as the sum of the absolute differences between predicted and actual values, divided by the number of samples. The formula for MAE is:

$\mathrm{MAE} = \frac{1}{n} \sum \lvert y_{\text{predicted}} - y_{\text{actual}} \rvert$

R-Squared (R²), also known as the coefficient of determination, measures the proportion of variance in the dependent variable that is explained by the independent variable(s). It is calculated as 1 minus the ratio of the residual sum of squares to the total sum of squares. The formula for R² is:

$R^2 = 1 - \frac{SS_{\text{res}}}{SS_{\text{tot}}}$
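The three formulas above translate directly into code. The following is a minimal plain-Python sketch; the function names are hypothetical, and `r_squared` assumes the actual values are not all identical (otherwise the total sum of squares is zero).

```python
def mse(y_actual, y_predicted):
    """Mean squared error: average of squared differences."""
    return sum((p - a) ** 2 for a, p in zip(y_actual, y_predicted)) / len(y_actual)

def mae(y_actual, y_predicted):
    """Mean absolute error: average of absolute differences."""
    return sum(abs(p - a) for a, p in zip(y_actual, y_predicted)) / len(y_actual)

def r_squared(y_actual, y_predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_actual = sum(y_actual) / len(y_actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(y_actual, y_predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in y_actual)
    return 1 - ss_res / ss_tot

y_actual = [3.0, 5.0, 7.0, 9.0]
y_predicted = [2.0, 5.0, 8.0, 9.0]
mse_value = mse(y_actual, y_predicted)
mae_value = mae(y_actual, y_predicted)
r2_value = r_squared(y_actual, y_predicted)
```

For this toy data two of the four predictions are off by 1, so MSE and MAE are both 0.5, and R² is 0.9 because the residual sum of squares (2) is one tenth of the total sum of squares (20).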

In addition to these metrics, we also use cross-validation to evaluate a model's performance. Cross-validation involves splitting the dataset into training and validation sets, training the model on the training set, and evaluating its performance on the validation set. This process is repeated several times, with a different subset of the data held out for validation each time. By averaging the performance metrics across all iterations, we get a more reliable estimate of the model's performance on unseen data.
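A common form of this procedure is k-fold cross-validation, sketched below in plain Python. The helper names are hypothetical, the folds are taken in order without shuffling (real workflows usually shuffle first), and the "model" is a deliberately trivial baseline that always predicts the mean of its training targets.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for each of k folds."""
    indices = list(range(n_samples))
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, validation
        start += size

def cross_validate(xs, ys, k, fit, score):
    """Average the validation score over k folds."""
    scores = []
    for train, val in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        scores.append(score(model, [xs[i] for i in val], [ys[i] for i in val]))
    return sum(scores) / len(scores)

# Hypothetical baseline: the "model" is just the mean of the training targets.
def fit_mean(train_x, train_y):
    return sum(train_y) / len(train_y)

def mse_score(model, val_x, val_y):
    return sum((model - y) ** 2 for y in val_y) / len(val_y)

folds = list(k_fold_indices(5, 2))
average_mse = cross_validate([0, 1, 2, 3, 4], [1.0, 1.0, 1.0, 3.0, 3.0], 2, fit_mean, mse_score)
```

Every sample appears in exactly one validation fold, so each data point contributes to the performance estimate exactly once.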

Overfitting Detection and Hyperparameter Tuning

Overfitting occurs when a model is too complex, leading it to fit the noise in the training data rather than the underlying patterns. To detect overfitting, we can plot the training and validation accuracy (or loss) over the course of training. A large gap between the two, with training performance much better than validation performance, may indicate overfitting.

Hyperparameter tuning involves adjusting the model's hyperparameters, the settings chosen before training rather than learned from the data, to optimize its performance. This is typically done with techniques like grid search or random search, where we try different combinations of hyperparameters and select the combination that yields the best validation performance.
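Grid search can be sketched as an exhaustive loop over all combinations of candidate values. In the sketch below, `toy_validation_score` is a hypothetical stand-in for "train a model with these hyperparameters and return its validation score", and the hyperparameter names `max_depth` and `learning_rate` are illustrative assumptions.

```python
from itertools import product

def grid_search(param_grid, evaluate):
    """Try every combination in param_grid; return the best (params, score)."""
    names = list(param_grid)
    best_params, best_score = None, float("-inf")
    for combo in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, combo))
        score = evaluate(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score

# Hypothetical scoring function that peaks at max_depth=3, learning_rate=0.1.
def toy_validation_score(params):
    return -((params["max_depth"] - 3) ** 2) - (params["learning_rate"] - 0.1) ** 2

grid = {"max_depth": [1, 3, 5], "learning_rate": [0.01, 0.1, 1.0]}
best_params, best_score = grid_search(grid, toy_validation_score)
```

Random search follows the same pattern but samples a fixed number of combinations instead of enumerating all of them, which is often cheaper when the grid is large.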

Comparing Models

When comparing models, we need to consider several factors, including accuracy, complexity, and interpretability.

We also need to decide how to select the best model for a given problem. This involves weighing the trade-offs between different models and choosing the one that is most suitable for the task at hand.

Selecting the Optimal Model

The same three factors, accuracy, complexity, and interpretability, guide the final selection.

We can use various metrics to compare the performance of different models. Selecting the model that performs best on held-out data, while keeping complexity and interpretability in mind, helps ensure that the chosen model is accurate, reliable, and generalizable to unseen data.

Advanced Techniques

Advanced techniques in machine learning are essential for solving complex problems and improving model accuracy. In this section, we'll discuss three advanced techniques: ensemble methods, transfer learning, and deep learning.

Ensemble Methods

Ensemble methods combine the predictions of multiple models to improve overall accuracy. The models are trained on the same dataset, or on samples drawn from it, and their predictions are combined using techniques such as bagging, boosting, and stacking.

Bagging, short for Bootstrap Aggregating, involves training multiple models on different bootstrap samples of the dataset (random samples drawn with replacement) and then combining their predictions by voting or averaging. This helps reduce overfitting and improves the robustness of the model. The Random Forest algorithm is a well-known form of bagging that trains multiple decision trees on different samples of the dataset.

For example, let's say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can train multiple decision trees on different samples of the dataset and then combine their predictions to improve the accuracy of the model.
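The bagging idea can be sketched on a toy one-feature version of the cats-and-dogs problem. Everything here is an illustrative assumption: the single numeric feature, the data values, and the weak learner `train_stump`, which is a hypothetical threshold rule for two classes rather than a real decision tree.

```python
import random
from collections import Counter

def bootstrap_sample(data, rng):
    """Draw len(data) items with replacement."""
    return [rng.choice(data) for _ in data]

def train_stump(sample):
    """Hypothetical weak learner: threshold halfway between the two class means."""
    by_label = {}
    for x, label in sample:
        by_label.setdefault(label, []).append(x)
    if len(by_label) == 1:  # the sample happened to contain only one class
        only = next(iter(by_label))
        return lambda x: only
    (lo_label, lo_mean), (hi_label, hi_mean) = sorted(
        ((label, sum(xs) / len(xs)) for label, xs in by_label.items()),
        key=lambda item: item[1],
    )
    threshold = (lo_mean + hi_mean) / 2
    return lambda x: hi_label if x > threshold else lo_label

def bagging_predict(models, x):
    """Combine the models by majority vote."""
    votes = Counter(model(x) for model in models)
    return votes.most_common(1)[0][0]

rng = random.Random(0)
data = [(1.0, "cat"), (2.0, "cat"), (3.0, "cat"), (7.0, "dog"), (8.0, "dog"), (9.0, "dog")]
models = [train_stump(bootstrap_sample(data, rng)) for _ in range(25)]
prediction_small = bagging_predict(models, 1.5)
prediction_large = bagging_predict(models, 8.5)
```

Each stump sees a slightly different sample, so individual thresholds vary, but the majority vote smooths out those differences.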

Boosting

Boosting involves training multiple models one after another, where each new model concentrates on the training examples that the earlier models got wrong. In AdaBoost, for instance, misclassified examples are given higher weights before the next model is trained, so later models focus on the hard cases. The final prediction is a weighted sum of the individual predictions, in which models that perform well are given higher weights and models that perform poorly are given lower weights.

For example, let's say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can train a sequence of shallow decision trees, each one paying more attention to the images the previous trees misclassified, and then combine their predictions using a weighted sum. The weights in the sum are determined by the performance of each decision tree on the training dataset.
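To make the sequential nature of boosting concrete, here is a small sketch using scikit-learn's `AdaBoostClassifier`, one common boosting algorithm. The synthetic dataset and the choice of 50 rounds are assumptions for the example. `staged_score` reports test accuracy after each boosting round, which shows how the ensemble changes as trees are added.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import AdaBoostClassifier
from sklearn.model_selection import train_test_split

# Synthetic stand-in for image features (an assumption for illustration).
X, y = make_classification(n_samples=400, n_features=12, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# AdaBoost fits shallow trees sequentially, reweighting the training examples
# after each round so that later trees focus on earlier mistakes.
boosted = AdaBoostClassifier(n_estimators=50, random_state=0)
boosted.fit(X_train, y_train)

# Test accuracy of the ensemble after each boosting round.
staged_accuracy = list(boosted.staged_score(X_test, y_test))
print(f"after 1 round: {staged_accuracy[0]:.3f}")
print(f"after {len(staged_accuracy)} rounds: {staged_accuracy[-1]:.3f}")
```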

Stacking

Stacking involves training multiple models on the same dataset and combining their predictions using a meta-model. The meta-model is trained on the predictions of the base models, rather than on the original features, and those predictions are usually produced with cross-validation so the meta-model does not simply learn from overfitted outputs. This helps to improve accuracy by combining the strengths of several different models.

For example, let's say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can train multiple decision trees on the same dataset, and then combine their predictions using a meta-model. The meta-model is trained on the predictions of the decision trees, rather than on the original dataset.
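The sketch below shows one way stacking might look with scikit-learn's `StackingClassifier`. The base models (a decision tree and a k-nearest-neighbors classifier), the logistic regression meta-model, and the synthetic data are all assumptions picked for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for image features (an assumption for illustration).
X, y = make_classification(n_samples=400, n_features=12, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# Base models whose cross-validated predictions become the meta-model's inputs.
base_models = [
    ("tree", DecisionTreeClassifier(max_depth=3, random_state=0)),
    ("knn", KNeighborsClassifier()),
]

# The meta-model (final_estimator) learns how to combine the base predictions.
stack = StackingClassifier(estimators=base_models, final_estimator=LogisticRegression(), cv=5)
stack.fit(X_train, y_train)
stacking_accuracy = stack.score(X_test, y_test)
print(f"stacking accuracy: {stacking_accuracy:.3f}")
```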

Transfer Learning

Transfer learning involves using a pre-trained model as a starting point for a new model. This helps to speed up the training process and improve the accuracy of the model by leveraging the knowledge learned by the pre-trained model. Fine-tuning a pre-trained model involves adjusting its weights to fit the new dataset.

For example, let's say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can use a pre-trained model, such as VGG16, as a starting point for our model. We can fine-tune the weights of the pre-trained model to fit our dataset, rather than training a new model from scratch.

VGG16 is a convolutional neural network whose publicly available weights were trained on ImageNet, a large dataset of labeled images.
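The idea can be sketched without downloading VGG16. In the example below, a small scikit-learn network trained on digits 0 to 4 plays the role of the pre-trained model, and its hidden layer is reused, frozen, to classify digits 5 to 9. This is feature extraction, a simpler variant of transfer learning than full fine-tuning, swapped in so the sketch stays lightweight. The dataset split, layer size, and helper `frozen_features` are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier

X, y = load_digits(return_X_y=True)
X = X / 16.0  # pixel values in this dataset range from 0 to 16

# Source task: digits 0-4 stand in for the large dataset used in pre-training.
source = y < 5
pretrained = MLPClassifier(hidden_layer_sizes=(32,), max_iter=500, random_state=0)
pretrained.fit(X[source], y[source])

def frozen_features(inputs):
    """Apply the pre-trained hidden layer (ReLU) without updating its weights."""
    return np.maximum(0.0, inputs @ pretrained.coefs_[0] + pretrained.intercepts_[0])

# Target task: digits 5-9, which the pre-trained network never saw.
X_target, y_target = X[~source], y[~source]
X_train, X_test, y_train, y_test = train_test_split(
    X_target, y_target, train_size=100, random_state=0, stratify=y_target
)

# Only this small classifier head is trained on the new task.
head = LogisticRegression(max_iter=2000)
head.fit(frozen_features(X_train), y_train)
target_accuracy = head.score(frozen_features(X_test), y_test)
print(f"target-task accuracy with 100 training images: {target_accuracy:.3f}")
```

With a deep learning framework, the same pattern applies at larger scale: load the pre-trained network, freeze most of its layers, and train a new output layer (and optionally the last few layers) on the new dataset.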

Deep Learning

Deep learning involves using neural networks with multiple layers to learn complex patterns in data. Each layer builds on the output of the previous one, so early layers can capture simple features and later layers can combine them into more abstract ones.

For example, let's say we have a dataset of images of cats and dogs, and we want to train a model to classify them. We can use a convolutional neural network (CNN) with multiple layers to learn complex patterns in the images.

Convolutional Neural Networks (CNNs) are a type of neural network that is particularly well-suited to image classification tasks.
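To give a feel for what a single convolutional layer computes, here is a small NumPy sketch. It slides a hand-written 3x3 vertical-edge kernel over a tiny 5x5 image and applies a ReLU. In a real CNN the kernel values are learned during training; the image, kernel, and function name `conv2d_valid` are assumptions for illustration.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide the kernel over the image (no padding) and sum the elementwise products."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Tiny 5x5 "image": two dark columns on the left, three bright columns on the right.
image = np.array([[0, 0, 1, 1, 1]] * 5, dtype=float)

# Hand-written kernel that responds to dark-to-bright vertical edges.
kernel = np.array([[-1, 0, 1]] * 3, dtype=float)

feature_map = conv2d_valid(image, kernel)
activated = np.maximum(feature_map, 0.0)  # ReLU keeps only positive responses
print(activated)
```

The output is large where the window straddles the edge and zero where the window covers a uniform region, which is the kind of local pattern detection that stacked convolutional layers build on.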

Implementing Machine Learning Projects

A machine learning project begins with a clear understanding of the problem statement and the gathering of requirements. This involves defining the project scope, identifying the target audience, and determining the expected outcomes. The subsequent steps of data collection, feature engineering, and model building are all crucial for ensuring that the solution meets the project’s goals. In this section, we’ll delve into the project planning and development process, and explore the various tools and technologies available for implementing machine learning projects.

Project Planning

Project planning is an indispensable step in the machine learning pipeline. It begins with requirements gathering, which entails identifying the problem statement, defining the project scope, and determining the expected outcomes. This process involves stakeholder engagement, data analysis, and the formulation of a project proposal. A well-planned project makes it far more likely that the solution meets the project’s goals and that resources are allocated efficiently.

A clear problem statement and well-defined project scope are essential for ensuring that the solution meets the project’s goals.

Data Collection

Data collection is a critical step in the machine learning pipeline. It involves identifying the type of data required, collecting data from various sources, and preprocessing the data for analysis. There are several types of data that can be used in machine learning, including structured data (e.g., tables, databases), semi-structured data (e.g., JSON and XML files), and unstructured data (e.g., text, images).

Structured data follows a fixed schema, semi-structured data carries some organization (such as keys or tags) without a rigid schema, and unstructured data lacks a predefined format.
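The difference between the three kinds of data can be seen in a few lines of standard-library Python. The table, JSON records, and sentence below are made-up examples for illustration only.

```python
import json
import sqlite3

# Structured: every row in a database table has the same typed columns.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, age INTEGER, churned INTEGER)")
conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", [(1, 34, 0), (2, 51, 1)])
structured_rows = conn.execute(
    "SELECT id, age, churned FROM customers ORDER BY id"
).fetchall()
conn.close()

# Semi-structured: JSON records are keyed, but fields can differ per record.
semi_structured = json.loads(
    '[{"id": 1, "tags": ["new"]}, {"id": 2, "address": {"city": "Oslo"}}]'
)
keys_per_record = [sorted(record) for record in semi_structured]

# Unstructured: free text has no fields at all until we impose some, e.g. tokens.
unstructured = "The delivery was late but support resolved it quickly."
tokens = unstructured.lower().rstrip(".").split()

print(structured_rows)
print(keys_per_record)
print(tokens)
```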

Selecting the Right Tools and Technologies

The choice of tools and technologies for a machine learning project depends on various factors, including the project scope, the type of data, and the expertise of the team. Some popular Python libraries and frameworks for machine learning include Scikit-learn, TensorFlow, and PyTorch. An Integrated Development Environment (IDE) can also be used to facilitate project development.

The choice of library depends on the project requirements and the expertise of the team.

Popular IDEs for Machine Learning

IDEs can be used to facilitate project development by providing features such as code completion, debugging, and project organization. Some popular IDEs for machine learning include Visual Studio Code and PyCharm.

IDEs can improve productivity by providing features such as code completion and debugging.

Ethical Considerations and Responsible Use

In the realm of machine learning, a subtle yet crucial aspect often gets overlooked: ethics. As models become increasingly sophisticated, the risk of unintended consequences grows. It's essential to acknowledge the responsibility that comes hand-in-hand with these technological advancements.

Data Privacy and Security

Data privacy and security are two sides of the same coin. When it comes to machine learning, securing sensitive data is of paramount importance. This includes safeguarding personal information, medical data, and financial records, among others. If sensitive data falls into the wrong hands, the consequences can be severe. To prevent such a scenario, it's crucial to implement robust security measures, such as encryption, access controls, and secure data storage. Complying with regulations like GDPR, HIPAA, and CCPA holds organizations to concrete standards for data protection.

Algorithmic Bias and Detection

Algorithmic bias is a stealthy enemy that can creep into machine learning models without warning. It arises when a model is trained on biased data or has design flaws, leading to biased outcomes. If a recommendation engine consistently suggests the same products to a particular group of users, it's time to take a closer look. Detecting algorithmic bias requires a combination of human oversight, data analysis, and statistical testing. By recognizing these biases, we can make informed decisions to improve the fairness and accuracy of our models.
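One simple data-analysis check is to compare how often a model gives a positive outcome to each group. The sketch below computes per-group selection rates and their gap, sometimes called the demographic parity difference. The predictions, group labels, and function name `selection_rates` are invented for illustration, and a large gap is a prompt for further investigation rather than proof of bias on its own.

```python
import numpy as np

def selection_rates(predictions, groups):
    """Fraction of positive predictions within each group."""
    return {str(g): float(predictions[groups == g].mean()) for g in np.unique(groups)}

# Hypothetical model outputs (1 = recommended) and group membership per user.
predictions = np.array([1, 1, 1, 0, 1, 0, 0, 0, 1, 0])
groups = np.array(list("AAAAABBBBB"))

rates = selection_rates(predictions, groups)
parity_gap = max(rates.values()) - min(rates.values())

print(rates)
print(f"demographic parity difference: {parity_gap:.2f}")
```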

Explainable AI and Model Auditing

Explainable AI (XAI) is a subset of machine learning that focuses on providing clarity and transparency into the decision-making process of complex models. By using techniques like feature importance and partial dependence plots, XAI allows us to understand how models arrive at their conclusions. Imagine being able to ask your model, “Hey, what led you to recommend this product?” and receiving a clear, concise answer. Model auditing, on the other hand, involves evaluating a model’s performance, identifying areas for improvement, and ensuring it meets business requirements. By combining XAI and model auditing, we can build trust in our models and make informed decisions.

Techniques like SHAP and LIME attribute individual predictions to the input features. Together with feature importance scores and partial dependence plots, they give insight into the decision-making process.
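As a small sketch of two of these ideas, the example below uses scikit-learn's built-in inspection tools rather than the separate SHAP or LIME packages. It computes permutation feature importance and the partial dependence of the prediction on one feature. The synthetic dataset (in which only the first two features carry signal) and the random forest model are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import partial_dependence, permutation_importance
from sklearn.model_selection import train_test_split

# Synthetic data: with shuffle=False the two informative features come first.
X, y = make_classification(
    n_samples=300, n_features=5, n_informative=2, n_redundant=0,
    shuffle=False, random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

model = RandomForestClassifier(n_estimators=50, random_state=0).fit(X_train, y_train)

# Permutation importance: how much the test score drops when a feature is shuffled.
importance = permutation_importance(model, X_test, y_test, n_repeats=5, random_state=0)
ranking = np.argsort(importance.importances_mean)[::-1]
print("features ranked by importance:", ranking.tolist())

# Partial dependence: average prediction as feature 0 varies over a grid.
pd_result = partial_dependence(model, X_test, features=[0], kind="average", grid_resolution=20)
pd_curve = pd_result["average"][0]
print("partial dependence curve for feature 0:", np.round(pd_curve, 2))
```

Plotting `pd_curve` against the grid of feature values gives the partial dependence plot mentioned above.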

During model auditing, we can identify areas for improvement, such as model performance, feature engineering, and data preprocessing.

Final Word

This guide represents a culmination of knowledge, bringing together key concepts, terminology, and expert advice. By the end of this journey, you'll possess a deeper understanding of machine learning, its benefits, and its potential risks. Whether you're a seasoned professional or a beginner, the StatQuest Illustrated Guide to Machine Learning will equip you with the essentials to succeed in this rapidly evolving field.

FAQ Section

What is machine learning?

Machine learning is a type of artificial intelligence that enables systems to learn from data, making predictions or decisions based on patterns and trends without being explicitly programmed.

What are the types of machine learning?

The main categories of machine learning are supervised, unsupervised, and reinforcement learning. Supervised learning involves training on labeled data, unsupervised learning focuses on discovering patterns in unlabeled data, and reinforcement learning involves learning through trial and error.

What is the importance of data preprocessing?

Data preprocessing is a crucial step in machine learning that involves handling missing data, normalizing features, and encoding categorical variables to prepare the data for modeling.
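The three preprocessing steps named in this answer can be sketched with scikit-learn and pandas as follows. The tiny customer table, its column names, and the choice of median imputation are assumptions for illustration.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical raw data with missing values and one categorical column.
df = pd.DataFrame({
    "age": [25.0, np.nan, 47.0, 33.0],
    "income": [40000.0, 52000.0, np.nan, 61000.0],
    "plan": ["basic", "premium", "basic", "standard"],
})

# Numeric columns: fill missing values with the median, then standardize.
numeric = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
])

# Categorical column: one-hot encode, ignoring categories unseen during fitting.
categorical = OneHotEncoder(handle_unknown="ignore")

preprocess = ColumnTransformer(
    [("num", numeric, ["age", "income"]), ("cat", categorical, ["plan"])],
    sparse_threshold=0.0,  # always return a dense array
)

features = preprocess.fit_transform(df)
print(features.shape)
```

Wrapping the steps in a single transformer means the same imputation values, scaling statistics, and category list learned from the training data are reused when new data arrives.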