With micromodels at the forefront of machine learning for NLP, this topic opens a window onto a world of exciting possibilities and insights in artificial intelligence. Micromodels in machine learning NLP are revolutionary models that have gained recognition for their ability to learn and adapt from large amounts of data, making them an important component of modern AI.

These models differ from traditional machine learning models in their ability to provide a more accurate and nuanced understanding of complex tasks such as language translation, text summarization, and sentiment analysis. By analyzing the strengths and limitations of micromodels, researchers and developers can unlock new applications and improve existing ones, pushing the boundaries of what is possible in AI.

Introduction to Micromodels in Machine Learning NLP

In the bustling world of machine learning and Natural Language Processing (NLP), a new trend is emerging: micromodels. Micromodels are small, domain-specific models, most often compact neural networks, that have gained popularity because of their ability to process and understand complex language patterns. They have been applied successfully to NLP tasks such as language translation, sentiment analysis, and text summarization.

A Brief History of Micromodels in NLP

The concept of micromodels grew out of the idea of distilling complex neural networks into smaller, more manageable models. In 2019, researchers introduced the concept of “Tiny Neural Networks”, which were designed to be highly expressive yet compact. Micromodels have since evolved into an important component of many NLP pipelines, enabling applications with better performance, speed, and interpretability.

The Importance of Micromodels in NLP Applications

Micromodels have revolutionized the field of NLP by offering several key benefits:

Efficient Inference

Micromodels require significantly fewer computational resources than larger neural networks. This enables real-time inference and faster processing of large volumes of text data.
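The sketch below makes the resource gap concrete by counting the parameters of a simple classifier at two sizes. The classifier has an embedding table, one dense hidden layer and a dense output layer. Both the architecture and the sizes are hypothetical and chosen only for illustration. Real models add further components.

```python
def param_count(vocab_size: int, embed_dim: int, hidden_dim: int, n_classes: int) -> int:
    """Parameters of a hypothetical classifier: embedding table, one dense
    hidden layer (weights + bias), and a dense output layer (weights + bias)."""
    embedding = vocab_size * embed_dim
    hidden = embed_dim * hidden_dim + hidden_dim
    output = hidden_dim * n_classes + n_classes
    return embedding + hidden + output


# Hypothetical sizes for a "micro" model and a much larger one.
small = param_count(vocab_size=20_000, embed_dim=64, hidden_dim=64, n_classes=2)
large = param_count(vocab_size=50_000, embed_dim=768, hidden_dim=3072, n_classes=2)

print(f"small: {small:,} parameters (~{small * 4 / 1e6:.1f} MB as float32)")
print(f"large: {large:,} parameters (~{large * 4 / 1e6:.1f} MB as float32)")
print(f"ratio: {large / small:.1f}x")
```

With these sizes the small model has 1,284,290 parameters and the large one has 40,768,514, roughly 32 times as many. Fewer parameters means less memory. For the dense layers, it also means fewer multiply-adds per input.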

Improved Performance

On narrow, well-defined tasks, micromodels have been shown to match and sometimes surpass the performance of larger neural networks.

Enhanced Interpretability

Because they are small and focused, micromodels offer insight into their decision-making process, helping developers understand and improve the model's behavior.

Micromodels have been used in many NLP applications, including language translation, sentiment analysis, and text summarization.

“Micromodels can be seen as a bridge between traditional machine learning and deep learning, offering the best of both worlds.”

What are Micromodels?

Micromodels are relatively new in machine learning, particularly in Natural Language Processing (NLP). Unlike traditional machine learning models, micromodels focus on smaller, more specific tasks, combining the advantages of symbolic reasoning and connectionist learning.

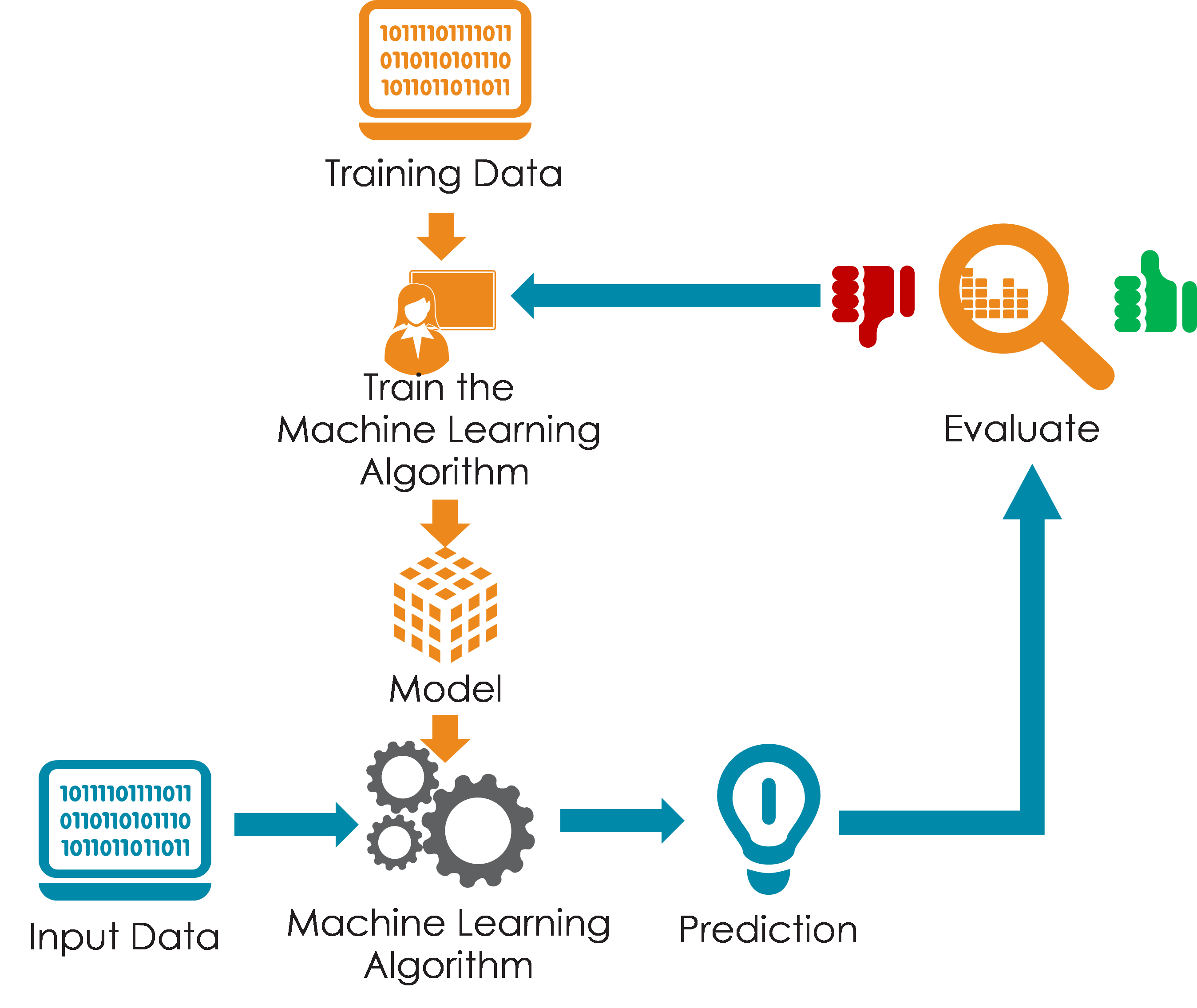

Traditionally, machine learning models have relied on the collective power of complex neural networks trained on enormous datasets to learn relationships between inputs and outputs. However, such models often struggle to handle the complexity and nuance of human language, which is a fundamental aspect of NLP applications. These limitations led to the development of micromodels, which take a distinct approach by breaking the problem down into smaller, more manageable pieces.

Difference between Micromodels and Traditional Machine Learning Models

Traditional machine learning models are complex networks that process vast amounts of data. Micromodels, by contrast, are more modular, with each one focusing on a smaller, related task. Unlike their traditional counterparts, micromodels use symbolic reasoning in addition to connectionist learning. Symbolic systems loosely mimic how humans think, using symbolic representations of knowledge and rules to derive conclusions. This hybrid approach gives micromodels a competitive edge on specific NLP tasks such as language interpretation, information retrieval, and text classification.
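Here is a minimal sketch of the hybrid idea. It assumes a simple design: hand-written symbolic rules take precedence, and a learned scorer handles everything else. The rules, the weight table and the function names are all hypothetical. The weights stand in for values a trained model would produce.

```python
import re
from typing import Optional

# Hypothetical word weights standing in for the output of a trained model.
LEARNED_WEIGHTS = {"great": 1.5, "love": 1.2, "bad": -1.4, "refund": -0.8, "slow": -0.9}


def learned_score(text: str) -> float:
    """Connectionist part: sum the learned weights of the tokens in the text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return sum(LEARNED_WEIGHTS.get(tok, 0.0) for tok in tokens)


def symbolic_rules(text: str) -> Optional[str]:
    """Symbolic part: explicit rules that decide the label outright when they fire."""
    lowered = text.lower()
    if "cancel my account" in lowered:
        return "negative"
    if "thank you" in lowered:
        return "positive"
    return None  # no rule fired


def hybrid_classify(text: str) -> str:
    """Apply the rules first; fall back to the learned score otherwise."""
    label = symbolic_rules(text)
    if label is not None:
        return label
    score = learned_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(hybrid_classify("I love it, great service"))            # positive (learned score)
print(hybrid_classify("Great phone, but cancel my account"))  # negative (rule fired)
```

Letting the rules win makes the known edge cases fully predictable. The learned part still generalizes to inputs the rules do not cover.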

Examples of Micromodels in NLP Applications

Micromodels are used in a variety of NLP applications, such as information retrieval, question-answering systems, text classification, and sentiment analysis.

Using micromodels simplifies many NLP tasks, allowing for better performance and more accurate results. For example, a micromodel designed for sentiment analysis might not only look for keywords but also consider the context in which they are used.
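Negation handling is one simple way to give a sentiment micromodel some awareness of the context in which words appear. A sentiment word's polarity is flipped when a negator appears shortly before it. The sketch assumes a tiny hand-made lexicon and a three-token window, both hypothetical. It illustrates the idea rather than any particular published model.

```python
import re

# Tiny illustrative lexicon; a real micromodel would learn or curate far more entries.
LEXICON = {"good": 1, "great": 2, "happy": 1, "bad": -1, "terrible": -2, "slow": -1}
NEGATORS = {"not", "never", "no", "isn't", "wasn't", "don't"}


def sentiment_score(text: str, window: int = 3) -> int:
    """Sum lexicon scores, flipping a word's sign if a negator appears
    within the previous `window` tokens."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, tok in enumerate(tokens):
        if tok not in LEXICON:
            continue
        value = LEXICON[tok]
        context = tokens[max(0, i - window):i]  # the tokens just before this word
        if any(t in NEGATORS for t in context):
            value = -value
        score += value
    return score


print(sentiment_score("The food was good"))      # 1
print(sentiment_score("The food was not good"))  # -1
```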

Strengths and Limitations of Micromodels in NLP

Micromodels offer a more precise and efficient approach to NLP, which comes with several strengths:

- Efficient and Precise: Micromodels can be designed to handle specific NLP tasks, providing a more focused solution than traditional machine learning models.

- Hierarchical Reasoning: They combine symbolic reasoning with connectionist learning, mimicking human thought processes and deriving conclusions from symbolic representations of knowledge and rules.

- Flexibility: Because micromodels are modular, each one can be updated, modified, or replaced without disrupting the rest of the larger system.
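The modularity point can be sketched as a pipeline of named stages, where any one stage can be swapped without touching the others. The `Pipeline` class, the stage names and the keyword-based labeler are hypothetical placeholders for real micromodels.

```python
from typing import Callable, Dict

Stage = Callable[[str], str]


def normalize(text: str) -> str:
    """Lowercase and collapse runs of whitespace."""
    return " ".join(text.lower().split())


def strip_punctuation(text: str) -> str:
    """Keep only letters, digits, and whitespace."""
    return "".join(ch for ch in text if ch.isalnum() or ch.isspace())


def keyword_labeler(text: str) -> str:
    """Placeholder classifier stage (hypothetical)."""
    return "complaint" if "broken" in text.split() else "other"


class Pipeline:
    """Runs named stages in order; each stage is an independent micromodel."""

    def __init__(self, stages: Dict[str, Stage]):
        self.stages = dict(stages)  # dicts keep insertion order (Python 3.7+)

    def replace(self, name: str, stage: Stage) -> None:
        """Swap one stage in place; the other stages are untouched."""
        if name not in self.stages:
            raise KeyError(f"no stage named {name!r}")
        self.stages[name] = stage

    def __call__(self, text: str) -> str:
        for stage in self.stages.values():
            text = stage(text)
        return text


pipeline = Pipeline({
    "normalize": normalize,
    "clean": strip_punctuation,
    "label": keyword_labeler,
})
print(pipeline("  My phone is BROKEN!! "))  # complaint

# Swap only the labeling stage, e.g. for a retrained or different micromodel.
pipeline.replace("label", lambda t: "urgent" if "broken" in t.split() else "routine")
print(pipeline("  My phone is BROKEN!! "))  # urgent
```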

However, micromodels also have some limitations:

- Complexity of Integration: Integrating micromodels into a larger system can be complex, especially if the models were not designed with integration in mind.

- Overfitting: If not properly trained, micromodels can overfit the training data, making them less effective on unseen data.

- Limited Scalability: Although micromodels are efficient and precise, they may struggle with very large datasets or complex tasks that require a larger model.

Types of Micromodels

In machine learning and NLP, micromodels are an important component that enables efficient and accurate processing of information. Understanding the different types of micromodels is key to using them well. Below, we look at the main categories of micromodels and their characteristics.

1. Rule-based Micromodels

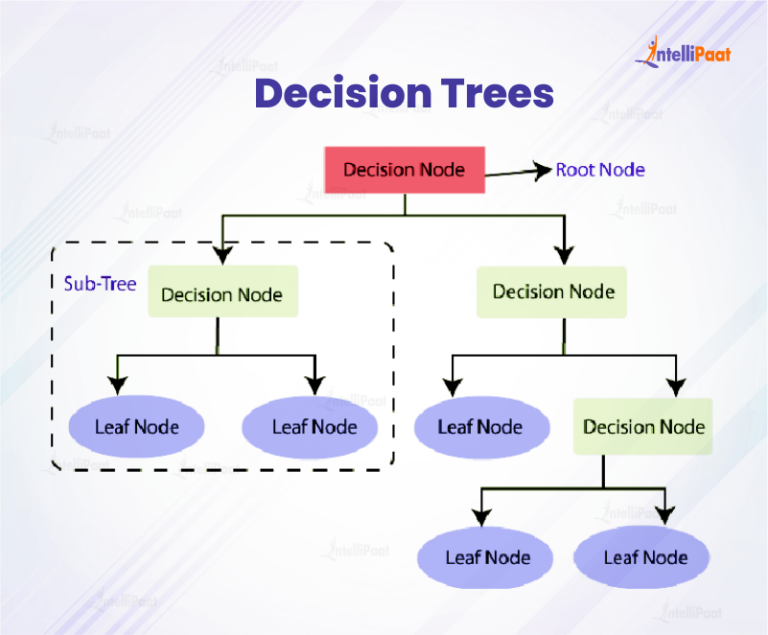

Rule-based micromodels operate on predetermined rules and decision-making processes that are hard-coded into the system. These rules usually take the form of IF-THEN statements or decision trees. This type of micromodel is particularly useful when the rules are well defined and do not require continuous adaptation. Because the rules are explicit, humans can read and understand the decision-making process. However, rule-based micromodels can be limited in their ability to handle complex or uncertain situations.

| Rule-based Micromodel Characteristic | Example |
|---|---|
| Fixed set of rules | Prioritizing customer complaints based on severity and urgency |
| Decision-making process is explicit | Using a decision tree to estimate the likelihood of a customer buying a product |
| May not handle complex or uncertain situations well | Difficulty handling ambiguous customer feedback |
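The first row of the table, prioritizing complaints by severity and urgency, can be written as a handful of ordered IF-THEN rules. The severity scale, the thresholds and the priority labels below are hypothetical. The point is that every decision can be traced to a specific, human-readable rule.

```python
from dataclasses import dataclass


@dataclass
class Complaint:
    text: str
    severity: int      # hypothetical scale: 1 (minor) to 5 (critical)
    hours_open: float  # time since the complaint was filed


def prioritize(c: Complaint) -> str:
    """Ordered IF-THEN rules; the first rule that fires decides the priority."""
    text = c.text.lower()
    # Rule 1: severe issues or anything mentioning an outage go straight to the top.
    if c.severity >= 4 or "outage" in text:
        return "P1"
    # Rule 2: moderate issues escalate once they have waited more than a day.
    if c.severity == 3 and c.hours_open > 24:
        return "P1"
    # Rule 3: moderate issues, or anything left open for over two days.
    if c.severity == 3 or c.hours_open > 48:
        return "P2"
    # Default rule.
    return "P3"


print(prioritize(Complaint("Total outage in my region", severity=2, hours_open=1)))  # P1
print(prioritize(Complaint("Billing error", severity=3, hours_open=2)))               # P2
print(prioritize(Complaint("Typo on the website", severity=1, hours_open=5)))         # P3
```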

2. Statistical Micromodels

Statistical micromodels use statistical methods to make predictions or classify data. They often rely on supervised learning techniques such as decision trees, Bayesian networks, or random forests. This type of micromodel is useful when there is a large amount of data and the relationships between variables are complex. Statistical micromodels can be retrained on historical data to improve their accuracy over time.

| Statistical Micromodel Characteristic | Example |
|---|---|
| Uses statistical methods to make predictions or classify data | Predicting customer churn from past usage patterns |
| Often relies on supervised learning techniques | Training a decision tree to classify customer feedback as positive or negative |
| Can be retrained on historical data for improved accuracy | Updating a Bayesian network with new customer purchase data |
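A multinomial Naive Bayes classifier makes a good example of a small statistical micromodel. It can be viewed as the simplest Bayesian network, because the class node is the only parent of every word. It is a swapped-in illustration rather than a technique the text prescribes. The training sentences are invented and far too few for real use.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayes:
    """Multinomial Naive Bayes with Laplace (add-alpha) smoothing."""

    def __init__(self, alpha=1.0):
        self.alpha = alpha

    def fit(self, texts, labels):
        self.class_counts = Counter(labels)      # documents per class (for priors)
        self.word_counts = defaultdict(Counter)  # word frequencies per class
        self.vocab = set()
        for text, label in zip(texts, labels):
            tokens = tokenize(text)
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def predict(self, text):
        total_docs = sum(self.class_counts.values())
        best_label, best_logp = None, -math.inf
        for label, n_docs in self.class_counts.items():
            logp = math.log(n_docs / total_docs)  # log prior
            counts = self.word_counts[label]
            denom = sum(counts.values()) + self.alpha * len(self.vocab)
            for tok in tokenize(text):
                if tok in self.vocab:  # skip words never seen in training
                    logp += math.log((counts[tok] + self.alpha) / denom)
            if logp > best_logp:
                best_label, best_logp = label, logp
        return best_label


texts = [
    "great product, love it",
    "works great",
    "terrible, broke in a day",
    "awful support, terrible",
    "love the design",
]
labels = ["pos", "pos", "neg", "neg", "pos"]

model = NaiveBayes().fit(texts, labels)
print(model.predict("love it, great"))    # pos
print(model.predict("terrible support"))  # neg
```

Retraining on new data, as the table's last row describes, amounts to calling `fit` again on the enlarged dataset.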

3. Neural Micromodels

Neural micromodels use artificial neural networks to process and analyze data. These models can learn complex patterns and relationships in data through a process called deep learning. Neural micromodels are often used where traditional machine learning methods fall short, in tasks such as natural language processing, computer vision, and recommender systems.

| Neural Micromodel Characteristic | Example |
|---|---|
| Uses artificial neural networks | Using a neural network to classify customer reviews as positive or negative |
| Can learn complex patterns and relationships in data | Identifying complex customer purchasing behavior with a neural network |
| Often used for tasks such as NLP and computer vision | Using a neural network to translate customer feedback from one language to another |
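The smallest possible neural micromodel is a single sigmoid neuron over bag-of-words features. It is trained by stochastic gradient descent on log loss, which makes it equivalent to logistic regression. The sketch assumes this minimal setup, with hypothetical hyperparameters and invented data. Practical neural micromodels stack more layers, but the training loop has the same shape.

```python
import math
import random
import re


def tokenize(text):
    return re.findall(r"[a-z']+", text.lower())


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


class TinyNeuron:
    """One sigmoid unit over bag-of-words counts, trained with SGD on log loss."""

    def __init__(self, lr=0.5, epochs=200, seed=0):
        self.lr, self.epochs, self.seed = lr, epochs, seed
        self.w = {}   # one weight per vocabulary word
        self.b = 0.0  # bias

    def _forward(self, tokens):
        z = self.b + sum(self.w.get(t, 0.0) for t in tokens)
        return sigmoid(z)

    def predict_proba(self, text):
        """Probability that the text belongs to the positive class."""
        return self._forward(tokenize(text))

    def fit(self, texts, labels):
        data = [(tokenize(t), y) for t, y in zip(texts, labels)]
        rng = random.Random(self.seed)
        for _ in range(self.epochs):
            rng.shuffle(data)
            for tokens, y in data:
                grad = self._forward(tokens) - y  # derivative of log loss w.r.t. z
                self.b -= self.lr * grad
                for t in tokens:  # repeated tokens get one update per occurrence
                    self.w[t] = self.w.get(t, 0.0) - self.lr * grad
        return self


texts = [
    "great product, love it",
    "works great",
    "terrible, broke in a day",
    "awful support, terrible",
    "love the design",
]
labels = [1, 1, 0, 0, 1]

model = TinyNeuron().fit(texts, labels)
for text in texts:
    print(f"{model.predict_proba(text):.2f}  {text}")
```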

Micromodel Architectures

In micromodels, the architecture plays a crucial role in determining the model's performance, complexity, and flexibility. The architecture of a micromodel is the structural organization of its components, and it can significantly affect the model's ability to capture complex patterns and relationships in the data. In this section, we look at the micromodel architectures that have gained prominence in NLP.

Micromodels can be broadly grouped into three main architectures: Layered, Tree-like, and Graph-based. Each has its own characteristics, advantages, and use cases.

Layered Micromodels

Layered Micromodels are the most traditional and widely used architecture in NLP. This architecture consists of multiple layers, each responsible for processing the input data in a particular way. The layers are typically arranged hierarchically, with the input flowing through each layer in turn until it reaches the final output layer.

A key characteristic of Layered Micromodels is their use of activation functions, which introduce non-linearity into the model and allow it to capture complex relationships between features. The most common layers in a Layered Micromodel are:

- Embedding Layer: Converts input words or tokens into dense vectors, called embeddings, that capture the semantic meaning of the words.

- Recurrent Neural Network (RNN) Layer: Uses RNNs to process sequential data, such as text or speech, and capture the temporal relationships between inputs.

- Convolutional Neural Network (CNN) Layer: Uses CNNs to process the input in a convolutional manner. CNNs are especially common in image and audio processing; in NLP they are used to capture local patterns such as n-grams.

- Attention Layer: Lets the model focus on specific parts of the input, such as certain words or sentences, when producing output.
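Two of the layers listed above can be sketched in a few lines: an embedding layer feeding an attention layer that pools token vectors into one sentence vector. The attention step is scaled dot-product attention with a single query vector. That is one common way to build an attention layer, not the only one. The class name, the vocabulary and the dimensions are hypothetical. The weights are random rather than learned, and the RNN and CNN layers are omitted.

```python
import math
import random
from typing import List, Tuple


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


class TinyLayeredEncoder:
    """Embedding layer followed by one attention-pooling layer.

    Weights are random (seeded) here; in a real micromodel they would be learned.
    """

    def __init__(self, vocab: List[str], dim: int = 8, seed: int = 0):
        rng = random.Random(seed)
        self.dim = dim
        self.index = {word: i for i, word in enumerate(vocab)}
        self.unk = len(vocab)  # extra row shared by out-of-vocabulary tokens
        self.embeddings = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(len(vocab) + 1)]
        self.query = [rng.gauss(0.0, 1.0) for _ in range(dim)]  # attention query vector

    def embed(self, tokens: List[str]) -> List[List[float]]:
        """Embedding layer: look up one dense vector per token."""
        return [self.embeddings[self.index.get(t, self.unk)] for t in tokens]

    def attend(self, vectors: List[List[float]]) -> Tuple[List[float], List[float]]:
        """Attention layer: score each vector against the query, softmax the
        scores, and return the weighted average vector plus the weights."""
        scale = math.sqrt(self.dim)
        scores = [sum(q * x for q, x in zip(self.query, vec)) / scale for vec in vectors]
        weights = softmax(scores)
        pooled = [sum(w * vec[d] for w, vec in zip(weights, vectors)) for d in range(self.dim)]
        return pooled, weights

    def encode(self, text: str) -> Tuple[List[float], List[float]]:
        tokens = text.lower().split()
        if not tokens:
            raise ValueError("cannot encode empty text")
        return self.attend(self.embed(tokens))


encoder = TinyLayeredEncoder(["the", "movie", "was", "great"], dim=8)
vector, weights = encoder.encode("The movie was great")
print(len(vector))                     # 8
print([round(w, 3) for w in weights])  # one weight per token; they sum to 1
```

The attention weights show which tokens contributed most to the sentence vector. This is one source of the interpretability claimed for micromodels.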

The advantages of Layered Micromodels include their ability to capture complex patterns, handle sequential data, and build on pre-trained language models. However, they can be computationally expensive and require careful hyperparameter tuning.

Tree-like Micromodels

Tree-like Micromodels are a variation of the Layered architecture in which each layer is represented as a decision tree. This architecture is particularly useful for tasks involving hierarchical classification or regression, such as document categorization or sentiment analysis.

The key characteristic of Tree-like Micromodels is their use of decision trees, which recursively partition the input space into smaller subspaces based on the input features. The decision trees are typically shallow, with only a few levels, to prevent overfitting.
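The recursive partitioning described above can be sketched as a shallow decision tree over word-presence features. Each split is chosen by Gini impurity, which is a common criterion but an assumption here. The documents, the labels and the depth limit are hypothetical.

```python
from collections import Counter


def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def build_tree(docs, labels, max_depth=2, depth=0):
    """docs: list of sets of words. Recursively split on the word whose
    presence/absence gives the lowest weighted Gini impurity."""
    majority = Counter(labels).most_common(1)[0][0]
    if depth == max_depth or len(set(labels)) == 1:
        return majority  # leaf: depth limit reached or node is pure
    best = None
    for word in sorted(set().union(*docs)):
        left = [y for d, y in zip(docs, labels) if word in d]
        right = [y for d, y in zip(docs, labels) if word not in d]
        if not left or not right:
            continue  # this word does not split the node
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if best is None or score < best[0]:
            best = (score, word)
    if best is None:
        return majority
    word = best[1]
    yes = [(d, y) for d, y in zip(docs, labels) if word in d]
    no = [(d, y) for d, y in zip(docs, labels) if word not in d]
    return {
        "word": word,
        "yes": build_tree([d for d, _ in yes], [y for _, y in yes], max_depth, depth + 1),
        "no": build_tree([d for d, _ in no], [y for _, y in no], max_depth, depth + 1),
    }


def predict(tree, doc):
    """Follow the splits from the root down to a leaf label."""
    while isinstance(tree, dict):
        tree = tree["yes"] if tree["word"] in doc else tree["no"]
    return tree


docs = [
    {"invoice", "payment", "due"},
    {"payment", "refund"},
    {"match", "goal", "team"},
    {"team", "coach"},
    {"goal", "score"},
]
labels = ["finance", "finance", "sports", "sports", "sports"]

tree = build_tree(docs, labels, max_depth=2)
print(tree)  # {'word': 'payment', 'yes': 'finance', 'no': 'sports'}
print(predict(tree, {"late", "payment"}))  # finance
```

The `max_depth` cap implements the "shallow trees" idea. A single split already separates this toy data, so the tree stops early.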

One advantage of Tree-like Micromodels is their ability to handle high-dimensional input data while limiting overfitting. They are also relatively fast to train and can be parallelized efficiently. However, they may not capture complex relationships between features as effectively as Layered Micromodels.

Graph-based Micromodels

Graph-based Micromodels represent the input data as a graph, in which nodes represent entities and edges represent the relationships between them. This architecture is particularly useful for tasks such as entity recognition, relation extraction, and graph classification.

A key characteristic of Graph-based Micromodels is their use of graph neural networks (GNNs), which process graph-structured data efficiently and capture complex relationships between entities. A GNN typically consists of multiple layers, each of which updates every node's representation using information from its neighbors.
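A single message-passing layer is the basic building block of many GNNs. The sketch below uses mean aggregation: each node combines its own feature vector with the average of its neighbors' vectors, then applies a ReLU. The entity graph, the feature values and the weight matrices are hypothetical. In a trained GNN the weights would be learned, and several such layers would be stacked.

```python
from typing import Dict, List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix m by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def relu(v: Vector) -> Vector:
    return [max(0.0, x) for x in v]


def message_passing_layer(
    features: Dict[str, Vector],
    edges: List[Tuple[str, str]],
    w_self: Matrix,
    w_neigh: Matrix,
) -> Dict[str, Vector]:
    """One GNN layer over an undirected graph:
    h_v' = ReLU(W_self h_v + W_neigh * mean(h_u for neighbours u of v))."""
    neighbours: Dict[str, List[str]] = {node: [] for node in features}
    for a, b in edges:
        neighbours[a].append(b)
        neighbours[b].append(a)

    updated = {}
    for node, h in features.items():
        nbrs = neighbours[node]
        if nbrs:
            mean = [sum(features[u][i] for u in nbrs) / len(nbrs) for i in range(len(h))]
        else:
            mean = [0.0] * len(h)  # isolated node: no messages
        own, msg = matvec(w_self, h), matvec(w_neigh, mean)
        updated[node] = relu([x + y for x, y in zip(own, msg)])
    return updated


# Hypothetical entity graph: "Alice works at Acme", "Acme is based in Paris".
features = {"Alice": [1.0, 0.0], "Acme": [0.0, 1.0], "Paris": [0.0, 0.0]}
edges = [("Alice", "Acme"), ("Acme", "Paris")]
w_self = [[1.0, 0.0], [0.0, 1.0]]   # identity: keep own features
w_neigh = [[0.5, 0.0], [0.0, 0.5]]  # half weight on the neighbour average

for node, h in message_passing_layer(features, edges, w_self, w_neigh).items():
    print(node, h)  # Alice [1.0, 0.5], Acme [0.25, 1.0], Paris [0.0, 0.5]
```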

The advantages of Graph-based Micromodels include their ability to capture complex relationships between entities and to handle graph-structured data. They are also relatively fast to train and can be parallelized efficiently. However, they may require careful hyperparameter tuning and can be computationally expensive for large graphs.

Applications of Micromodels in NLP

Machine learning micromodels have numerous NLP applications across industries, improving language understanding and processing capabilities. These applications show how versatile micromodels are at handling complex language tasks efficiently.

Micromodels have been used successfully in several areas of NLP, improving the accuracy and efficiency of language processing tasks. These applications draw on the strengths of micromodels, such as their ability to capture localized patterns and relationships in language data.

Language Translation

Micromodels have been used effectively for language translation, enabling machines to understand and generate accurate translations. In this application, micromodels are trained on large datasets of parallel text, in which each input sentence is paired with its translation. By capturing localized patterns and relationships in these datasets, micromodels can produce more accurate and nuanced translations.

- Machine translation systems can use micromodels to translate with higher accuracy, especially for low-resource languages.

- Micromodels can be fine-tuned for specific language pairs, adapting to the unique characteristics of the languages being translated.

- With micromodels, translation systems can handle complex text, including idioms, colloquial expressions, and specialized terminology.

Text Summarization

Micromodels are also used for text summarization, enabling machines to automatically generate concise summaries of long documents. In this application, micromodels are trained on large datasets of articles, documents, or other texts, from which they learn to identify the most important information and rephrase it concisely.

- Summarization systems built on micromodels can identify the key points and supporting details in a document, so that the final summary accurately reflects its content.

- Micromodels can be fine-tuned for specific domains or industries, adapting to the unique characteristics of the text being summarized.

- With micromodels, summarization systems can handle long and complex texts, including technical documents, academic papers, and news articles.
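The systems described above typically rephrase content, which is abstractive summarization. Frequency-based extractive summarization is a much simpler, swapped-in technique that still shows the core idea of identifying the most important information. Each sentence is scored by how often its content words occur in the whole document, and the top-scoring sentences are kept. The stopword list and the example text are hypothetical.

```python
import re
from collections import Counter

# Hypothetical, deliberately short stopword list.
STOPWORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "it", "for", "on", "was", "that"}


def content_words(text: str):
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def summarize(text: str, n_sentences: int = 1) -> str:
    """Keep the n sentences whose content words are most frequent in the document."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s.strip()]
    freq = Counter(content_words(text))

    def score(sentence: str) -> float:
        tokens = content_words(sentence)
        return sum(freq[t] for t in tokens) / len(tokens) if tokens else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:n_sentences])  # restore the original sentence order
    return " ".join(sentences[i] for i in keep)


text = ("The new battery lasts two days. Battery life was the top complaint last year. "
        "The box is blue.")
print(summarize(text, 1))  # The new battery lasts two days.
```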

Sentiment Analysis

Micromodels are also used for sentiment analysis, enabling machines to identify and categorize the opinions and emotions expressed in text. In this application, micromodels are trained on large datasets of labeled text, where each text is annotated with its sentiment (positive, negative, neutral, and so on).

- Sentiment analysis systems built on micromodels can identify the overall sentiment of a text, including emotions and opinions, with high accuracy.

- Micromodels can be fine-tuned for specific domains or industries, adapting to the particular language and tone of the text being analyzed.

- With micromodels, sentiment analysis systems can handle a wide range of texts, including social media posts, product reviews, and customer feedback.

Future Directions in Micromodel Research

As micromodel research continues to evolve, it is worth considering future directions that could further extend the capabilities of these models. The potential benefits and challenges of these directions are discussed below.

Integration with Other AI Techniques

Integrating micromodels with other AI techniques, such as deep learning and reinforcement learning, can lead to more powerful and versatile models. Such integration lets micromodels draw on the strengths of other techniques and improve their performance on complex tasks. For example, combining micromodels with deep learning can allow them to learn from large datasets and improve their accuracy.

- Transfer learning: Micromodels can be combined with other AI techniques to enable transfer learning, where a pre-trained model is fine-tuned for a specific task.

- Multi-task learning: Micromodels can be combined with other AI techniques to enable multi-task learning, where a single model learns to perform several tasks at once.

- Adversarial training: Micromodels can be combined with other AI techniques to enable adversarial training, where a model is trained to be robust against adversarial attacks.

Development of New Micromodel Architectures

New micromodel architectures could let the models perform more complex tasks and handle larger datasets. These architectures can be designed to exploit the strengths of micromodels, such as their ability to learn from small datasets. For example, a new architecture could be developed to let micromodels learn from sequential data.

- RNN-based micromodels: Designed to learn from sequential data, such as text or speech.

- Transformer-based micromodels: Designed to process whole sequences in parallel using self-attention, for data such as text or images split into patches.

- Graph-based micromodels: Designed to learn from complex relationships between data points.

Application to Other Domains

Micromodels can also be applied beyond text, in domains such as computer vision, speech processing, and recommendation systems. These applications can improve model performance and let micromodels tackle more complex tasks.

- Image classification: Micromodels can learn from images and classify them into different categories.

- Speech recognition: Micromodels can learn from speech data and recognize spoken words.

- Recommendation systems: Micromodels can learn from user behavior and recommend personalized products.

Closing Notes

In conclusion, micromodels in machine learning NLP are a game-changing technology with the potential to revolutionize artificial intelligence. As research advances and new applications emerge, it is important to understand the principles and benefits of micromodels so that we can harness their power to build smarter AI solutions for a brighter future.

Common Questions

What is the main advantage of micromodels in machine learning NLP?

Micromodels provide a more accurate and nuanced understanding of complex tasks, enabling researchers and developers to unlock new applications and improve existing ones.

How do micromodels differ from traditional machine learning models?

Traditional models are large, complex networks trained on vast amounts of data. Micromodels are small and modular, with each one focusing on a specific task, and they often combine symbolic reasoning with connectionist learning.

What are some common applications of micromodels in machine learning NLP?

Micromodels are used in language translation, text summarization, sentiment analysis, and other NLP applications.

What are some potential future directions in micromodel research?

Future research directions include integrating micromodels with other AI techniques, developing new micromodel architectures, and applying micromodels to other domains.