What's an epoch in machine learning all about, innit? Think of it like running laps in a marathon, where every lap is called an epoch, yeah? But instead of sweating buckets, we're training neural networks to get better at recognizing patterns, blud.

Epochs are basically the building blocks of machine learning, and they're a crucial part of how neural networks learn and improve. It's like the more laps we run, the better we get at recognizing the right route, mate.

Definition of Epoch in Machine Learning

In the context of machine learning, an epoch is one complete pass of a neural network through the training dataset. This means the network processes every sample in the training dataset exactly once during a single epoch. This concept is essential for understanding how neural networks learn from data.

In machine learning, neural networks are trained on large datasets to learn patterns and relationships between variables. Training typically involves iteratively adjusting the model's parameters to minimize the difference between its predictions and the actual outputs. An epoch is one full cycle of this process, in which the model sees every data point in the training dataset once. Within a single epoch the parameters are usually updated many times, once per batch.
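The short sketch below counts the parameter updates in one epoch, which shows the difference between an epoch and a single update. The helper name `updates_per_epoch` is hypothetical. It assumes that a final, smaller batch is kept rather than dropped.

```python
import math

def updates_per_epoch(num_samples: int, batch_size: int) -> int:
    """Parameter updates in one epoch, keeping a partial final batch."""
    if num_samples <= 0 or batch_size <= 0:
        raise ValueError("num_samples and batch_size must be positive")
    return math.ceil(num_samples / batch_size)

# 1,000 training samples in batches of 32, trained for 10 epochs
steps = updates_per_epoch(1000, 32)
print(steps, steps * 10)  # 32 updates per epoch, 320 in total
```

If the batch size equals the dataset size, an epoch contains exactly one update. That is the full-batch case.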

Epochs in Neural Network Training

Epochs are an essential part of neural network training because they let the model learn from the entire training dataset rather than just a subset of it. Training a neural network can be thought of as a cycle of epochs. Within each epoch, the following steps are repeated for every batch of training data:

- Forward pass: The model processes the input data, makes predictions, and computes the loss.

- Backward pass: The model calculates the gradients of the loss with respect to each parameter, and the optimization algorithm updates the parameters. Both steps run once per batch, so the parameters are updated many times during a single epoch.
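The forward/backward cycle can be sketched in plain Python on a toy problem. Every detail here is an illustrative assumption:

- a one-feature linear model `y = w * x + b`;
- mean squared error as the loss;
- noiseless synthetic data;
- arbitrary values for the learning rate, batch size and number of epochs.

Each epoch shuffles the data. It then performs one forward pass, one gradient computation and one parameter update per mini-batch.

```python
import random

def train(data, epochs=200, batch_size=4, lr=0.05, seed=0):
    """Fit y = w * x + b with mini-batch gradient descent.

    Returns (w, b, epoch_losses), where epoch_losses[i] is the mean squared
    error over epoch i, measured on each batch just before its update.
    """
    data = list(data)               # copy so the caller's list is not reordered
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    epoch_losses = []
    for _ in range(epochs):
        rng.shuffle(data)           # new sample order every epoch
        squared_error = 0.0
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            # Forward pass: predictions and residuals for this batch
            errors = [(w * x + b) - y for x, y in batch]
            squared_error += sum(e * e for e in errors)
            # Backward pass: gradients of the batch MSE with respect to w and b
            n = len(batch)
            grad_w = 2 * sum(e * x for e, (x, _) in zip(errors, batch)) / n
            grad_b = 2 * sum(errors) / n
            # Optimizer step: plain gradient descent
            w -= lr * grad_w
            b -= lr * grad_b
        epoch_losses.append(squared_error / len(data))
    return w, b, epoch_losses

# Toy, noiseless data on the line y = 2x + 1
points = [(x / 10, 2 * (x / 10) + 1) for x in range(20)]
w, b, losses = train(points)
print(f"w={w:.3f} b={b:.3f} first loss={losses[0]:.3f} last loss={losses[-1]:.6f}")
```

With 20 samples and a batch size of 4, each epoch performs five parameter updates. Over 200 epochs that adds up to 1,000 updates.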

The epoch is also a natural unit for scheduling the learning rate and applying regularization techniques. For example, the training data is usually shuffled at the start of each epoch. The model then sees the samples in a different order and in different batch combinations, which tends to help generalization. The number of epochs, the batch size, and the learning rate are key hyperparameters that need to be tuned for optimal model performance.

Key Characteristics of Epochs

Several key characteristics of epoch-based training affect the performance of a neural network:

- Batch Size: The number of samples processed together in one forward and backward pass. One epoch therefore consists of about (dataset size / batch size) parameter updates.

- Evaluation Metrics: Metrics such as loss, accuracy, and precision are often evaluated at the end of each epoch to monitor progress.

- Early Stopping: Training is halted when a predefined metric, typically the validation loss, stops improving for a set number of epochs.

- Learning Rate: The step size that scales each parameter update. It is often adjusted from one epoch to the next.

- Regularization Techniques: These include dropout, weight decay, and batch normalization, which are applied throughout every epoch.

Impact of Epochs on Model Performance

The number of epochs, that is, how long training runs, has a direct impact on model performance. If training runs for too many epochs, the model may learn specific artifacts of the training data rather than general patterns, which leads to overfitting. Conversely, too few epochs may not give the model enough learning opportunities to reach optimal performance.

Finding the right training length is therefore a delicate balance that requires experimentation and careful hyperparameter tuning. Adjusting training settings, using data augmentation, and applying early stopping can also help prevent overfitting and make training more efficient.

Common Scenarios Where Epochs are Important

Epochs play a critical role in several machine learning applications, including:

- Deep learning models: Multiple epochs are essential for training large neural networks, where a single pass over the data is usually not enough for the model to converge.

- Time-series prediction: Models that forecast sequences with a time dimension, often RNNs, LSTMs, or transformers, are trained over many epochs.

- Image classification: Training on large image datasets typically takes multiple epochs, because models may need many passes to learn robust features.

- Causal inference: Models used to analyze complex relationships between variables, for example in time-series data, are likewise trained over multiple epochs.

Conclusion

Epochs are a fundamental concept in machine learning, particularly in neural network training. Understanding epochs is essential for choosing the right hyperparameters, monitoring progress, and preventing overfitting. Using epochs effectively can significantly affect model performance and accuracy, which makes this a crucial aspect of machine learning engineering.

Importance of Epochs in Deep Learning

Epochs play a crucial role in deep learning because they let the model learn from the training data iteratively. Each pass gives the model another chance to improve its performance, learn from its errors, and adapt to the complexities of the data.

The Impact of Epochs on Model Performance

The number of epochs has a significant impact on the performance of a deep learning model because it governs the trade-off between overfitting and underfitting. Too many epochs can result in overfitting, where the model becomes too specialized to the training data and fails to generalize well to new, unseen data. Too few epochs may result in underfitting, where the model fails to capture the underlying patterns in the data.

Overfitting vs. Underfitting

Overfitting occurs when a model is too complex and fits the noise in the training data, which results in poor performance on new data. Underfitting occurs when a model is too simple and fails to capture the underlying patterns in the data, which also results in poor performance.

- Model Complexity: A model with too many parameters or layers may overfit.

- Early Stopping: Regularly monitoring the model's performance on a validation set and stopping training before overfitting sets in can help prevent overfitting.
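Here is a minimal sketch of patience-based early stopping. It assumes the validation loss is recorded once per epoch. The function name, the `patience` and `min_delta` parameters, and the loss values are illustrative. Frameworks such as Keras and PyTorch Lightning provide early-stopping callbacks built on the same idea.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch), both 1-based.

    Training stops once the validation loss has failed to improve by more
    than `min_delta` for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = 0
    waited = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0  # new best: reset the counter
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    return len(val_losses), best_epoch

# Hypothetical validation losses: improving, then rising as the model overfits
val = [0.90, 0.70, 0.55, 0.50, 0.52, 0.53, 0.56, 0.60]
print(early_stopping_epoch(val, patience=3))  # (7, 4)
```

In practice the weights saved at `best_epoch` are usually restored, rather than the weights from the epoch where training stopped.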

Underfitting, on the other hand, can be addressed in three ways: increasing the number of epochs, increasing the model's capacity, or relaxing regularization. Overfitting is commonly countered with techniques such as these:

| Technique | Description |
|---|---|
| Regularization | Adds a penalty term to the loss function to discourage large weights and prevent overfitting. |
| Data Augmentation | Generates additional training data by applying random transformations to the existing data. |
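As a concrete version of the Regularization row, here is an L2 (weight decay) penalty added to a mean squared error loss. The `lam` coefficient, the weights and the predictions are arbitrary illustrative values.

```python
def mse(predictions, targets):
    """Mean squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

def l2_regularized_loss(predictions, targets, weights, lam=0.01):
    """MSE plus an L2 penalty lam * sum(w^2) that grows with large weights."""
    return mse(predictions, targets) + lam * sum(w * w for w in weights)

preds, targets = [1.0, 2.0, 3.0], [1.5, 2.0, 2.5]
weights = [3.0, -4.0]  # sum of squares = 25, so the penalty is 0.01 * 25 = 0.25
print(mse(preds, targets), l2_regularized_loss(preds, targets, weights))
```

Larger values of `lam` push the optimizer harder toward small weights, trading some training fit for simpler models.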

Types of Epochs in Machine Learning

In machine learning, epochs are a crucial part of training and evaluating models. It helps to distinguish between the different kinds of epochs that make up the learning process. This section describes the main types, along with their purpose and significance.

Training Epochs

Training epochs are the primary focus of machine learning model development. These are the epochs in which the model's parameters are updated based on the training data. During each training epoch, the model works through the training data one batch at a time and computes the loss function for each batch. The parameters are then updated using backpropagation and an optimization algorithm to minimize the loss. The process is repeated for multiple epochs, allowing the model to learn and improve its performance on the training data.

- Initial Epochs: In the early epochs, the model's parameters change rapidly and the loss drops significantly. The model is still far from a good solution, so each update yields large gains.

- Convergence Epochs: As the model approaches convergence, the loss decreases more slowly and the parameters stabilize. This indicates that training has reached a point of diminishing returns, and further training may not significantly improve performance.

Validation Epochs

Validation epochs evaluate the model's performance on a separate validation dataset, usually after each training epoch. They are essential for detecting overfitting, where the model becomes too specialized to the training data and fails to generalize well to new data. During a validation epoch, the model makes predictions on the validation data and the loss is calculated, but the parameters are not updated. This gives an estimate of the model's performance on unseen data and a more accurate picture of its ability to generalize.

Testing Epochs

Testing epochs evaluate the model's performance on a separate test dataset, typically once, after training and model selection are complete. They are crucial for assessing how well the model generalizes to new, unseen data. During testing, the model makes predictions on the test data, and the loss and other metrics are calculated. Comparing the model's performance on the test data with its performance on the training data gives a comprehensive picture of its strengths and weaknesses.

Other Types of Epochs

In addition to training, validation, and testing epochs, other types of epochs are used in machine learning, such as:

- Early Stopping Epochs: Training is stopped early if the model's performance on the validation data does not improve for a certain number of epochs.

- Warm-Up Epochs: During the first few epochs, the learning rate is gradually increased from a small value up to its target value. This stabilizes early training and can make the model less sensitive to the choice of learning rate.

- Decay Epochs: The learning rate is gradually reduced during training, which helps fine-tune the model's parameters and improve its performance on the validation data.
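One common way to implement warm-up and decay is a single per-epoch learning rate schedule: a linear learning-rate warm-up followed by step decay. The function name and every default value below are illustrative assumptions, not recommended settings.

```python
def learning_rate(epoch, base_lr=0.1, warmup_epochs=5, decay_every=30, decay_factor=0.1):
    """Learning rate for a 0-based epoch index: linear warm-up, then step decay."""
    if epoch < warmup_epochs:
        # Ramp up linearly: base_lr / warmup_epochs, 2 * base_lr / warmup_epochs, ..., base_lr
        return base_lr * (epoch + 1) / warmup_epochs
    # After warm-up, multiply by decay_factor once every `decay_every` epochs
    completed_decays = (epoch - warmup_epochs) // decay_every
    return base_lr * decay_factor ** completed_decays

for epoch in (0, 2, 4, 5, 34, 35, 65):
    print(epoch, round(learning_rate(epoch), 6))
```

The training loop would call `learning_rate(epoch)` at the start of each epoch and pass the result to the optimizer.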

Each of these plays an important role in the machine learning process, and understanding their purpose is essential for developing effective and efficient models.

Role of Epochs in Model Evaluation

Epochs play a pivotal role in evaluating the performance of a machine learning model. Because training is divided into epochs, the model can be evaluated at regular intervals. This gives insight into its performance, accuracy, and consistency over time.

Metric Evaluation During Each Epoch

The performance of a machine learning model is usually evaluated using a set of metrics. Common metrics used to evaluate model performance during each epoch include:

- Loss Function: A measure of the difference between predicted and actual values. A lower loss indicates better model performance.

- Accuracy: The proportion of correct predictions made by the model.

- Precision: The proportion of true positives among all positive predictions.

- Recall: The proportion of true positives among all actual positive instances.

- F1-Score: The harmonic mean of precision and recall. It is often used when both false positives and false negatives matter.

- Validation Loss: The loss measured on a separate validation set. If the validation loss starts to increase after a certain point, the model may be overfitting.

- Accuracy, Precision, and Recall on held-out data: If these metrics are much better on the training set than on the validation or test set, the model may be overfitting.

These metrics are calculated at the end of each epoch and used to assess the model's performance. The specific metrics used may vary depending on the problem and the type of machine learning task.

Per-epoch metrics also drive several training techniques and optimizers:

- Dynamic learning rate adjustment: The learning rate is adjusted during training based on the model's performance in each epoch. This lets the algorithm balance exploration and exploitation and helps the model converge to a good solution.

- Epsilon-greedy exploration: In reinforcement learning, the agent takes a random action with probability epsilon instead of its current best action. Epsilon is often decayed as training progresses. This helps the agent avoid settling too early on a poor strategy.

- Early stopping: Training stops when the model's performance on the validation set starts to degrade. This prevents overfitting and helps the model generalize well to unseen data.

- Adagrad: Adapts the learning rate for each parameter individually by dividing it by the square root of the accumulated sum of that parameter's past squared gradients.

- Adam: Combines the benefits of Adagrad and RMSProp. It adapts the learning rate for each parameter using running averages of both the gradient and its squared magnitude.

- Learning rate schedules: Adjust the learning rate according to a predetermined schedule, for example by decaying it every few epochs. Reduce-on-plateau variants instead adjust it in response to validation performance.

> Loss function + action taken -> epoch progression

Practical tips for monitoring training:

- Use a logging framework, such as TensorBoard or PyTorch Lightning's built-in logging, to track training metrics.

- Regularly visualize training metrics, such as loss and accuracy, to identify trends and problems.

- Use a validation set to evaluate model performance and adjust training parameters as needed.

Choosing the number of epochs:

- Start with a small number of epochs (e.g., 10-20) and gradually increase it as needed.

- Use early stopping to end training when the model's performance on the validation set starts to degrade.

- Regularly evaluate model performance on a validation set to decide whether additional epochs are needed.

Saving model weights:

- Save model weights at regular intervals (e.g., every 10 epochs) using a logging framework or a custom function.

- Use a consistent naming convention for saved weights to avoid confusion.

- Load saved weights only when needed (e.g., when resuming training from a previous checkpoint).
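The plain-Python sketch below shows how several of these numbers are produced at the end of an epoch. It computes accuracy, precision, recall and F1 for a binary classifier from its predictions on a validation set. The labels are invented. In practice, a library such as scikit-learn provides equivalent functions.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]  # one missed positive, one false alarm
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```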

Interpreting Metrics During Epoch Evaluation

Once the metrics are calculated, they can be used to evaluate the model's performance. For example, if the loss is decreasing over epochs, the model is improving. If the loss is not decreasing, that may indicate the model is not learning from the data.

| Epoch | Loss | Accuracy | Precision | Recall | F1-Score |
|-------|------|----------|-----------|--------|----------|
| 1 | 0.5 | 0.8 | 0.9 | 0.7 | 0.8 |
| 2 | 0.4 | 0.9 | 0.95 | 0.85 | 0.9 |
| 3 | 0.3 | 0.95 | 0.98 | 0.92 | 0.95 |

In this example, the loss decreases over the epochs, which indicates that the model is improving.
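F1 is the harmonic mean of precision and recall, so the F1 column can be checked against those two columns. This short sketch recomputes F1 from the table's illustrative values. The results agree with the table to within rounding: epoch 1 gives about 0.79 rather than exactly 0.8. The sketch also confirms that the loss falls at every epoch.

```python
# Precision and recall per epoch, copied from the example table
history = {1: (0.9, 0.7), 2: (0.95, 0.85), 3: (0.98, 0.92)}
losses = [0.5, 0.4, 0.3]

f1_by_epoch = {
    epoch: 2 * p * r / (p + r)  # harmonic mean of precision and recall
    for epoch, (p, r) in history.items()
}
loss_always_falls = all(later < earlier for earlier, later in zip(losses, losses[1:]))

print({epoch: round(f1, 2) for epoch, f1 in f1_by_epoch.items()}, loss_always_falls)
```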

Evaluating Model Performance Over Epochs

Evaluating model performance over epochs is a crucial part of tuning hyperparameters and improving a model. By analyzing how the model performs from epoch to epoch, data scientists can identify where it may be struggling and take corrective action.

For example, if the model's accuracy plateaus over epochs, it may have reached its limit, and further training might not improve performance. In such cases, tuning hyperparameters or changing the model's architecture may be necessary.

Epoch evaluation is a crucial part of machine learning model development because it provides insight into the model's performance and informs decisions throughout development.

Designing Epoch-Based Training Strategies

Designing an epoch-based training strategy is a crucial part of machine learning, because it directly affects the performance and efficiency of the model. Choosing the right strategy helps data scientists get the best results from their models. This section covers the main epoch-based training strategies and their advantages and drawbacks.

Gradient-Based Descent

Gradient-based descent is a widely used training strategy that relies on gradients to optimize the model's parameters. It iteratively updates the parameters in the direction opposite to the gradient of the loss function, which reduces the loss at each step. In its basic full-batch form, the gradient is computed over the entire training set, so each epoch produces a single parameter update. The process involves two key components: the learning rate and the gradient.

The learning rate determines the step size of each update, while the gradient gives the direction of the update. Gradient-based descent is effective, particularly when combined with techniques such as momentum and Nesterov acceleration. These can speed up convergence and help the optimizer move past flat regions and shallow local minima.
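Here is a one-dimensional sketch of the update rule and the effect of momentum, using a made-up quadratic loss. The function, learning rate and momentum value are assumptions chosen only to make the difference visible. On a shallow bowl, plain gradient descent creeps toward the minimum, while momentum builds up speed by reusing past steps.

```python
def gradient_descent(grad, x0, lr=0.1, momentum=0.0, steps=100):
    """Minimize a 1-D function from its gradient, optionally with heavy-ball momentum."""
    x, velocity = x0, 0.0
    for _ in range(steps):
        # Velocity accumulates past steps; with momentum=0 this is plain gradient descent
        velocity = momentum * velocity - lr * grad(x)
        x += velocity
    return x

# A shallow bowl: f(x) = 0.01 * (x - 3)**2 has its minimum at x = 3
shallow_grad = lambda x: 0.02 * (x - 3)

plain = gradient_descent(shallow_grad, x0=0.0)
with_momentum = gradient_descent(shallow_grad, x0=0.0, momentum=0.9)
print(f"plain: {plain:.3f}, momentum: {with_momentum:.3f}")  # momentum ends much closer to 3
```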

Stochastic Gradient Descent

Stochastic gradient descent (SGD) is a variant of gradient-based descent that uses a single data point to approximate the gradient at each iteration. This is particularly useful for large datasets, because it reduces the computational cost and memory requirements of each update. However, single-sample gradient estimates are noisy, so SGD usually needs a smaller or gradually decaying learning rate. With too high a learning rate, the updates oscillate and performance suffers.

SGD is often used in online learning scenarios, where the model needs to adapt to new data as it becomes available. Because it updates on one data point at a time, SGD can quickly adapt to changes in the data distribution, making it effective for real-time applications.

Mini-Batch Gradient Descent

Mini-batch gradient descent is a compromise between full-batch gradient descent and SGD. Instead of a single data point, it uses a small batch of data points for each update, typically between 16 and 512. It offers a balance between gradient accuracy and computational efficiency, which makes it a popular choice for many machine learning applications.

Mini-batch gradient descent is particularly effective for large datasets. It gives a more accurate approximation of the gradient than SGD while keeping the computational cost lower than full-batch gradient descent.
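The three variants differ only in how many samples feed each update. A rough sketch, assuming an in-memory Python list as the dataset: one batching helper covers all three cases depending on `batch_size`.

- `batch_size` equal to the dataset size gives full-batch gradient descent.
- `batch_size` of 1 gives SGD.
- Anything in between gives mini-batch gradient descent.

The helper name and the sizes are hypothetical.

```python
import random

def iterate_batches(data, batch_size, rng):
    """Yield one epoch of shuffled batches; every sample appears exactly once."""
    order = list(range(len(data)))
    rng.shuffle(order)
    for start in range(0, len(order), batch_size):
        yield [data[i] for i in order[start:start + batch_size]]

data = list(range(1000))
rng = random.Random(42)
updates = {}
for name, size in [("full-batch GD", len(data)), ("SGD", 1), ("mini-batch GD", 64)]:
    batches = list(iterate_batches(data, size, rng))
    updates[name] = len(batches)  # one parameter update per batch
print(updates)  # {'full-batch GD': 1, 'SGD': 1000, 'mini-batch GD': 16}
```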

> The choice of epoch-based training strategy ultimately depends on the specific requirements of the problem and the characteristics of the data. By understanding the strengths and weaknesses of each strategy, data scientists can make informed decisions to optimize their models and achieve the best possible results.

| Training Strategy | Advantages | Disadvantages |
|---|---|---|
| Gradient-Based Descent (full batch) | Exact gradient and stable updates; can be combined with momentum and other techniques to improve convergence | Computationally expensive on large datasets (one update per epoch); may get stuck in local minima |
| Stochastic Gradient Descent | Fast, frequent updates; suitable for online learning scenarios | Noisy updates; needs a small or decaying learning rate, otherwise it may oscillate and lose performance |
| Mini-Batch Gradient Descent | Balance between gradient accuracy and computational efficiency; suitable for large datasets | May be computationally expensive for very large datasets |

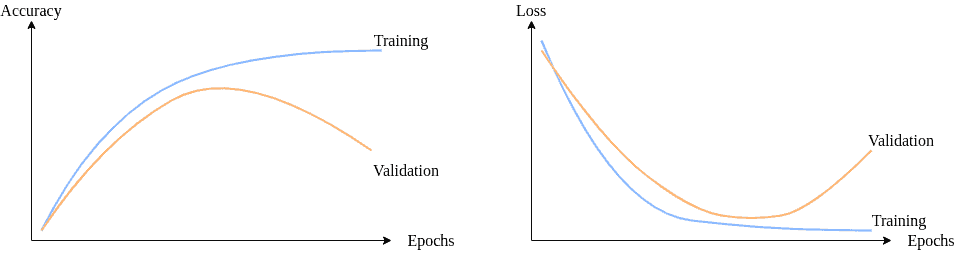

Visualizing Epoch Progress in Machine Learning

Visualizing epoch progress is a crucial step in understanding and optimizing the performance of machine learning models. By visualizing training progress, developers can quickly identify the strengths and weaknesses of their models and make data-driven decisions to improve them.

Line Plots of Loss and Accuracy

Line plots of loss and accuracy give a clear, concise visual representation of the model's performance over epochs. The x-axis represents the epoch number and the y-axis the loss or accuracy value. This visualization can help identify trends, patterns, and correlations between the model's performance and the training parameters.

A line plot of loss and accuracy can be created with libraries such as Matplotlib in Python. For example, suppose a model has been trained for 10 epochs and recorded the following loss and accuracy values:

loss = [0.1, 0.05, 0.03, 0.02, 0.01, 0.005, 0.002, 0.001, 0.0005, 0.0002]

accuracy = [0.6, 0.7, 0.75, 0.8, 0.85, 0.86, 0.87, 0.88, 0.89, 0.9]

The line plot would show a clear decline in loss and a rise in accuracy as the epochs progress.
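The same trends can also be confirmed numerically, alongside a plot. This sketch repeats the two lists from the example above. It checks that loss falls and accuracy rises at every epoch, then finds the epoch with the largest accuracy jump. The helper name `is_monotonic` is hypothetical.

```python
loss = [0.1, 0.05, 0.03, 0.02, 0.01, 0.005, 0.002, 0.001, 0.0005, 0.0002]
accuracy = [0.6, 0.7, 0.75, 0.8, 0.85, 0.86, 0.87, 0.88, 0.89, 0.9]

def is_monotonic(values, decreasing=True):
    """True if every value is strictly lower (or higher) than the one before it."""
    pairs = list(zip(values, values[1:]))
    if decreasing:
        return all(later < earlier for earlier, later in pairs)
    return all(later > earlier for earlier, later in pairs)

# Accuracy gained at each epoch relative to the previous one (epochs numbered from 1)
gains = {epoch: accuracy[epoch - 1] - accuracy[epoch - 2] for epoch in range(2, len(accuracy) + 1)}
biggest_jump = max(gains, key=gains.get)

print(is_monotonic(loss), is_monotonic(accuracy, decreasing=False), biggest_jump)
```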

Bar Charts of Epoch-Wise Performance

Bar charts of epoch-wise performance give a clear comparison of the model's performance at each epoch. The x-axis represents the epoch number and the y-axis the loss or accuracy value. This visualization can help identify the strongest and weakest epochs and inform decisions about how to improve the model.

A bar chart of epoch-wise performance can also be created with Matplotlib in Python. For example, suppose a model has been trained for 10 epochs with the following losses:

loss = [0.1, 0.05, 0.03, 0.02, 0.01, 0.005, 0.002, 0.001, 0.0005, 0.0002]

The bar chart would show a clear downward trend in loss as the epochs progress.
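When Matplotlib is not at hand, a rough text bar chart gives the same at-a-glance comparison in a terminal. This is a dependency-free stand-in swapped in for illustration, not a Matplotlib example. `text_bar_chart` is a hypothetical helper, and bar lengths are scaled to the largest value.

```python
loss = [0.1, 0.05, 0.03, 0.02, 0.01, 0.005, 0.002, 0.001, 0.0005, 0.0002]

def text_bar_chart(values, width=40):
    """Return one line per epoch with a '#' bar proportional to the value."""
    peak = max(values)
    lines = []
    for epoch, value in enumerate(values, start=1):
        bar = "#" * round(width * value / peak)
        lines.append(f"epoch {epoch:2d} | {bar} {value}")
    return lines

chart = text_bar_chart(loss)
print("\n".join(chart))
```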

Benefits of Visualizing Epoch Progress

Visualizing epoch progress has several benefits, including:

* Identifying trends and patterns in the model's performance

* Making data-driven decisions to improve the model

* Comparing the model's performance across epochs

* Identifying the strongest and weakest epochs

By visualizing epoch progress, developers can gain valuable insight into their model's performance and make informed decisions to improve it.

Handling Overfitting and Underfitting During Epochs

Overfitting and underfitting are two common problems that arise during epoch-based training. Overfitting occurs when a model becomes too complex and starts to memorize the training data, which results in poor performance on new, unseen data. Underfitting occurs when a model is too simple and fails to capture the underlying patterns in the data. Handling both during training is crucial to achieving optimal model performance.

Strategies for Handling Overfitting

One of the simplest ways to handle overfitting is to regularize the model with techniques such as L1 and L2 regularization. Regularization adds a penalty term to the loss function that encourages smaller weights, which reduces the effective complexity of the model. Another strategy is dropout. Dropout randomly zeroes out a fraction of the neurons' outputs during training, so the model cannot rely too heavily on any single feature.
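Dropout is simple enough to sketch directly. This uses the common "inverted dropout" formulation: surviving activations are rescaled during training, so nothing needs to change at evaluation time. The function is a hypothetical plain-Python illustration, not a framework API.

```python
import random

def dropout(activations, p=0.5, training=True, rng=random):
    """Inverted dropout: zero each activation with probability p during training
    and scale the survivors by 1 / (1 - p) so the expected value is unchanged."""
    if not training or p == 0.0:
        return list(activations)  # evaluation mode: pass values through untouched
    keep = 1.0 - p
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

inputs = [1.0, 2.0, 3.0, 4.0]
sample = dropout(inputs, p=0.5, rng=random.Random(0))
print(sample)                            # some entries zeroed, the rest doubled
print(dropout(inputs, training=False))   # unchanged at evaluation time
```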

Strategies for Handling Underfitting

To handle underfitting, we can increase the capacity of the model by adding more layers or neurons, or we can train for more epochs. Trying different activation functions, such as ReLU or leaky ReLU, may also help the model learn more complex patterns.

Detecting Overfitting and Underfitting

Several metrics can be used to detect overfitting and underfitting. One of the most commonly used is the validation loss, which measures the model's performance on a separate validation set. A validation loss that rises while the training loss keeps falling points to overfitting. Training and validation losses that both stay high point to underfitting. Metrics such as accuracy, precision, and recall can be compared between the training and validation sets in the same way.
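The comparison above can be written as a very rough heuristic over per-epoch loss curves. The function name `diagnose`, the `high_loss` threshold and the `window` size are arbitrary assumptions. Real projects usually judge these curves by eye or with problem-specific thresholds.

```python
def diagnose(train_losses, val_losses, high_loss=0.5, window=3):
    """Crude label from per-epoch losses; needs at least `window` epochs."""
    if train_losses[-1] > high_loss and val_losses[-1] > high_loss:
        return "underfitting"       # neither loss has come down far enough
    train_falling = train_losses[-1] < train_losses[-window]
    val_rising = val_losses[-1] > min(val_losses)  # validation worse than its best epoch
    if train_falling and val_rising:
        return "overfitting"
    return "no clear problem"

print(diagnose([0.9, 0.85, 0.84, 0.83], [0.95, 0.9, 0.89, 0.88]))  # underfitting
print(diagnose([0.6, 0.4, 0.2, 0.1], [0.6, 0.45, 0.5, 0.6]))        # overfitting
print(diagnose([0.6, 0.4, 0.3, 0.25], [0.65, 0.45, 0.35, 0.3]))     # no clear problem
```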

Evaluation Metrics for Overfitting and Underfitting

Here are some common evaluation metrics used to detect overfitting and underfitting in regression problems:

| Metric | Description |
|---|---|
| Coefficient of Determination (R-squared) | Measures the proportion of variance in the dependent variable that is predictable from the independent variable(s) |
| Mean Squared Error (MSE) | Measures the average squared difference between predicted and actual values |
| Mean Absolute Error (MAE) | Measures the average absolute difference between predicted and actual values |
| Root Mean Squared Error (RMSE) | Measures the square root of the average squared difference between predicted and actual values |
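Each metric in the table takes only a few lines of Python. They become an overfitting signal when they are computed separately on the training and validation sets at the end of each epoch and the gap between the two is tracked. The function and the sample values are illustrative.

```python
import math

def regression_metrics(y_true, y_pred):
    """MSE, MAE, RMSE and R-squared; assumes y_true is not constant."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    sse = sum(e * e for e in errors)               # sum of squared errors
    mean_true = sum(y_true) / n
    total_ss = sum((t - mean_true) ** 2 for t in y_true)
    mse = sse / n
    return {
        "MSE": mse,
        "MAE": sum(abs(e) for e in errors) / n,
        "RMSE": math.sqrt(mse),
        "R2": 1 - sse / total_ss,                  # share of variance explained
    }

metrics = regression_metrics([3.0, 5.0, 7.0, 9.0], [2.5, 5.0, 7.5, 9.0])
print(metrics)
```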

Developing Epoch-Aware Machine Learning Algorithms

Developing epoch-aware machine learning algorithms is crucial for improving the efficiency and effectiveness of training deep neural networks. Epoch-aware algorithms adapt their training strategies to the changing needs of the model as it progresses through training.

Epoch-aware algorithms are designed to be dynamic. They adjust their learning rate, exploration, and other hyperparameters during training based on the model's performance in each epoch. This adaptive approach lets the algorithm optimize the training process and improve the model's accuracy and generalization.

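Epoch-Awareness in Machine Learning Algorithms

Epoch-awareness is achieved through various techniques, such as dynamic learning rate adjustment, exploration strategies like epsilon-greedy, and early stopping.

Examples of Epoch-Aware Algorithms

Examples of epoch-aware algorithms include adaptive optimizers such as Adagrad and Adam, which adjust per-parameter step sizes as training proceeds. Learning rate schedules, which change the learning rate from epoch to epoch, are another example.

The key advantage of epoch-aware algorithms is their ability to adapt to changing model performance and optimize the training process as it runs. This can lead to improved model accuracy, faster convergence, and better generalization.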

Illustrative Example

Consider a deep neural network trained on the MNIST dataset. An epoch-aware algorithm adjusts the learning rate during training based on the model's performance in each epoch. As the model approaches a good solution, the learning rate is reduced. This lets the model make finer adjustments and avoid overshooting the minimum.

Epoch-aware algorithms can improve model accuracy and convergence speed by adaptively adjusting the learning rate and other hyperparameters.
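The MNIST scenario above can be sketched as a reduce-on-plateau rule. It mirrors the idea behind PyTorch's `ReduceLROnPlateau` scheduler and the Keras `ReduceLROnPlateau` callback. However, the class below is a hypothetical plain-Python stand-in, and the factor, patience and loss values are assumptions.

```python
class ReduceOnPlateau:
    """Hypothetical scheduler: cut the learning rate when validation loss stops improving."""

    def __init__(self, lr, factor=0.5, patience=2, min_lr=1e-6):
        self.lr = lr
        self.factor = factor        # multiply the learning rate by this on a plateau
        self.patience = patience    # epochs without improvement before cutting
        self.min_lr = min_lr
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Call once per epoch with the validation loss; returns the learning rate for the next epoch."""
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
            if self.bad_epochs >= self.patience:
                self.lr = max(self.lr * self.factor, self.min_lr)
                self.bad_epochs = 0
        return self.lr

scheduler = ReduceOnPlateau(lr=0.1)
val_losses = [0.50, 0.40, 0.41, 0.42, 0.39, 0.39, 0.40]  # made-up values
lrs = [scheduler.step(loss) for loss in val_losses]
print(lrs)  # [0.1, 0.1, 0.1, 0.05, 0.05, 0.05, 0.025]
```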

In summary, epoch-aware machine learning algorithms adapt to the changing needs of the model during training. This can result in improved accuracy, faster convergence, and better generalization.

Best Practices for Epoch-Based Training

Epoch-based training is a crucial part of deep learning and requires careful monitoring and optimization to get the best results. Two essential best practices are regularly monitoring training progress and adjusting the number of epochs based on performance.

Regularly Monitoring Training Progress

Monitoring training progress is vital to understanding how your model is performing and whether it is on track. This can be done by tracking metrics such as training loss, accuracy, and validation loss over time. Regular monitoring lets you spot problems early and adjust your model or training parameters as needed.
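A minimal, dependency-free way to track metrics epoch by epoch is to append one CSV row per epoch. The file can later be plotted or opened in a spreadsheet. The helper name, column names and values are illustrative. Tools such as TensorBoard do the same job with richer visualizations.

```python
import csv
import io

FIELDS = ["epoch", "train_loss", "val_loss", "val_accuracy"]

def log_epoch(writer, epoch, train_loss, val_loss, val_accuracy):
    """Append one row of metrics; call this at the end of every epoch."""
    writer.writerow({"epoch": epoch, "train_loss": train_loss,
                     "val_loss": val_loss, "val_accuracy": val_accuracy})

# An in-memory buffer stands in for open("metrics.csv", "w", newline="")
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
log_epoch(writer, 1, 0.62, 0.65, 0.78)  # made-up values
log_epoch(writer, 2, 0.48, 0.52, 0.83)
print(buffer.getvalue())
```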

Adjusting the Number of Epochs Based on Performance

The number of epochs is a critical hyperparameter in deep learning, and adjusting it based on performance can greatly affect the results. Training for too many epochs can cause overfitting, where the model memorizes the training data rather than generalizing to new data. Training for too few can leave it underfitted.

Saving and Loading Model Weights

Saving and loading model weights is an important part of epoch-based training. It allows you to resume training from a previous checkpoint or reuse a model that has already been trained on a similar task.
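Checkpointing can be sketched with the standard library alone. This assumes the weights fit in a JSON-serializable dict, which is only realistic for toy models. Real frameworks have their own formats, for example `torch.save` in PyTorch. The file-naming scheme and helper names are hypothetical, chosen to illustrate the consistent-naming advice.

```python
import json
import os
import tempfile

PREFIX = "checkpoint_epoch_"  # hypothetical naming convention

def save_checkpoint(directory, epoch, weights):
    """Save weights under a zero-padded name such as checkpoint_epoch_010.json."""
    path = os.path.join(directory, f"{PREFIX}{epoch:03d}.json")
    with open(path, "w") as f:
        json.dump({"epoch": epoch, "weights": weights}, f)
    return path

def load_latest_checkpoint(directory):
    """Return the newest checkpoint as a dict, or None if none exist."""
    # Zero-padded epoch numbers make alphabetical order match epoch order (up to 999)
    names = sorted(n for n in os.listdir(directory)
                   if n.startswith(PREFIX) and n.endswith(".json"))
    if not names:
        return None
    with open(os.path.join(directory, names[-1])) as f:
        return json.load(f)

with tempfile.TemporaryDirectory() as ckpt_dir:
    for epoch in (10, 20, 30):  # e.g. save every 10 epochs
        save_checkpoint(ckpt_dir, epoch, {"w": 0.1 * epoch, "b": 1.0})
    resumed = load_latest_checkpoint(ckpt_dir)

print(resumed["epoch"])  # 30, so training would resume from epoch 31
```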

By following these best practices, you can set up your epoch-based training for success and help your model achieve the best possible results.

Final Summary: What Is an Epoch in Machine Learning

So, to wrap it up, epochs are key to machine learning, and understanding them is like understanding the secret to getting better at anything, innit? Whether you're trying to recognize cat pictures or predict stock prices, training over the right number of epochs is the way to go, yeah?

FAQs

Q: What's the difference between a training epoch and a validation epoch?

A: A training epoch is when the model is fed data to learn from and its weights get updated. A validation epoch is when it's tested on held-out data, without any updates, to see how well it's doing, innit?

Q: How do epochs affect the performance of a model?

A: The more epochs, the better the model gets at recognizing patterns, up to a point. Too many epochs can lead to overfitting, blud.

Q: What's the purpose of visualizing epoch progress in machine learning?

A: It's like looking at a graph of your marathon runs, mate: it helps you track your progress and make the changes you need to improve, yeah?

Q: How do you detect overfitting and underfitting during epoch-based training?

A: You can use metrics like accuracy, loss, and precision to work out how well the model's doing. If training loss keeps dropping while validation loss climbs, that's overfitting; if both stay high, that's underfitting, innit?